(→CPU Complex (CCX)) |

(Fixed assumed typo, 1532 is not a common size) |


| (344 intermediate revisions by 59 users not shown) | |||

| Line 1: | Line 1: | ||

{{amd title|Zen|arch}} | {{amd title|Zen|arch}} | ||

{{microarchitecture | {{microarchitecture | ||

| − | | atype | + | |atype=CPU |

| − | | name | + | |name=Zen |

| − | | designer | + | |designer=AMD |

| − | | manufacturer | + | |manufacturer=GlobalFoundries |

| − | + | |introduction=March 2, 2017 | |

| − | | introduction | + | |process=14 nm |

| − | + | |cores=4 | |

| − | | process | + | |cores 2=6 |

| − | | cores | + | |cores 3=8 |

| − | | cores 2 | + | |cores 4=12 |

| − | | cores 3 | + | |cores 5=16 |

| − | | cores 4 | + | |cores 6=24 |

| − | | cores 5 | + | |cores 7=32 |

| + | |type=Superscalar | ||

| + | |oooe=Yes | ||

| + | |speculative=Yes | ||

| + | |renaming=Yes | ||

| + | |stages=19 | ||

| + | |decode=4-way | ||

| + | |isa=x86-64 | ||

| + | |extension=MOVBE | ||

| + | |extension 2=MMX | ||

| + | |extension 3=SSE | ||

| + | |extension 4=SSE2 | ||

| + | |extension 5=SSE3 | ||

| + | |extension 6=SSSE3 | ||

| + | |extension 7=SSE4.1 | ||

| + | |extension 8=SSE4.2 | ||

| + | |extension 9=POPCNT | ||

| + | |extension 10=AVX | ||

| + | |extension 11=AVX2 | ||

| + | |extension 12=AES | ||

| + | |extension 13=PCLMUL | ||

| + | |extension 14=RDRND | ||

| + | |extension 15=F16C | ||

| + | |extension 16=BMI | ||

| + | |extension 17=BMI2 | ||

| + | |extension 18=RDSEED | ||

| + | |extension 19=ADCX | ||

| + | |extension 20=PREFETCHW | ||

| + | |extension 21=CLFLUSHOPT | ||

| + | |extension 22=XSAVE | ||

| + | |extension 23=SHA | ||

| + | |extension 24=CLZERO | ||

| + | |pipeline=Yes | ||

| + | |issues=4 | ||

| + | |l1i=64 KiB | ||

| + | |l1i per=core | ||

| + | |l1i desc=4-way set associative | ||

| + | |l1d=32 KiB | ||

| + | |l1d per=core | ||

| + | |l1d desc=8-way set associative | ||

| + | |l2=512 KiB | ||

| + | |l2 per=core | ||

| + | |l2 desc=8-way set associative | ||

| + | |l3=2 MiB | ||

| + | |l3 per=core | ||

| + | |l3 desc=16-way set associative | ||

| + | |core names=Yes | ||

| + | |core name=Naples | ||

| + | |core name 2=Whitehaven | ||

| + | |core name 3=Summit Ridge | ||

| + | |core name 4=Raven Ridge | ||

| + | |core name 5=Snowy Owl | ||

| + | |core name 6=Great Horned Owl | ||

| + | |core name 7=Banded Kestrel | ||

| + | |successors=Yes | ||

| + | |predecessor=Excavator | ||

| + | |predecessor link=amd/microarchitectures/excavator | ||

| + | |predecessor 2=Puma | ||

| + | |predecessor 2 link=amd/microarchitectures/puma | ||

| + | |successor=Zen+ | ||

| + | |successor link=amd/microarchitectures/zen+ | ||

| + | |successor 2=Zen 2 | ||

| + | |successor 2 link=amd/microarchitectures/zen 2 | ||

| + | |successor 3=Zen 3 | ||

| + | |successor 3 link=amd/microarchitectures/zen 3 | ||

| + | |successor 4=Zen 4 | ||

| + | |successor 4 link=amd/microarchitectures/zen 4 | ||

| + | |successor 5=Zen 5 | ||

| + | |successor 5 link=amd/microarchitectures/zen 5 | ||

| + | }} | ||

| − | | | + | '''Zen''' ('''family 17h''') is the [[microarchitecture]] developed by [[AMD]] as a successor to both {{\\|Excavator}} and {{\\|Puma}}. Zen is an entirely new design, built from the ground up for an optimal balance of performance and power, capable of covering the entire computing spectrum from fanless notebooks to high-performance desktop computers. |

| − | + | Zen was officially launched on March 2, [[2017]]. Zen was replaced by {{\\|Zen+}} in [[2018]]. | |

| − | + | == Etymology == | |

| − | + | :[[File:amd-zen-black-logo.png|left|Zen Logo]] | |

| − | + | '''''Zen''''' was picked by Michael Clark, [[AMD]]'s senior fellow and lead architect. | |

| − | + | * Zen was picked to represent the balance needed between the competing aspects of a microprocessor: transistor allocation/die size, clock frequency restrictions, power limitations, and the new instructions to implement. | |

| − | + | * For performance desktop and mobile computing, Zen is branded as {{amd|Athlon}}, {{amd|Ryzen 3}}, {{amd|Ryzen 5}}, {{amd|Ryzen 7}}, {{amd|Ryzen 9}} and {{amd|Ryzen Threadripper}} processors. | |

| − | + | * For servers, Zen is branded as {{amd|EPYC}}. | |

== Codenames == | == Codenames == | ||

{| class="wikitable" | {| class="wikitable" | ||

|- | |- | ||

! Core !! C/T !! Target | ! Core !! C/T !! Target | ||

|- | |- | ||

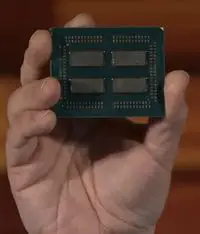

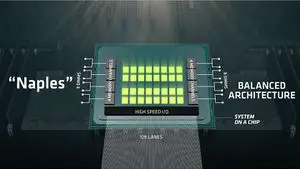

| − | | {{amd|Naples|l=core}} || 32/64 || High-end server multiprocessors | + | | {{amd|Naples|l=core}} || Up to 32/64 || High-end server [[multiprocessors]] |

|- | |- | ||

| − | | {{amd| | + | | {{amd|Whitehaven|l=core}} || Up to 16/32 || Enthusiast-market processors |

|- | |- | ||

| − | | {{amd|Summit Ridge|l=core}} || 8/16 || | + | | {{amd|Snowy Owl|l=core}} || Up to 16/32 || Embedded edge processors |

| + | |- | ||

| + | | {{amd|Summit Ridge|l=core}} || Up to 8/16 || Mainstream to high-end desktops | ||

| + | |- | ||

| + | | {{amd|Raven Ridge|l=core}} || Up to 4/8 || Mobile processors with {{\\|Vega}} GPU | ||

| + | |- | ||

| + | | {{amd|Great Horned Owl|l=core}} || Up to 4/8 || Embedded processors with {{\\|Vega}} GPU | ||

| + | |- | ||

| + | | {{amd|Dali|l=core}} || Up to 2/4 || Budget mobile processors with {{\\|Vega}} GPU | ||

| + | |- | ||

| + | | {{amd|Banded Kestrel|l=core}} || Up to 2/4 || Low-power, cost-sensitive embedded processors with {{\\|Vega}} GPU | ||

| + | |} | ||

| + | |||

| + | == Process Technology == | ||

| + | {{see also|14 nm process}} | ||

| + | [[Zen]] is manufactured on the [[14 nm process]] Low Power Plus (14LPP) from ''GlobalFoundries''. [[AMD]]'s previous microarchitectures were based on [[32 nm|32]] and [[28 nm|28]] nanometer processes. The jump to [[14 nm]] was part of [[AMD]]'s attempt to remain competitive against [[Intel]] (both {{intel|Skylake}} and {{intel|Kaby Lake}} are also manufactured on [[14 nm]]). The move to [[14 nm]] brings the usual benefits of a smaller node, such as reduced heat, reduced power consumption, and higher density for identical designs. | ||

| + | |||

| + | |||

| + | ===Comparison=== | ||

| + | {| class="wikitable" cellpadding="3px" style="border: 1px solid black; border-spacing: 0px; width: 100%; text-align:center;" | ||

| + | ! colspan="2" | Core | ||

| + | ! {{amd|Zen|l=arch}} | ||

| + | ! {{amd|Zen+|l=arch}} | ||

| + | ! {{amd|Zen 2|l=arch}} | ||

| + | ! {{amd|Zen 3|l=arch}} | ||

| + | ! {{amd|Zen 3+|l=arch}} | ||

| + | ! {{amd|Zen 4|l=arch}} | ||

| + | ! {{amd|Zen 4c|l=arch}} | ||

| + | ! {{amd|Zen 5|l=arch}} | ||

| + | ! {{amd|Zen 5c|l=arch}} | ||

| + | ! {{amd|Zen 6|l=arch}} | ||

| + | ! {{amd|Zen 6c|l=arch}} | ||

| + | |- | ||

| + | ! style="text-align: left;" rowspan="2" | Codename | ||

| + | ! style="text-align: left;" | Core | ||

| + | | | ||

| + | | | ||

| + | | ''Valhalla'' | ||

| + | | ''Cerberus'' | ||

| + | | | ||

| + | | ''Persephone'' | ||

| + | | ''Dionysus'' | ||

| + | | ''Nirvana'' | ||

| + | | ''Prometheus'' | ||

| + | | ''Morpheus'' | ||

| + | | ''Monarch'' | ||

| + | |- | ||

| + | ! style="text-align: left;" | CCD | ||

| + | | | ||

| + | | | ||

| + | | ''Aspen <br>Highlands'' | ||

| + | | ''Brecken <br>Ridge'' | ||

| + | | | ||

| + | | ''Durango'' | ||

| + | | ''Vindhya'' | ||

| + | | ''Eldora'' | ||

| + | | | ||

| + | | | ||

| + | | | ||

| + | |- | ||

| + | ! style="text-align: left;" rowspan="2" | Cores <br>(threads) | ||

| + | ! style="text-align: left;" | CCD | ||

| + | | | ||

| + | | | ||

| + | | 8 (16) | ||

| + | | 8 (16) | ||

| + | | | ||

| + | | 8 (16) | ||

| + | | 16 (32) | ||

| + | | 8 (16) | ||

| + | | 16 (32) | ||

| + | | | ||

| + | | | ||

| + | |- | ||

| + | ! style="text-align: left;" | CCX | ||

| + | | | ||

| + | | | ||

| + | | 4 (8) | ||

| + | | 8 (16) | ||

| + | | | ||

| + | | 8 (16) | ||

| + | | 8 (16) | ||

| + | | 8 (16) | ||

| + | | | ||

| + | | | ||

| + | | | ||

| + | |- | ||

| + | ! style="text-align: left;" rowspan="2" | L3 cache | ||

| + | ! style="text-align: left;" | CCD | ||

| + | | | ||

| + | | | ||

| + | | 32 MB | ||

| + | | 32 MB | ||

| + | | | ||

| + | | 32 MB | ||

| + | | 32 MB | ||

| + | | 32 MB | ||

| + | | 32 MB | ||

| + | | | ||

| + | | | ||

| + | |- | ||

| + | ! style="text-align: left;" | CCX | ||

| + | | | ||

| + | | | ||

| + | | 16 MB | ||

| + | | 32 MB | ||

| + | | | ||

| + | | 32 MB | ||

| + | | 16 MB | ||

| + | | 32 MB | ||

| + | | | ||

| + | | | ||

| + | | | ||

| + | |- | ||

| + | ! style="text-align: left;" rowspan="2" | Die size | ||

| + | ! style="text-align: left;" | CCD area | ||

| + | | 44 mm<sup>2</sup> | ||

| + | | | ||

| + | | 74 mm<sup>2</sup> | ||

| + | | 80.7 mm<sup>2</sup> | ||

| + | | | ||

| + | | 66.3 mm<sup>2</sup> | ||

| + | | 72.7 mm<sup>2</sup> | ||

| + | | 70.6 mm<sup>2</sup> | ||

| + | | | ||

| + | | | ||

| + | | | ||

| + | |- | ||

| + | ! style="text-align: left;" | Core area<br>(Fab node) | ||

| + | | 7 mm<sup>2</sup><br>([[14 nm]]) | ||

| + | | ([[12 nm]]) | ||

| + | | 2.83 mm<sup>2</sup><br>([[7 nm]]) | ||

| + | | 3.24 mm<sup>2</sup><br>([[7 nm]]) | ||

| + | | ([[7 nm]]) | ||

| + | | 3.84 mm<sup>2</sup><br>([[5 nm]]) | ||

| + | | 2.48 mm<sup>2</sup><br>([[5 nm]]) | ||

| + | | ([[4 nm]]) | ||

| + | | ([[3 nm]]) | ||

| + | | ([[2 nm]]) | ||

| + | | ([[2 nm]]) | ||

|- | |- | ||

|} | |} | ||

== Brands == | == Brands == | ||

| − | {{ | + | {| class="wikitable" style="text-align: center;" |

| + | |- | ||

| + | ! colspan="11" | AMD Zen-based processor brands | ||

| + | |- | ||

| + | ! rowspan="2" | Logo || rowspan="2" | Family !! rowspan="2" | General Description !! colspan="8" | Differentiating Features | ||

| + | |- | ||

| + | ! Cores !! Unlocked !! {{x86|AVX2}} !! [[SMT]] !! {{amd|XFR}} !! [[IGP]] !! [[ECC]] !! [[Multiprocessing|MP]] | ||

| + | |- | ||

| + | ! colspan="11" | Mainstream | ||

| + | |- | ||

| + | | [[File:amd ryzen 3 logo.png|75px|link=Ryzen 3]] || {{amd|Ryzen 3}} || Entry level Performance || [[quad-core|Quad]] || {{tchk|yes}} || {{tchk|yes}} || {{tchk|no}} || {{tchk|yes}} || {{tchk|some}} || {{tchk|yes}} || {{tchk|no}} | ||

| + | |- | ||

| + | | rowspan="2" | [[File:amd ryzen 5 logo.png|75px|link=Ryzen 5]] || rowspan="2" | {{amd|Ryzen 5}} || rowspan="2" | Mid-range Performance || [[quad-core|Quad]] || {{tchk|yes}} || {{tchk|yes}} || {{tchk|yes}} || {{tchk|yes}} || {{tchk|some}} || {{tchk|yes}} || {{tchk|no}} | ||

| + | |- | ||

| + | | [[hexa-core|Hexa]] || {{tchk|yes}} || {{tchk|yes}} || {{tchk|yes}} || {{tchk|yes}} || {{tchk|no}} || {{tchk|yes}} || {{tchk|no}} | ||

| + | |- | ||

| + | | [[File:amd ryzen 7 logo.png|75px|link=Ryzen 7]] || {{amd|Ryzen 7}} || High-end Performance || [[octa-core|Octa]] || {{tchk|yes}} || {{tchk|yes}} || {{tchk|yes}} || {{tchk|yes}} || {{tchk|no}} || {{tchk|yes}} || {{tchk|no}} | ||

| + | |- | ||

| + | | [[File:amd ryzen 9 logo.png|75px|link=Ryzen 9]] || {{amd|Ryzen 9}} || High-end Performance || [[12 cores|12]]-[[16 cores|16]] || {{tchk|yes}} || {{tchk|yes}} || {{tchk|yes}} || {{tchk|yes}} || {{tchk|no}} || {{tchk|yes}} || {{tchk|no}} | ||

| + | |- | ||

| + | ! colspan="11" | Enthusiasts / Workstations | ||

| + | |- | ||

| + | | [[File:ryzen threadripper logo.png|75px|link=Ryzen Threadripper]] || {{amd|Ryzen Threadripper}} || Enthusiasts || [[8 cores|8]]-[[16 cores|16]] || {{tchk|yes}} || {{tchk|yes}} || {{tchk|yes}} || {{tchk|yes}} || {{tchk|no}} || {{tchk|yes}} || {{tchk|no}} | ||

| + | |- | ||

| + | ! colspan="11" | Servers | ||

| + | |- | ||

| + | | [[File:amd epyc logo.png|75px|link=amd/epyc]] || {{amd|EPYC}} || High-performance Server Processor || [[8 cores|8]]-[[32 cores|32]] || {{tchk|no}} || {{tchk|yes}} || {{tchk|yes}} || || {{tchk|no}} || {{tchk|yes}} || {{tchk|yes}} | ||

| + | |- | ||

| + | ! colspan="11" | Embedded / Edge | ||

| + | |- | ||

| + | | [[File:epyc embedded logo.png|75px|link=amd/epyc embedded]] || {{amd|EPYC Embedded}} || Embedded / Edge Server Processor || [[8 cores|8]]-[[16 cores|16]] || {{tchk|no}} || {{tchk|yes}} || {{tchk|some}} || || {{tchk|no}} || {{tchk|yes}} || {{tchk|no}} | ||

| + | |- | ||

| + | | [[File:ryzen embedded logo.png|75px|link=amd/ryzen embedded]] || {{amd|Ryzen Embedded}} || Embedded APUs || [[4 cores|4]] || || {{tchk|yes}} || {{tchk|some}} || || {{tchk|yes}} || {{tchk|yes}} || {{tchk|no}} | ||

| + | |} | ||

| + | |||

| + | * '''Note:''' While a model has an unlocked multiplier, not all chipsets support overclocking. (see [[#Sockets/Platform|§Sockets]]) | ||

| + | * '''Note:''' 'X' models get "Full XFR", which provides an additional +100 MHz (+200 MHz for the {{amd|1500X}} and the {{amd|Threadripper}} line) <br>when sufficient thermal and electrical headroom is available. Non-X models are limited to +50 MHz. | ||
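The XFR note above amounts to a simple offset rule on top of the boost clock. The following is a minimal Python sketch of that rule only; the helper name `xfr_ceiling_mhz` and the boost clocks passed to it are illustrative assumptions, and real XFR behaviour additionally depends on cooling and power delivery.

```python
def xfr_ceiling_mhz(boost_mhz: int, model: str, threadripper: bool = False) -> int:
    """Hypothetical helper: highest clock XFR may add on top of boost, per the note.

    - 'X' models ("Full XFR"): +100 MHz
    - the 1500X and the Threadripper line: +200 MHz
    - non-X models: +50 MHz
    """
    name = model.strip().upper()
    if name.endswith("X"):
        bonus = 200 if (threadripper or name == "1500X") else 100
    else:
        bonus = 50
    return boost_mhz + bonus
```

For example, `xfr_ceiling_mhz(4000, "1800X")` applies the +100 MHz "Full XFR" offset, while a non-X part only receives +50 MHz.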

| + | |||

| + | === Identification === | ||

| + | [[File:amd ryzen black bg logo.png|thumb|right|Ryzen brand logo]] | ||

| + | |||

| + | {{chip identification | ||

| + | | parts = 7 | ||

| + | | ex 1 = Ryzen | ||

| + | | ex 2 = 7 | ||

| + | | ex 3 = | ||

| + | | ex 4 = 1 | ||

| + | | ex 5 = 7 | ||

| + | | ex 6 = 00 | ||

| + | | ex 7 = X | ||

| + | | ex 2 1 = Ryzen | ||

| + | | ex 2 2 = 5 | ||

| + | | ex 2 3 = | ||

| + | | ex 2 4 = 3 | ||

| + | | ex 2 5 = 5 | ||

| + | | ex 2 6 = 50 | ||

| + | | ex 2 7 = H | ||

| + | | ex 3 1 = Ryzen | ||

| + | | ex 3 2 = 3 | ||

| + | | ex 3 3 = | ||

| + | | ex 3 4 = 2 | ||

| + | | ex 3 5 = 2 | ||

| + | | ex 3 6 = 00 | ||

| + | | ex 3 7 = U | ||

| + | | desc 1 = '''Brand Name'''<br><table><tr><td style="width: 50px;">'''{{amd|Ryzen}}'''</td><td></td></tr></table> | ||

| + | | desc 2 = '''Market segment'''<br><table><tr><td style="width: 50px;">'''3'''</td><td>Low-end performance</td></tr><tr><td>'''5'''</td><td>Mid-range performance</td></tr><tr><td>'''7'''</td><td>Enthusiast / High-end performance</td></tr><tr><td>'''9'''</td><td>High-end performance / Workstation</td></tr><tr><td>'''Threadripper'''</td><td>High-end performance / Workstation</td></tr></table> | ||

| + | | desc 3 = | ||

| + | | desc 4 = '''Generation'''<br><table><tr><td style="width: 50px;">'''1'''</td><td>First generation Zen (2017)</td></tr><tr><td style="width: 50px;">'''2'''</td><td>First generation Zen for Mobile and Desktop APUs (2017); First generation Zen with enhanced node (Zen+)(2018)</td></tr><tr><td style="width: 50px;">'''3'''</td><td>First generation Zen with enhanced node (Zen+) for Mobile and Desktop APUs (2019); Second generation Zen (Zen 2)(2019)</td></tr><tr><td style="width: 50px;">'''4'''</td><td>Second generation Zen (Zen 2) for Mobile and Desktop APUs (2020)</td></tr><tr><td style="width: 50px;">'''5'''</td><td>Third generation Zen (Zen 3)(2020)</td></tr></table> | ||

| + | | desc 5 = '''Performance Level'''<br><table><tr><td style="width: 50px;">'''9'''</td><td>Extreme (Ryzen Threadripper & Ryzen 9)</td></tr><tr><td>'''8'''</td><td>Highest (Ryzen 7)</td></tr><tr><td>'''6-7'''</td><td>High (Ryzen 5 & 7)</td></tr><tr><td>'''4-5'''</td><td>Mid (Ryzen 5)</td></tr><tr><td>'''1-3'''</td><td>Low (Ryzen 3)</td></tr></table> | ||

| + | | desc 6 = '''Model Number'''<br>Speed bump and/or differentiator for high core count chips (8 cores+). | ||

| + | | desc 7 = '''Power Segment'''<br><table><tr><td style="width: 50px;">'''(none)'''</td><td>Standard Desktop</td></tr><tr><td>'''U'''</td><td>Standard Mobile</td></tr><tr><td>'''X'''</td><td>High Performance, with XFR</td></tr><tr><td style="width: 50px;">'''WX'''</td><td>High Core Count Workstation</td></tr><tr><td>'''G'''</td><td>Desktop + [[IGP]]</td></tr><tr><td>'''E'''</td><td>Low-power Desktop</td></tr><tr><td>'''GE'''</td><td>Low-power Desktop + [[IGP]]</td></tr><tr><td>'''M'''</td><td>Low-power Mobile</td></tr><tr><td>'''H'''</td><td>High-performance Mobile</td></tr><tr><td>'''S'''</td><td>Slim Mobile</td></tr><tr><td>'''HS'''</td><td>High-Performance Slim Mobile</td></tr><tr><td>'''XT'''</td><td>Extreme</td></tr></table> | ||

| + | }} | ||
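The identification scheme above splits a model string into brand, market segment, generation, performance level, model number and power-segment suffix. A minimal Python parser for that scheme could look like the sketch below; the regular expression, the `RyzenModel` type and `parse_ryzen_model` are hypothetical names, and only the suffixes listed in the table are accepted.

```python
from __future__ import annotations

import re
from dataclasses import dataclass

# Power-segment suffixes, copied from the "Power Segment" column above.
POWER_SEGMENTS = {
    "": "Standard Desktop", "U": "Standard Mobile", "X": "High Performance, with XFR",
    "WX": "High Core Count Workstation", "G": "Desktop + IGP", "E": "Low-power Desktop",
    "GE": "Low-power Desktop + IGP", "M": "Low-power Mobile", "H": "High-performance Mobile",
    "S": "Slim Mobile", "HS": "High-Performance Slim Mobile", "XT": "Extreme",
}

# "Ryzen <segment> <generation><performance level><model number><suffix>"
_PATTERN = re.compile(r"^Ryzen (Threadripper|[3579]) (\d)(\d)(\d{2})([A-Z]*)$")


@dataclass(frozen=True)
class RyzenModel:
    segment: str            # "3", "5", "7", "9" or "Threadripper"
    generation: int         # first digit of the model number
    performance_level: int  # second digit
    model_number: str       # last two digits, kept as text ("00", "50", ...)
    power_segment: str      # description of the suffix


def parse_ryzen_model(name: str) -> RyzenModel:
    match = _PATTERN.match(name.strip())
    if match is None:
        raise ValueError(f"unrecognized Ryzen model string: {name!r}")
    segment, generation, level, model, suffix = match.groups()
    if suffix not in POWER_SEGMENTS:
        raise ValueError(f"unknown power-segment suffix: {suffix!r}")
    return RyzenModel(segment, int(generation), int(level), model, POWER_SEGMENTS[suffix])
```

Applied to the three examples in the table, `parse_ryzen_model("Ryzen 5 3550H")` yields generation 3, performance level 5, model number "50" and the "High-performance Mobile" segment.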

== Release Dates == | == Release Dates == | ||

| − | The first set of processors, as part of the {{amd|Ryzen}} family | + | [[File:ryzen threadripper.png|right|thumb|First 16-core HEDT market CPU]] |

| + | |||

| + | The first set of processors, part of the {{amd|Ryzen 7}} family, was introduced at an [[AMD]] event on February 22, [[2017]], ahead of the ''Game Developers Conference'' (GDC). However, the initial models did not ship until March 2. {{amd|Ryzen 5}} [[hexa-core]] and [[quad-core]] variants were released on April 11, [[2017]]. Server processors were scheduled for release by the end of Q2 2017. In October [[2017]], [[AMD]] launched mobile Zen-based processors featuring {{\\|Vega}} GPUs. | ||

| + | |||

| + | [[File:amd zen ryzen rollout.png|600px]] | ||

| − | + | {{clear}} | |

== Compatibility == | == Compatibility == | ||

| − | [[Linux]] added initial support for Zen starting with Linux Kernel 4. | + | [[Linux]] added initial support for [[Zen]] starting with Linux Kernel 4.10. [[Microsoft]] officially supports [[Zen]] only on Windows 10. |

{| class="wikitable" | {| class="wikitable" | ||

! Vendor !! OS !! Version !! Notes | ! Vendor !! OS !! Version !! Notes | ||

|- | |- | ||

| − | | rowspan="3" | Microsoft || rowspan="3" | Windows || style="background-color: #ffdad6;" | Windows 7 || No Support | + | | rowspan="3" | [[Microsoft]] || rowspan="3" | Windows || style="background-color: #ffdad6;" | Windows 7 || No Support |

|- | |- | ||

| style="background-color: #ffdad6;" | Windows 8 || No Support | | style="background-color: #ffdad6;" | Windows 8 || No Support | ||

| Line 126: | Line 345: | ||

| style="background-color: #d6ffd8;" | Windows 10 || Support | | style="background-color: #d6ffd8;" | Windows 10 || Support | ||

|- | |- | ||

| − | | Linux || Linux || style="background-color: #d6ffd8;" | Kernel 4. | + | | rowspan="2" | Linux || rowspan="2" | Linux || style="background-color: #d6ffd8;" | Kernel 4.10 || Initial Support |

| + | |- | ||

| + | | style="background-color: #d6ffd8;" | Kernel 4.15 || Full Support | ||

|} | |} | ||

== Compiler support == | == Compiler support == | ||

| + | With the release of {{amd|Ryzen}}, [[AMD]] introduced its own compiler, the ''AMD Optimizing C/C++ Compiler'' (AOCC). <br>AOCC is based on ''LLVM'' and is modified to generate optimized x86 code for the [[Zen]] microarchitecture. | ||

| + | |||

{| class="wikitable" | {| class="wikitable" | ||

|- | |- | ||

! Compiler !! Arch-Specific || Arch-Favorable | ! Compiler !! Arch-Specific || Arch-Favorable | ||

| + | |- | ||

| + | | [[AOCC]] || <code>-march=znver1</code> || <code>-mtune=znver1</code> | ||

|- | |- | ||

| [[GCC]] || <code>-march=znver1</code> || <code>-mtune=znver1</code> | | [[GCC]] || <code>-march=znver1</code> || <code>-mtune=znver1</code> | ||

| Line 139: | Line 364: | ||

|- | |- | ||

| [[Visual Studio]] || <code>/arch:AVX2</code> || ? | | [[Visual Studio]] || <code>/arch:AVX2</code> || ? | ||

| + | |} | ||

| + | |||

| + | === CPUID === | ||

| + | {{see also|amd/cpuid|l1=AMD CPUID}} | ||

| + | {| class="wikitable tc1 tc2 tc3 tc4" | ||

| + | ! Core !! Extended<br>Family !! Family !! Extended<br>Model !! Model | ||

| + | |- | ||

| + | | rowspan="2" | {{amd|Naples|l=core}}, {{amd|Whitehaven|l=core}}, {{amd|Summit Ridge|l=core}} | ||

| + | || 0x8 || 0xF || 0x0 || 0x1 | ||

| + | |- | ||

| + | | colspan="4" | Family 23 Model 1 | ||

| + | |- | ||

| + | | rowspan="2" | {{amd|Raven Ridge|l=core}} || 0x8 || 0xF || 0x1 || 0x1 | ||

| + | |- | ||

| + | | colspan="4" | Family 23 Model 17 | ||

|} | |} | ||
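The Family/Model values in the table come from the EAX register returned by CPUID leaf 1 (AMD documents it as Fn0000_0001_EAX). When the base family is 0xF, AMD adds the extended family to it and prepends the extended model to the base model, which is how 0x8 + 0xF gives family 23 (17h) and extended model 0x1 with model 0x1 gives model 17. A minimal Python decoder follows; the function name is hypothetical, and the example EAX values are constructed from the table's fields with an arbitrary stepping nibble rather than read from specific parts.

```python
from __future__ import annotations


def decode_amd_signature(eax: int) -> tuple[int, int, int]:
    """Hypothetical helper: return (family, model, stepping) from CPUID leaf 1 EAX."""
    stepping = eax & 0xF
    base_model = (eax >> 4) & 0xF
    base_family = (eax >> 8) & 0xF
    ext_model = (eax >> 16) & 0xF
    ext_family = (eax >> 20) & 0xFF
    if base_family == 0xF:
        # Extended fields only apply when the base family is 0xF (AMD rule).
        family = base_family + ext_family
        model = (ext_model << 4) | base_model
    else:
        family, model = base_family, base_model
    return family, model, stepping


def is_zen_family(eax: int) -> bool:
    """Family 17h (23) is the Zen family described in this article."""
    return decode_amd_signature(eax)[0] == 0x17
```

With `0x00800F11` (extended family 0x8, family 0xF, extended model 0x0, model 0x1) the decoder reports family 23, model 1; with `0x00810F10` it reports family 23, model 17, matching the two rows of the table.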

== Architecture == | == Architecture == | ||

| − | AMD Zen is an entirely new design from the ground up which introduces considerable amount of improvements and design changes over {{\\|Excavator}}. Zen-based microprocessors | + | [[AMD]] [[Zen]] is an entirely new, ground-up design that introduces a considerable number of improvements and design changes over {{\\|Excavator}}. <br>Mainstream [[Zen]]-based microprocessors use [[AMD]]'s {{amd|Socket AM4}} unified platform along with the {{amd|Promontory|l=chipset}} chipset. |

| + | {| border="0" cellpadding="5" width="100%" | ||

| + | |- | ||

| + | |width="50%" valign="top" align="left"| | ||

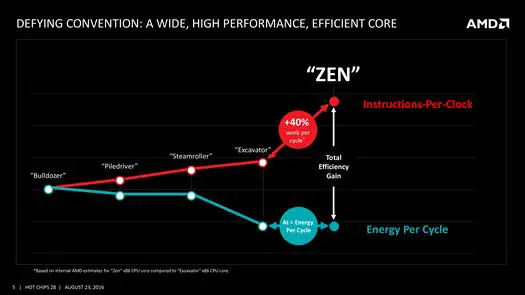

=== Key changes from {{\\|Excavator}} === | === Key changes from {{\\|Excavator}} === | ||

| − | * Zen was designed to succeed | + | * Zen was designed to succeed ''both'' {{\\|Excavator}} (High-performance) and <br>{{\\|Puma}} (Low-power) covering the entire range in one architecture |

** Cover the entire spectrum from fanless notebooks to high-performance desktops | ** Cover the entire spectrum from fanless notebooks to high-performance desktops | ||

** More aggressive clock gating with multi-level regions | ** More aggressive clock gating with multi-level regions | ||

** Power focus from design, employs low-power design methodologies | ** Power focus from design, employs low-power design methodologies | ||

| + | *** >15% switching capacitance (C<sub>AC</sub>) improvement | ||

* Utilizes [[14 nm process]] (from [[28 nm]]) | * Utilizes [[14 nm process]] (from [[28 nm]]) | ||

| − | * | + | * 52% higher single-thread IPC per core (AMD claim) |

| + | ** From {{\\|Piledriver}} to Zen | ||

| + | ** Based on the industry-standardized SPECint_base2006 score compiled with GCC 4.6 -O2 at a fixed 3.4 GHz | ||

| + | * Up to 3.7× performance/watt improvement | ||

* Return to conventional high-performance x86 design | * Return to conventional high-performance x86 design | ||

** Traditional design for cores without shared blocks (e.g. shared SIMD units) | ** Traditional design for cores without shared blocks (e.g. shared SIMD units) | ||

** Larger, beefier core design | ** Larger, beefier core design | ||

| − | * Core engine | + | * '''Cache system''' |

| − | ** Simultaneous Multithreading (SMT) support, 2 threads/core (see [[# | + | ** L1 |

| + | *** 64 KiB (double from previous capacity of 32 KiB) | ||

| + | *** Write-back L1 cache eviction policy (From write-through) | ||

| + | *** 2× the bandwidth | ||

| + | ** L2 | ||

| + | *** 2× the bandwidth | ||

| + | *** Faster L2 cache | ||

| + | ** Faster L3 cache | ||

| + | ** Better L1$ and L2$ data prefetcher | ||

| + | ** 5× L3 bandwidth | ||

| + | ** Move elimination block added | ||

| + | ** Page Table Entry (PTE) Coalescing | ||

| + | |width="50%" valign="top" align="left"| | ||

| + | * '''Core engine''' | ||

| + | ** Simultaneous Multithreading (SMT) support, 2 threads/core <br>(see [[#Simultaneous_MultiThreading (SMT)|§ SMT]] for details) | ||

** Branch Predictor | ** Branch Predictor | ||

*** Improved branch prediction (fewer mispredictions) | *** Improved branch prediction (fewer mispredictions) | ||

| Line 161: | Line 422: | ||

**** Lower miss latency penalty | **** Lower miss latency penalty | ||

*** BP is now decoupled from fetch stage | *** BP is now decoupled from fetch stage | ||

| − | ** Large | + | ** Large μop cache (2K μops) |

** Wider μop dispatch (6, up from 4) | ** Wider μop dispatch (6, up from 4) | ||

** Larger instruction scheduler | ** Larger instruction scheduler | ||

| − | *** Integer (84, up | + | *** Integer (84, up from 48) |

| − | *** Floating Point (96, up | + | *** Floating Point (96, up from 60) |

** Higher retire throughput (8, up from 4) | ** Higher retire throughput (8, up from 4) | ||

** Larger Retire Queue (192, up from 128) | ** Larger Retire Queue (192, up from 128) | ||

| − | *** | + | *** Statically partitioned between the two threads |

** Larger Load Queue (72, up from 44) | ** Larger Load Queue (72, up from 44) | ||

** Larger Store Queue (44, up from 32) | ** Larger Store Queue (44, up from 32) | ||

| − | *** | + | *** Statically partitioned between the two threads |

** Quad-issue FPU (up from 3-issue) | ** Quad-issue FPU (up from 3-issue) | ||

** Faster Load to FPU (down to 7, from 9 cycles) | ** Faster Load to FPU (down to 7, from 9 cycles) | ||

==== New instructions ==== | ==== New instructions ==== | ||

| − | Zen introduced a number of new [[x86]] instructions: | + | [[Zen]] introduced a number of new [[x86]] instructions: |

* <code>{{x86|ADX}}</code> - Multi-Precision Add-Carry Instruction extension | * <code>{{x86|ADX}}</code> - Multi-Precision Add-Carry Instruction extension | ||

* <code>{{x86|RDSEED}}</code> - Hardware-based RNG | * <code>{{x86|RDSEED}}</code> - Hardware-based RNG | ||

| Line 197: | Line 444: | ||

* <code>{{x86|CLFLUSHOPT}}</code> - Flush Cache Line | * <code>{{x86|CLFLUSHOPT}}</code> - Flush Cache Line | ||

* <code>{{x86|XSAVE}}</code> - Privileged Save/Restore | * <code>{{x86|XSAVE}}</code> - Privileged Save/Restore | ||

| − | * <code>{{x86|CLZERO}}</code> - Zero-out Cache Line (AMD exclusive) | + | * <code>{{x86|CLZERO}}</code> - Zero-out Cache Line ([[AMD]] exclusive) |

| − | + | |} | |

| − | While not new, Zen also supports {{x86|AVX}}, {{x86|AVX2}}, {{x86|FMA3}}, {{x86|BMI1}}, {{x86|BMI2}}, {{x86|AES}}, {{x86|RdRand}}, {{x86|SMEP}}. Note that with Zen, AMD dropped support for | + | While not new, [[Zen]] also supports {{x86|AVX}}, {{x86|AVX2}}, {{x86|FMA3}}, {{x86|BMI1}}, {{x86|BMI2}}, {{x86|AES}}, {{x86|RdRand}}, {{x86|SMEP}}. Note that with [[Zen]], [[AMD]] dropped support for {{x86|XOP}}, {{x86|TBM}} and {{x86|LWP}}. |

| − | + | '''Note:''' WikiChip's testing shows that {{x86|FMA4}} still works even though it is not officially supported and is not reported by {{amd|CPUID}}.<br> | |

| − | + | This has also been confirmed by [http://agner.org/optimize/blog/read.php?i=838 ''Agner Fog'']. These tests were not exhaustive, so do not rely on FMA4 in production code. | |
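On Linux, the feature flags the kernel detected are listed in the `flags` line of `/proc/cpuinfo`, which gives a quick way to check the instruction-set notes above: the Zen additions should be present, while XOP, TBM and LWP (dropped) and FMA4 (not reported by CPUID) should be absent. The sketch below assumes the Linux flag spellings `adx`, `rdseed`, `clflushopt`, `xsave`, `clzero`, `xop`, `tbm`, `lwp` and `fma4`; the helper names are hypothetical, and a missing flag only means the kernel did not report it.

```python
from __future__ import annotations

# Subset of the new instructions listed above, using Linux /proc/cpuinfo spellings.
NEW_IN_ZEN = ("adx", "rdseed", "clflushopt", "xsave", "clzero")
# Dropped with Zen (XOP, TBM, LWP) or not reported by CPUID (FMA4).
NOT_REPORTED_ON_ZEN = ("xop", "tbm", "lwp", "fma4")


def parse_flags(cpuinfo_text: str) -> set[str]:
    """Return the flag set from the first 'flags' line of /proc/cpuinfo-style text."""
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "flags":
            return set(value.split())
    return set()


def zen_feature_report(flags: set[str]) -> dict[str, list[str]]:
    """Compare a flag set against the expectations described in the text."""
    return {
        "new_present": [f for f in NEW_IN_ZEN if f in flags],
        "new_missing": [f for f in NEW_IN_ZEN if f not in flags],
        "unexpected": [f for f in NOT_REPORTED_ON_ZEN if f in flags],
    }
```

On a real system this would be called as `zen_feature_report(parse_flags(open("/proc/cpuinfo").read()))`; the report cannot detect the unofficial FMA4 support described in the note, since that only shows up by executing the instructions.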

| + | ---- | ||

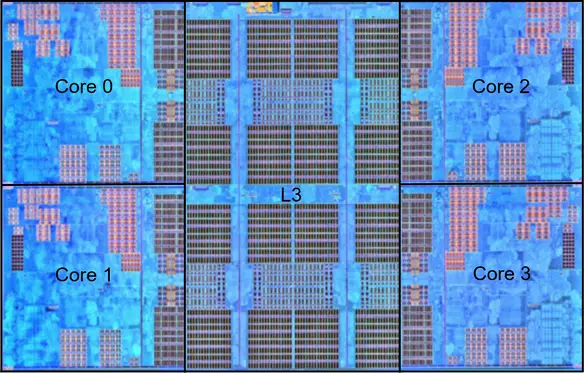

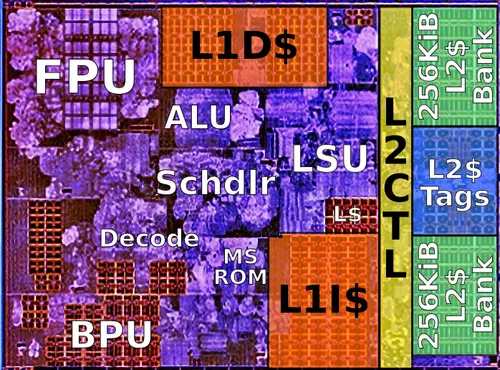

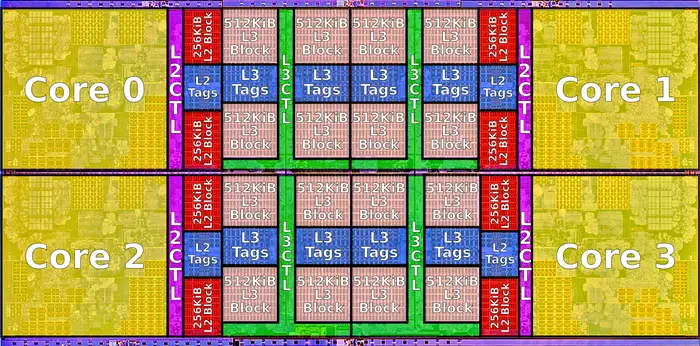

=== Memory Hierarchy === | === Memory Hierarchy === | ||

| − | * Cache | + | {| border="0" cellpadding="5" width="100%" |

| − | ** L1I Cache: | + | |- |

| + | |width="40%" valign="top" align="left"| | ||

| + | * '''Cache''' | ||

| + | ** '''L0 µOP cache:''' | ||

| + | *** 2,048 µOPs, 8-way set associative | ||

| + | **** 32-sets, 8-µOP line size | ||

| + | *** Parity protected | ||

| + | ** '''L1I Cache:''' | ||

*** 64 KiB 4-way set associative | *** 64 KiB 4-way set associative | ||

| − | **** | + | **** 256-sets, 64 B line size |

| − | **** | + | **** Shared by the two threads, per core |

| − | ** L1D Cache: | + | *** Parity protected |

| + | ** '''L1D Cache:''' | ||

*** 32 KiB 8-way set associative | *** 32 KiB 8-way set associative | ||

| − | **** | + | **** 64-sets, 64 B line size |

| − | **** | + | **** Write-back policy |

*** 4-5 cycles latency for Int | *** 4-5 cycles latency for Int | ||

*** 7-8 cycles latency for FP | *** 7-8 cycles latency for FP | ||

| − | ** L2 Cache: | + | *** SEC-DED ECC |

| + | ** '''L2 Cache:''' | ||

*** 512 KiB 8-way set associative | *** 512 KiB 8-way set associative | ||

| − | *** | + | *** 1,024-sets, 64 B line size |

*** Write-back policy | *** Write-back policy | ||

| − | *** | + | *** Inclusive of L1 |

| − | ** System DRAM: | + | *** Latency: |

| − | *** 2 | + | **** 17 cycles latency ({{amd|Summit Ridge|l=core}} only) |

| + | **** 12 cycles latency (all other cores) | ||

| + | *** DEC-TED ECC | ||

| + | |width="60%" valign="top" align="left"| | ||

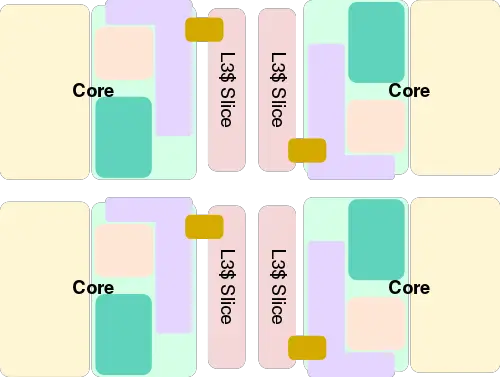

| + | ** '''L3 Cache:''' | ||

| + | *** Victim cache | ||

| + | *** ''Summit Ridge, Naples'': 8 MiB/CCX, shared by all cores in the CCX. | ||

| + | *** ''Raven Ridge'': 4 MiB/CCX, shared by all cores in the CCX. | ||

| + | *** 16-way set associative | ||

| + | **** 8,192-sets, 64 B line size | ||

| + | *** 40 cycles latency | ||

| + | *** DEC-TED ECC | ||

| + | ** '''System DRAM:''' | ||

| + | *** 2 channels per die | ||

| + | *** ''Summit Ridge'': up to PC4-21300U (DDR4-2666 UDIMM), ECC supported | ||

| + | *** ''Raven Ridge'': up to PC4-23466U (DDR4-2933 UDIMM), ECC supported by PRO models | ||

| + | *** ''Naples'': up to PC4-21300L (DDR4-2666 RDIMM/LRDIMM), ECC supported | ||

| + | *** ECC: x4 DRAM device failure correction (''Chipkill''), x8 SEC-DED ECC, Patrol <br>and Demand scrubbing, Data poisoning | ||

| − | Zen TLB consists of dedicated level one TLB for instruction cache and another one for data cache. | + | [[Zen]] has a dedicated level-one TLB for the instruction cache and another for the data cache. |

| − | + | * '''TLBs''' | |

| − | * TLBs | + | ** '''ITLB''' |

| − | ** ITLB | ||

*** 8 entry L0 TLB, all page sizes | *** 8 entry L0 TLB, all page sizes | ||

*** 64 entry L1 TLB, all page sizes | *** 64 entry L1 TLB, all page sizes | ||

*** 512 entry L2 TLB, no 1G pages | *** 512 entry L2 TLB, no 1G pages | ||

| − | ** DTLB | + | *** Parity protected |

| − | *** 64 entry, all page sizes | + | ** '''DTLB''' |

| − | + | *** 64 entry L1 TLB, all page sizes | |

| − | *** 1, | + | *** 1,536-entry L2 TLB, no 1G pages |

| − | *** | + | *** Parity protected |

| + | |} | ||
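The set counts in the hierarchy above follow from sets = capacity / (ways × line size), and the same style of arithmetic gives TLB reach and peak DRAM bandwidth. The Python sketch below reproduces those numbers; the helper names are hypothetical, the TLB reach assumes every entry maps a page of the same size, and the bandwidth figure is a theoretical peak for a 64-bit (8-byte) channel that ignores ECC bits and real-world efficiency. Nominal DDR4-2666 actually runs at about 2666.67 MT/s, which is why the module label reads PC4-21300.

```python
KIB = 1024
MIB = 1024 * KIB

# (capacity, ways, line size) taken from the hierarchy above; the op cache is
# counted in µops (8 µops per line) rather than bytes.
ZEN_CACHES = {
    "op cache (uops)": (2048, 8, 8),
    "L1I": (64 * KIB, 4, 64),
    "L1D": (32 * KIB, 8, 64),
    "L2": (512 * KIB, 8, 64),
    "L3 per CCX (Summit Ridge)": (8 * MIB, 16, 64),
}


def cache_sets(capacity: int, ways: int, line_size: int = 64) -> int:
    """Number of sets = capacity / (ways * line size); units must match."""
    if capacity % (ways * line_size):
        raise ValueError("capacity must be a multiple of ways * line_size")
    return capacity // (ways * line_size)


def tlb_reach(entries: int, page_size: int) -> int:
    """Memory covered by a TLB, assuming every entry maps the same page size."""
    return entries * page_size


def peak_dram_mb_per_s(mega_transfers_per_s: int, channels: int = 1, bus_bytes: int = 8) -> int:
    """Theoretical peak in decimal MB/s: MT/s * bytes per transfer * channels."""
    return mega_transfers_per_s * bus_bytes * channels


if __name__ == "__main__":
    for name, (capacity, ways, line) in ZEN_CACHES.items():
        print(f"{name}: {cache_sets(capacity, ways, line)} sets")
    print("L2 DTLB reach with 4 KiB pages (MiB):", tlb_reach(1536, 4 * KIB) // MIB)
    print("Dual-channel DDR4-2666 peak (MB/s):", peak_dram_mb_per_s(2666, channels=2))
```

Because each die has two DRAM channels, the per-die peak is twice the per-channel figure; the 1,536-entry L2 DTLB covers 6 MiB with 4 KiB pages but 3 GiB with 2 MiB pages.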

| + | |||

| + | == All Zen Chips == | ||

| + | |||

| + | <!-- NOTE: | ||

| + | This table is generated automatically from the data in the actual articles. | ||

| + | If a microprocessor is missing from the list, an appropriate article for it needs to be | ||

| + | created and tagged accordingly. | ||

| + | Missing a chip? please dump its name here: http://en.wikichip.org/wiki/WikiChip:wanted_chips | ||

| + | --> | ||

| + | {{comp table start}} | ||

| + | <table class="comptable sortable tc13 tc14 tc15 tc16 tc17 tc18 tc19"> | ||

| + | <tr class="comptable-header"><th> </th><th colspan="20">List of all Zen-based Processors</th></tr> | ||

| + | <tr class="comptable-header"><th> </th><th colspan="14">Processor</th><th colspan="6">Features</th></tr> | ||

| + | {{comp table header 1|cols=Family, Core, C, T, Freq, Turbo, TDP, L3$, L2$, L1$, Max Mem, Process, Launched, Price, SMT, AMD-V, XFR, SEV, SME, TSME}} | ||

| + | <tr class="comptable-header comptable-header-sep"><th> </th><th colspan="25">[[Uniprocessors]]</th></tr> | ||

| + | {{#ask: [[Category:microprocessor models by amd]] [[instance of::microprocessor]] [[microarchitecture::Zen]] [[max cpu count::1]] | ||

| + | |?full page name | ||

| + | |?model number | ||

| + | |?microprocessor family | ||

| + | |?core name | ||

| + | |?core count | ||

| + | |?thread count | ||

| + | |?base frequency#GHz | ||

| + | |?turbo frequency (1 core)#GHz | ||

| + | |?tdp | ||

| + | |?l3$ size | ||

| + | |?l2$ size | ||

| + | |?l1$ size#KiB | ||

| + | |?max memory#GiB | ||

| + | |?process | ||

| + | |?first launched | ||

| + | |?release price | ||

| + | |?has simultaneous multithreading | ||

| + | |?has amd amd-vi technology | ||

| + | |?has amd extended frequency range | ||

| + | |?has amd secure encrypted virtualization technology | ||

| + | |?has amd secure memory encryption technology | ||

| + | |?has amd transparent secure memory encryption technology | ||

| + | |format=template | ||

| + | |template=proc table 3 | ||

| + | |userparam=22:17 | ||

| + | |mainlabel=- | ||

| + | |valuesep=, | ||

| + | |limit=100 | ||

| + | }} | ||

| + | <tr class="comptable-header comptable-header-sep"><th> </th><th colspan="25">[[Multiprocessors]] (dual-socket)</th></tr> | ||

| + | {{#ask: [[Category:microprocessor models by amd]] [[instance of::microprocessor]] [[microarchitecture::Zen]] [[max cpu count::>>1]] | ||

| + | |?full page name | ||

| + | |?model number | ||

| + | |?microprocessor family | ||

| + | |?core name | ||

| + | |?core count | ||

| + | |?thread count | ||

| + | |?base frequency#GHz | ||

| + | |?turbo frequency (1 core)#GHz | ||

| + | |?tdp | ||

| + | |?l3$ size | ||

| + | |?l2$ size | ||

| + | |?l1$ size#KiB | ||

| + | |?max memory#GiB | ||

| + | |?process | ||

| + | |?first launched | ||

| + | |?release price | ||

| + | |?has simultaneous multithreading | ||

| + | |?has amd amd-vi technology | ||

| + | |?has amd extended frequency range | ||

| + | |?has amd secure encrypted virtualization technology | ||

| + | |?has amd secure memory encryption technology | ||

| + | |?has amd transparent secure memory encryption technology | ||

| + | |format=template | ||

| + | |template=proc table 3 | ||

| + | |userparam=22:17 | ||

| + | |mainlabel=- | ||

| + | |valuesep=, | ||

| + | |limit=100 | ||

| + | }} | ||

| + | {{comp table count|ask=[[Category:microprocessor models by amd]] [[instance of::microprocessor]] [[microarchitecture::Zen]]}} | ||

| + | </table> | ||

| + | {{comp table end}} | ||

| + | |||

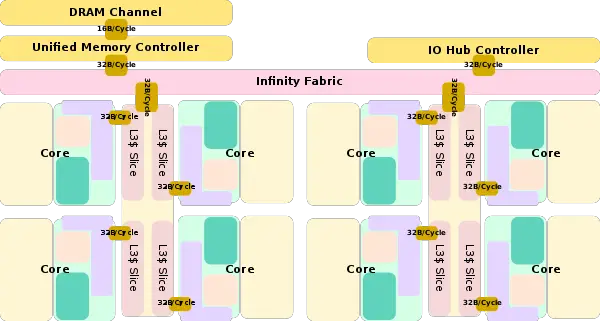

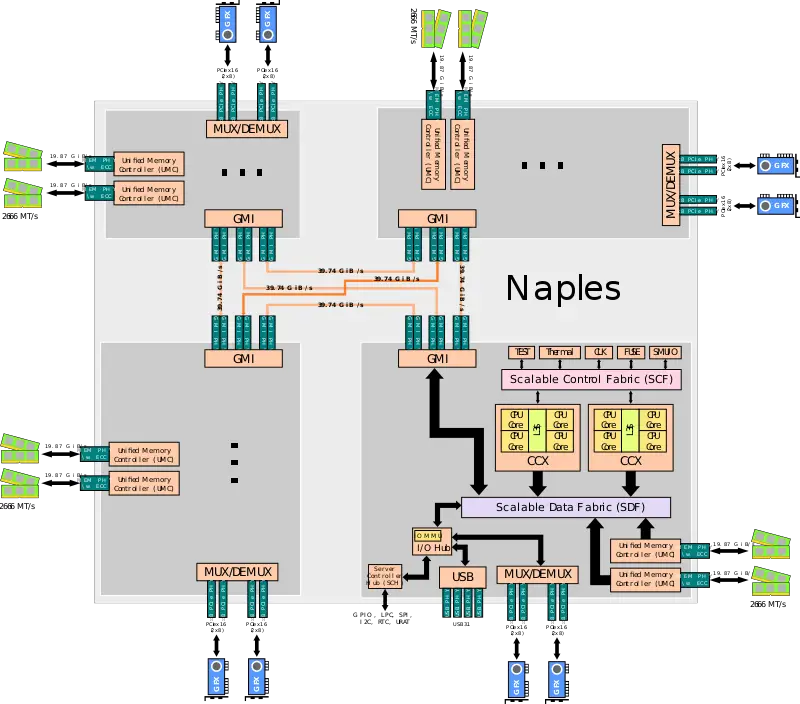

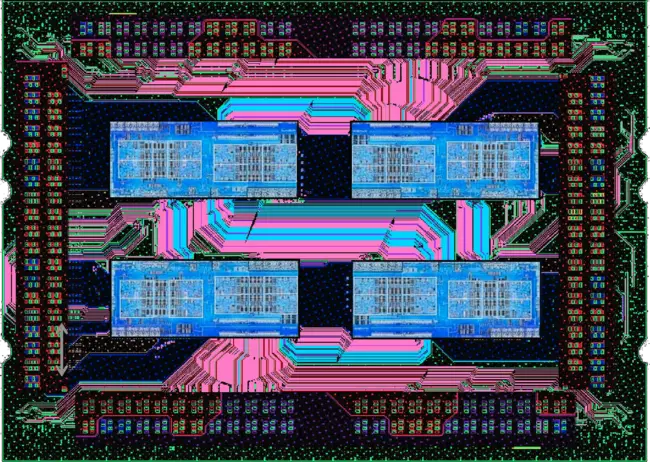

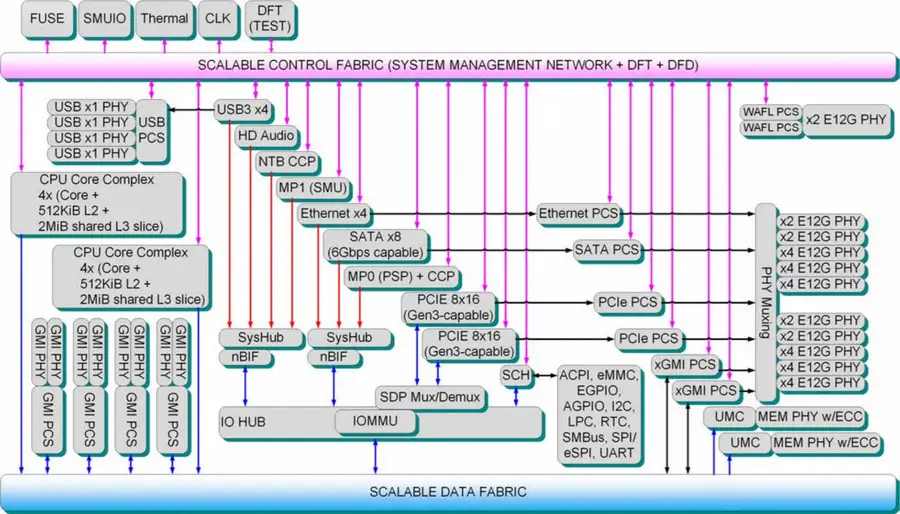

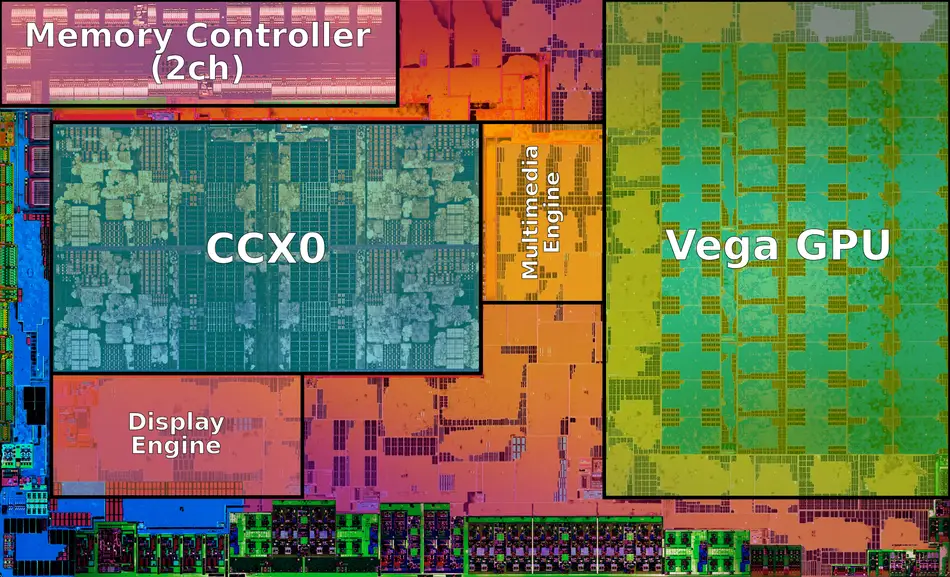

| + | == Block Diagram == | ||

| + | === Client Configuration === | ||

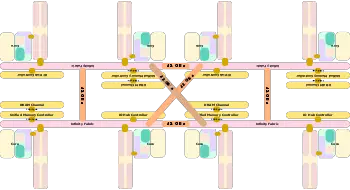

| + | ==== Entire SoC Overview ==== | ||

| + | [[File:zen soc block.svg|600px]] | ||

| + | |||

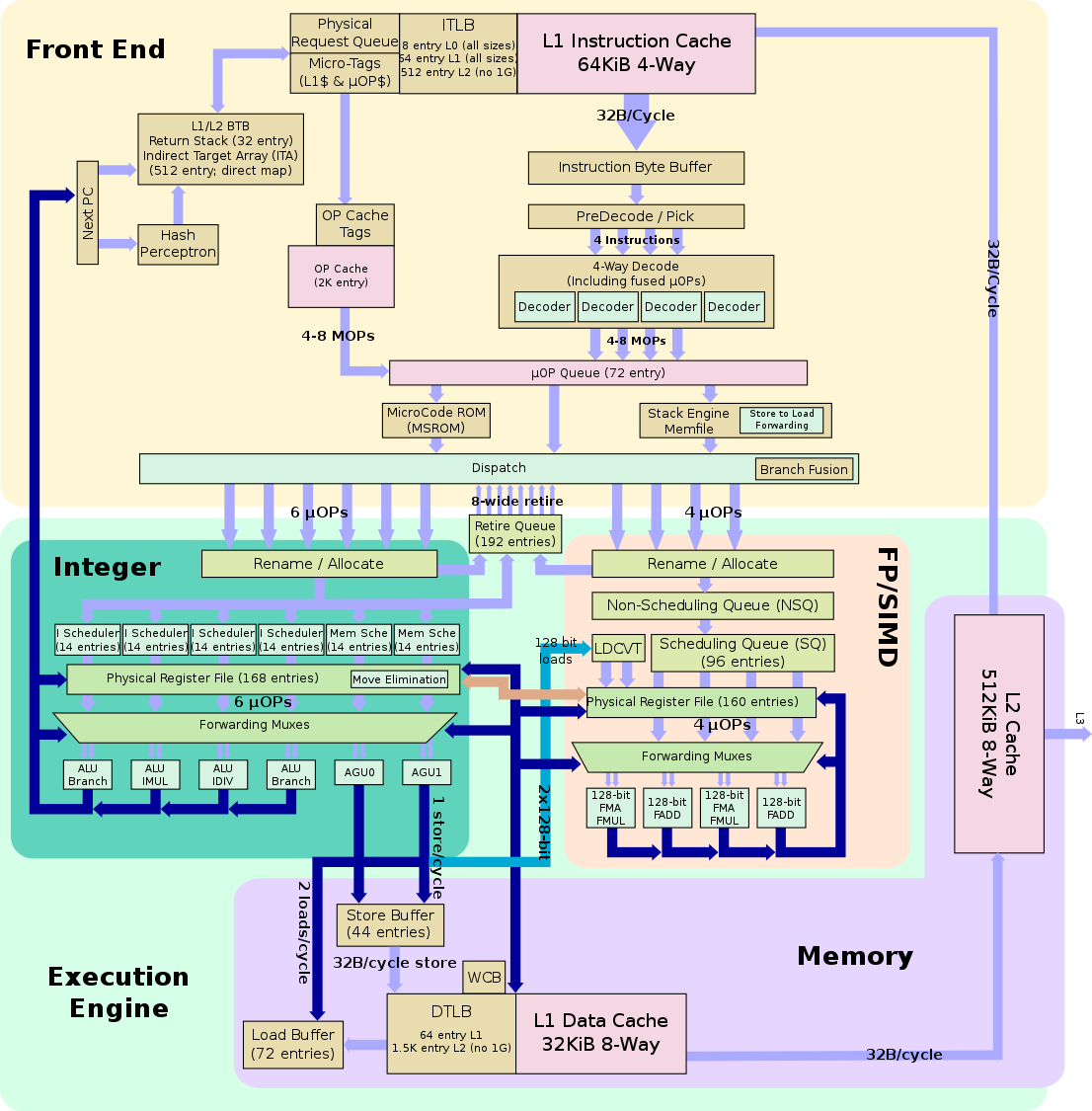

| + | ==== Individual Core ==== | ||

| + | [[File:zen block diagram.svg]] | ||

| + | |||

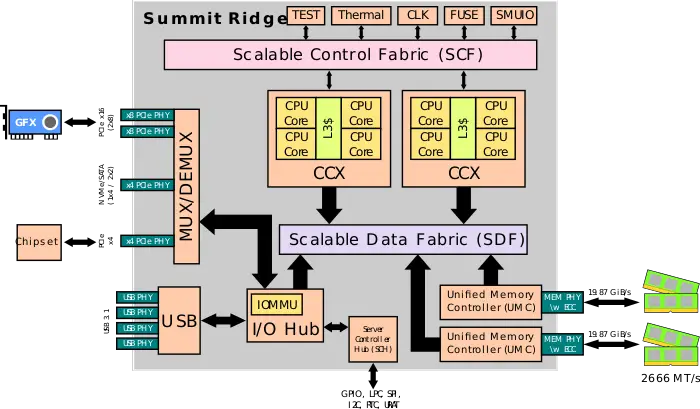

| + | === Single/Multi-chip Packages === | ||

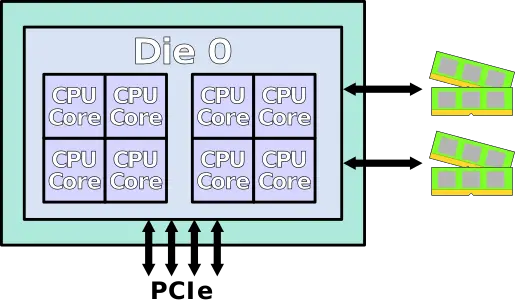

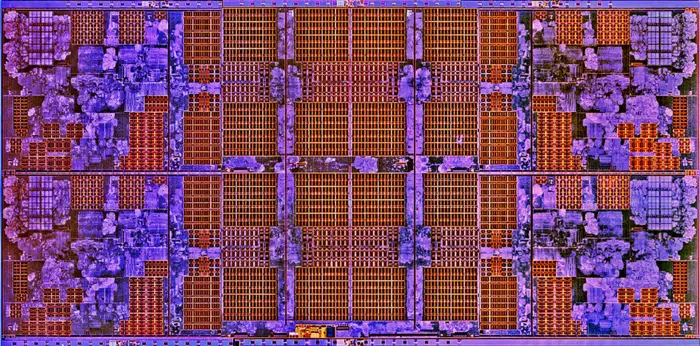

| + | ==== Single-die ==== | ||

| + | Single-die as used in {{amd|Summit Ridge|l=core}}: | ||

| + | |||

| + | :[[File:AMD Summit Ridge SoC.svg|700px]] | ||

| + | |||

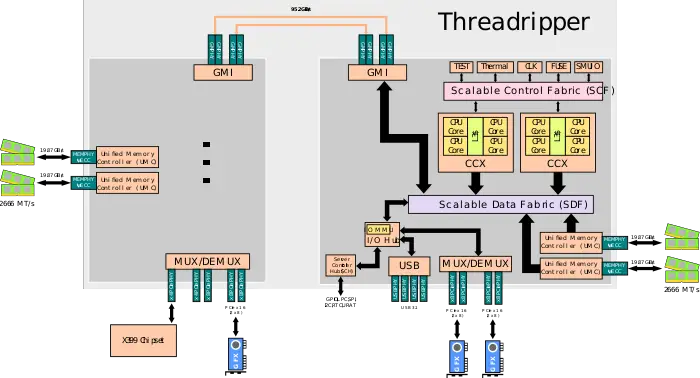

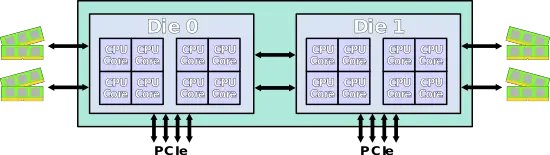

| + | ==== 2-die MCP ==== | ||

| + | 2-die MCP used for {{amd|Threadripper}}: | ||

| + | |||

| + | :[[File:AMD Threadripper SoC.svg|700px]] | ||

| + | |||

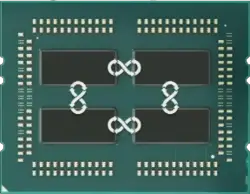

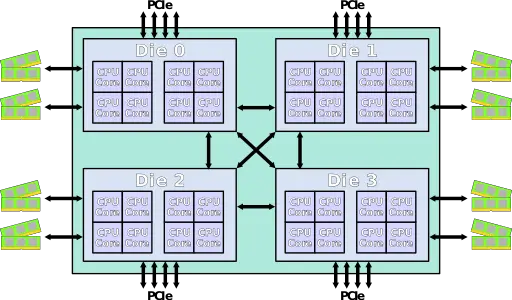

| + | ==== 4-die MCP ==== | ||

| + | 4-die MCP used for {{amd|EPYC}}: | ||

| + | |||

| + | :[[File:AMD Naples SoC.svg|800px]] | ||

| + | |||

| + | ==== 4-die CCX configs ==== | ||

| + | <div style="display: inline-block"> | ||

| + | <div style="float: left; margin: 15px;">'''32-core configuration:'''<br>[[File:zen soc block (32 cores).svg|350px]]</div> | ||

| + | <div style="float: left; margin: 15px;">'''24-core configuration:'''<br>[[File:zen soc block (24 cores).svg|350px]]</div> | ||

| + | <div style="float: left; margin: 15px;">'''16-core configuration:'''<br>[[File:zen soc block (16 cores).svg|350px]]</div> | ||

| + | <div style="float: left; margin: 15px;">'''8-core configuration:'''<br>[[File:zen soc block (8 cores).svg|350px]]</div> | ||

| + | </div> | ||

| + | |||

| + | == Core == | ||

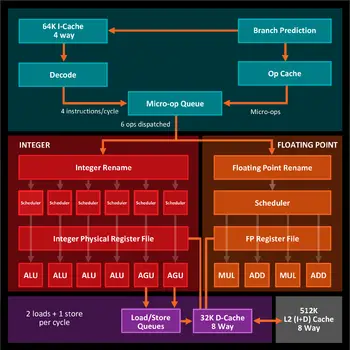

=== Pipeline === | === Pipeline === | ||

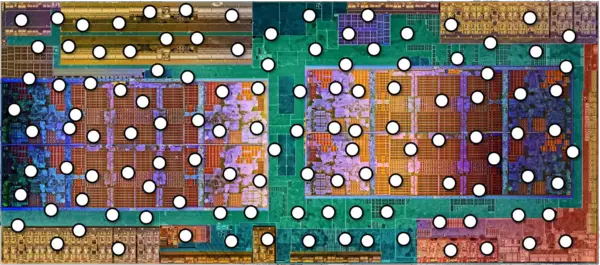

[[File:amd zen hc28 page 0004.jpg|525px|right]] | [[File:amd zen hc28 page 0004.jpg|525px|right]] | ||

| Line 249: | Line 629: | ||

==== Broad Overview ==== | ==== Broad Overview ==== | ||

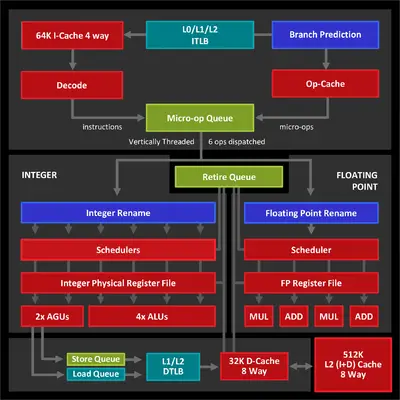

| − | At a very broad view, Zen shares | + | While Zen is an entirely new design, AMD kept its traditional design philosophy, which shows throughout its design choices, such as a split scheduler and separate FP and integer/memory execution units. At a very broad view, Zen shares many similarities with its predecessor but introduces new elements and major changes. Each core is composed of a front end ([[in-order]] area) that fetches instructions, decodes them, generates [[µOPs]] and [[fused µOPs]], and sends them to the Execution Engine ([[out-of-order]] section). Instructions are either fetched from the [[L1I$]] or, on subsequent fetches, come from the µOPs cache, bypassing the decoding stage altogether. Zen decodes 4 instructions/cycle into the µOP Queue. The µOP Queue dispatches separate µOPs to the Integer side and the FP side (dispatching to both at the same time when possible). |

[[File:amd zen hc28 overview.png|350px|left]] | [[File:amd zen hc28 overview.png|350px|left]] | ||

| Line 257: | Line 637: | ||

Unlike many of Intel's recent microarchitectures (such as {{intel|Skylake|l=arch}} and {{intel|Kaby Lake|l=arch}}), which make use of a unified scheduler, AMD continues to use a split pipeline design. µOPs are decoupled at the µOP Queue and are sent through two distinct pipelines to either the Integer side or the FP side. The two sections are completely separate, each featuring its own schedulers, queues, and execution units. The Integer side splits up the µOPs via a set of individual schedulers that feed the various ALU units. On the floating point side, a separate scheduler handles the 128-bit FP operations. Zen supports all modern {{x86|extensions|x86 extensions}} including {{x86|AVX}}/{{x86|AVX2}}, {{x86|BMI1}}/{{x86|BMI2}}, and {{x86|AES}}. Zen also supports {{x86|SHA}}, secure hash algorithm instructions that are currently only found in [[Intel]]'s ultra-low power microarchitectures (e.g. {{intel|Goldmont|l=arch}}) but not in their mainstream processors. | Unlike many of Intel's recent microarchitectures (such as {{intel|Skylake|l=arch}} and {{intel|Kaby Lake|l=arch}}), which make use of a unified scheduler, AMD continues to use a split pipeline design. µOPs are decoupled at the µOP Queue and are sent through two distinct pipelines to either the Integer side or the FP side. The two sections are completely separate, each featuring its own schedulers, queues, and execution units. The Integer side splits up the µOPs via a set of individual schedulers that feed the various ALU units. On the floating point side, a separate scheduler handles the 128-bit FP operations. Zen supports all modern {{x86|extensions|x86 extensions}} including {{x86|AVX}}/{{x86|AVX2}}, {{x86|BMI1}}/{{x86|BMI2}}, and {{x86|AES}}. Zen also supports {{x86|SHA}}, secure hash algorithm instructions that are currently only found in [[Intel]]'s ultra-low power microarchitectures (e.g. {{intel|Goldmont|l=arch}}) but not in their mainstream processors. | ||

| − | From the memory subsystem point of view, data is fed into the execution units from the [[L1D$]] via the load and store queue (both of which were almost doubled in capacity) via the two [[Address Generation Units]] (AGUs) at the rate of 2 loads and 1 store per cycle. Each core also has a 512 KiB level 2 cache. L2 feeds both the the level 1 data and level 1 instruction caches at 32B per cycle (32B can be | + | From the memory subsystem point of view, data is fed into the execution units from the [[L1D$]] through the load and store queues (both of which were almost doubled in capacity) via the two [[Address Generation Units]] (AGUs) at the rate of 2 loads and 1 store per cycle. Each core also has a 512 KiB level 2 cache. L2 feeds both the level 1 data and level 1 instruction caches at 32B per cycle over a bidirectional bus (32B can be sent in either direction each cycle). L2 is connected to the L3 cache, which is shared across all cores of a CCX. As with the L1 to L2 transfers, the L2 also transfers data to the L3 and vice versa at 32B per cycle (32B in either direction each cycle). |

{{clear}} | {{clear}} | ||

| Line 263: | Line 643: | ||

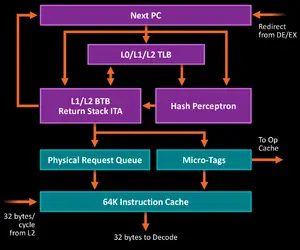

==== Front End ==== | ==== Front End ==== | ||

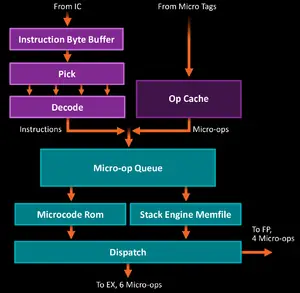

[[File:amd zen hc28 fetch.png|300px|right]] | [[File:amd zen hc28 fetch.png|300px|right]] | ||

| − | The Front End of the Zen core deals with the [[in-order]] operations such as [[instruction fetch]] and [[instruction decode]]. The instruction fetch is composed of two paths: a traditional decode path where instructions come from the [[instruction cache]] and a [[µOPs cache]] that are determined by the [[branch prediction]] (BP) unit. The instruction stream and the branch prediction unit track instructions in 64B windows. | + | The Front End of the Zen core deals with the [[in-order]] operations such as [[instruction fetch]] and [[instruction decode]]. The instruction fetch is composed of two paths, chosen by the [[branch prediction]] (BP) unit: a traditional decode path where instructions come from the [[instruction cache]], and a [[µOPs cache]] path. The instruction stream and the branch prediction unit track instructions in 64B windows. Zen is AMD's first design to feature a [[µOPs cache]], a unit that not only improves performance but also saves power (the µOPs cache was first introduced by [[Intel]] in their {{intel|Sandy Bridge|l=arch}} microarchitecture). |

The [[branch prediction]] unit is decoupled and can start working as soon as it receives a desired operation such as a redirect, ahead of traditional instruction fetches. AMD still uses a [[perceptron branch predictor|hashed perceptron system]] similar to the one used in {{\\|Jaguar}} and {{\\|Bobcat}}, albeit likely much more finely tuned. AMD stated that it is also larger than in previous architectures but did not disclose actual sizes. Once the BP detects an indirect target operation, the branch is moved to the Indirect Target Array (ITA), which is 512 entries deep. The BP includes a 32-entry return stack. | The [[branch prediction]] unit is decoupled and can start working as soon as it receives a desired operation such as a redirect, ahead of traditional instruction fetches. AMD still uses a [[perceptron branch predictor|hashed perceptron system]] similar to the one used in {{\\|Jaguar}} and {{\\|Bobcat}}, albeit likely much more finely tuned. AMD stated that it is also larger than in previous architectures but did not disclose actual sizes. Once the BP detects an indirect target operation, the branch is moved to the Indirect Target Array (ITA), which is 512 entries deep. The BP includes a 32-entry return stack. | ||
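AMD has not disclosed the details of its predictor, so the following is only a minimal sketch of a generic hashed-perceptron branch predictor in Python, not Zen's implementation. The table count, history segment length, table size, training threshold `theta`, weight limit `wmax`, and the hash constant are all illustrative assumptions. Each table is indexed by the branch PC hashed with one slice of global history; the selected weights are summed, and a non-negative sum predicts taken.

```python
class HashedPerceptron:
    """Toy hashed-perceptron branch predictor (generic textbook scheme, not AMD's design)."""

    def __init__(self, num_tables=4, seg_len=4, table_size=512, theta=20, wmax=63):
        self.num_tables = num_tables  # one weight table per history segment
        self.seg_len = seg_len        # global-history bits seen by each table
        self.table_size = table_size
        self.theta = theta            # keep training while |sum| <= theta
        self.wmax = wmax              # weights saturate at [-wmax - 1, wmax]
        self.tables = [[0] * table_size for _ in range(num_tables)]
        self.ghr = 0                  # global history register, bit 0 = newest outcome

    def _indices(self, pc):
        """Hash the branch PC with each table's slice of global history."""
        seg_mask = (1 << self.seg_len) - 1
        idx = []
        for t in range(self.num_tables):
            seg = (self.ghr >> (t * self.seg_len)) & seg_mask
            idx.append((pc ^ (seg * 0x9E37) ^ (t << 7)) % self.table_size)
        return idx

    def predict(self, pc):
        """Sum the selected weights; a non-negative sum predicts taken."""
        total = sum(self.tables[t][i] for t, i in enumerate(self._indices(pc)))
        return total >= 0, total

    def update(self, pc, taken):
        """Predict, train on a mispredict or low-confidence sum, then shift history."""
        pred, total = self.predict(pc)
        if pred != taken or abs(total) <= self.theta:
            step = 1 if taken else -1
            for t, i in enumerate(self._indices(pc)):
                w = self.tables[t][i] + step
                self.tables[t][i] = max(-self.wmax - 1, min(self.wmax, w))
        hist_mask = (1 << (self.num_tables * self.seg_len)) - 1
        self.ghr = ((self.ghr << 1) | int(taken)) & hist_mask
        return pred


bp = HashedPerceptron()
pattern = [i % 2 == 0 for i in range(300)]  # strictly alternating taken / not-taken branch
hits = [bp.update(0x401000, taken) == taken for taken in pattern]
```

Trained on a strictly alternating branch, the predictor learns the pattern from global history alone, which a simple per-branch saturating counter cannot do.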

| Line 273: | Line 653: | ||

* 512-entry L2 TLB, no 1G pages | * 512-entry L2 TLB, no 1G pages | ||

| − | Instructions are fetched from the [[L2 cache]] at the rate of 32B/cycle. Zen | + | ===== Fetching ===== |

| + | Instructions are fetched from the [[L2 cache]] at the rate of 32B/cycle. Zen features an asymmetric [[level 1 cache]] with a 64 [[KiB]] [[instruction cache]], double the size of the L1 data cache. Depending on the branch prediction decision, instructions may be fetched from the instruction cache or from the [[µOPs]] cache, the latter eliminating the need for costly instruction decoding. | ||

[[File:amd zen hc28 decode.png|left|300px]] | [[File:amd zen hc28 decode.png|left|300px]] | ||

| − | On the traditional side of decode instructions are fetched from the L1$ at 32B | + | On the traditional side of decode, instructions are fetched from the L1$ as 32 aligned bytes per cycle and go to the instruction byte buffer and through the pick stage to the decoders. Actual tests show the effective throughput is generally much lower (around 16-20 bytes per cycle). This is slightly higher than the 16-byte fetch window of [[Intel]]'s {{intel|Skylake}}. The instruction byte buffer has 20 entries (10 entries per thread in SMT). |

| + | |||

| + | ===== µOP cache & x86 tax ===== | ||

| + | Decoding is the biggest weakness of [[x86]], with decoders being one of the most expensive and complicated aspects of the entire microarchitecture. Instructions can vary in length from a single byte up {{x86|instructions format|to fifteen}} bytes, so determining instruction boundaries is a complex task in itself. The best way to avoid the x86 decoding tax is to not decode instructions at all. Ideally, most instructions hit in the BP and acquire a µOP tag, so their µOPs are retrieved directly from the µOP cache and sent to the µOP Queue. This bypasses most of the expensive fetching and decoding that would otherwise be needed. This caching mechanism is also a considerable power-saving feature. | ||

| + | |||

| + | The [[µOP cache]] used in Zen is not a [[trace cache]] and more closely resembles the one used by Intel in their microarchitectures since {{intel|Sandy Bridge|l=arch}}. The µOP cache is an independent unit, not part of the [[L1I$]], and is not necessarily a subset of the L1I cache either; i.e., there are instances where there could be a hit in the µOP cache but a miss in the L1I$. This happens when an instruction whose µOPs were stored in the µOP cache gets evicted from the L1I$. During the fetch stage, both paths must be probed. Zen has a specific unit called 'Micro-Tags' which does the probing and determines whether the instruction should be accessed from the µOP cache or from the L1I$. The µOP cache itself has dedicated tags for accessing those µOPs. | ||
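The following hypothetical Python model illustrates why both fetch paths must be probed: because the µOP cache is not kept inclusive of the L1I$, an address can still hit in the µOP cache after its line has been evicted from the L1I$. The class, method names, and fill policy are illustrative assumptions, not a description of Zen's Micro-Tags hardware.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set, Tuple


@dataclass
class ToyFrontEnd:
    """Hypothetical model of the two fetch paths; names and policy are illustrative."""
    op_cache: Dict[int, List[str]] = field(default_factory=dict)  # fetch addr -> µOPs
    l1i: Set[int] = field(default_factory=set)                    # addrs resident in L1I$

    def fetch(self, addr: int, decode: Callable[[int], List[str]]) -> Tuple[str, List[str]]:
        # Micro-tag style probe: an op-cache hit skips the L1I$ and the decoders entirely
        if addr in self.op_cache:
            return "op_cache", self.op_cache[addr]
        # Otherwise take the legacy path: L1I$ (filled from L2 on a miss), then decode
        path = "l1i" if addr in self.l1i else "l2_fill"
        self.l1i.add(addr)
        uops = decode(addr)
        self.op_cache[addr] = uops  # fill the op cache for subsequent fetches
        return path, uops

    def evict_l1i(self, addr: int) -> None:
        # The op cache is not inclusive of the L1I$, so its entry survives this eviction
        self.l1i.discard(addr)
```

For example, fetching an address once, evicting it from the L1I$, and fetching it again returns it from the op cache without touching the decoders.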

| + | |||

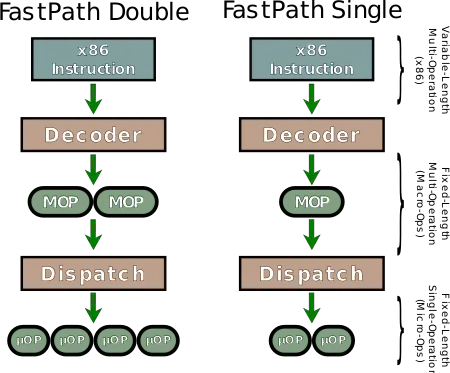

| + | ===== Decode ===== | ||

| + | [[File:amd fastpath single-double (zen).svg|right|450px]] | ||

| + | Because Zen executes [[x86]], some instructions actually include multiple operations. Some of those operations cannot be realized efficiently in an OoOE design and therefore must be converted into simpler operations. In the front-end, complex x86 instructions are broken down into simpler fixed-length operations called [[macro-operations]] or MOPs (sometimes also called complex OPs or COPs). Those are often mistaken for being "[[RISC]]ish" in nature, but they retain their CISC characteristics. MOPs can perform both an arithmetic operation and a memory operation (e.g. you can read, modify, and write in a single MOP). MOPs can be further cracked into smaller, simpler fixed-length operations called [[micro-operations]] (µOPs). A µOP performs just a single operation (i.e., only a single load, store, or arithmetic operation). Traditionally AMD distinguished between the two types of ops; with Zen, however, AMD simply refers to everything as µOPs, although internally they are still two separate concepts. | ||

| + | |||

| + | Decoding is done by the 4 Zen decoders. The decode stage allows four [[x86]] instructions to be decoded per cycle, which are in turn sent to the µOP Queue. Previously, in the {{\\|Bulldozer}}/{{\\|Jaguar}}-based designs, AMD had two paths: a FastPath Single, which emitted a single MOP, and a FastPath Double, which emitted two MOPs; both were then sent down the pipe to the schedulers. Michael Clark (Zen's lead architect) noted that Zen has significantly denser MOPs, meaning almost all instructions will be a FastPath Single (i.e., one-to-one transformations). What would normally get broken down into two MOPs in {{\\|Bulldozer}} is now translated into a single dense MOP. For this reason, while up to 8 MOPs/cycle can be emitted, usually only 4 MOPs/cycle are emitted from the [[instruction decoder|decoders]]. | ||

| + | |||

| + | Dispatch is capable of sending up to 6 µOPs to the [[Integer]] EX and up to 4 µOPs to the [[Floating Point]] (FP) EX. Zen can dispatch to both at the same time (i.e., a maximum of 10 µOPs per cycle); however, since the retire control unit (RCU) can only handle up to 6 MOPs/cycle, the effective number of dispatched µOPs is likely lower. | ||

| + | |||

| + | ====== MSROM ====== | ||

| + | A third path that may occasionally be reached is the Microcode Sequencer (MS) ROM. Instructions that end up emitting more than two macro-ops are redirected to the microcode ROM. When this happens, the OP Queue is stalled (possibly along with the decoders) and the MSROM gets to emit its MOPs. | ||
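As a simplified sketch of the decode-path rules described above, the following Python classifies an instruction by how many macro-ops it produces and summarizes one 4-wide decode group. Treating MSROM instructions as contributing no decoder MOPs (and ignoring the resulting stall) is a simplifying assumption, as are the function names.

```python
from typing import Dict, List


def decode_path(num_mops: int) -> str:
    """Map the number of macro-ops an x86 instruction produces to its decode path."""
    if num_mops < 1:
        raise ValueError("an instruction emits at least one MOP")
    if num_mops == 1:
        return "FastPath Single"
    if num_mops == 2:
        return "FastPath Double"
    return "MSROM"  # more than two MOPs: redirected to the microcode ROM


def decode_group(mop_counts: List[int], width: int = 4) -> Dict[str, int]:
    """Summarize one decode group of up to `width` instructions (toy model)."""
    group = mop_counts[:width]
    paths = [decode_path(n) for n in group]
    # Only FastPath instructions are emitted by the decoders themselves
    emitted = sum(n for n, p in zip(group, paths) if p != "MSROM")
    return {
        "instructions": len(group),
        "mops_from_decoders": emitted,
        "msrom_instructions": paths.count("MSROM"),
    }
```

A group of four FastPath Double instructions yields the 8 MOPs/cycle peak, while the common case of four FastPath Single instructions yields 4.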

| − | At the decode stage Zen incorporates the | + | ===== Optimizations ===== |

| + | A number of optimization opportunities are exploited at this stage. | ||

| + | ====== Stack Engine ====== | ||

| + | At the decode stage Zen incorporates the Stack Engine Memfile (SEM). Note that while AMD refers to the SEM as a new unit, AMD has had a Stack Engine in its designs since {{\\|K10}}. The Memfile sits between the queue and dispatch, monitoring the MOP traffic. The Memfile is capable of performing [[store-to-load forwarding]] right at dispatch for loads that trail behind known stores with physical addresses. Other optimizations, such as eliminating the stack-pointer updates of PUSH/POP operations, are also done at this stage so that those updates are effectively zero-latency; subsequent instructions that rely on the stack pointer are not delayed. This is a fairly effective low-power solution that off-loads some of the work that would otherwise be done by the [[AGU]]s. | ||
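The stack-pointer tracking idea can be sketched as a toy pass over PUSH/POP instructions: the engine keeps a running RSP offset, so each PUSH or POP becomes a single store or load at a known displacement, with no separate µOP for the 8-byte RSP adjustment. This is a hypothetical Python model assuming 64-bit mode; the function name and µOP tuples are illustrative, and it does not model how or when the real Memfile synchronizes the architectural RSP.

```python
from typing import List, Tuple


def stack_engine(instrs: List[Tuple[str, str]]) -> Tuple[List[tuple], int]:
    """Toy stack-engine pass: track RSP as a running offset instead of updating it.

    Each PUSH/POP becomes one store/load at [RSP_base + offset]; no µOP is emitted
    for the RSP adjustment itself. The returned offset is the RSP delta still owed
    to the architectural register (64-bit mode assumed, so 8 bytes per slot).
    """
    offset = 0
    out = []
    for op, reg in instrs:
        if op == "push":
            offset -= 8                         # RSP would move down by 8
            out.append(("store", reg, offset))  # store to [RSP_base + offset]
        elif op == "pop":
            out.append(("load", reg, offset))   # load from [RSP_base + offset]
            offset += 8                         # RSP would move up by 8
        else:
            out.append((op, reg))               # other ops pass through untouched
    return out, offset
```

For `push rax; push rbx; pop rcx`, the sketch emits two stores and one load and no RSP arithmetic, leaving a net offset of -8 bytes.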

| − | + | ====== µOP-Fusion ====== | |

| + | At this stage of the pipeline, Zen performs additional optimizations such as [[micro-op fusion]] or branch fusion, an operation where a comparison and a branch op are combined into a single µOP (resulting in a single schedule and a single execute). An almost identical optimization is also performed by Intel's competing microarchitectures. In Zen, <code>{{x86|CMP}}</code> or <code>{{x86|TEST}}</code> (no other [[ALU]] instructions qualify) immediately followed by a [[conditional jump]] can be fused into a single µOP. Note that a CMP or TEST with a non-{{x86|RIP-Relative Addressing|RIP-relative memory}} operand will not be fused. Up to two fused branch µOPs can be executed each cycle when [[not taken]]; when taken, only a single fused branch µOP can be executed each cycle. | ||

| + | It's worth reiterating that branch fusion is actually done by the dispatch stage instead of decode. This is a bit unusual because that operation would normally be performed in decode in order to reduce the number of internal instructions. In Zen, the decoders can still end up emitting two ops just to be fused together in the dispatch stage. This change can likely be attributed to the various optimizations that came along with the introduction of the µOPs cache (which sits parallel to the decoders in the pipeline). It also suggests that the decoders are of a simple design whose output is further translated later on in the pipe, and are therefore limited to a number of key transformations such as instruction boundary detection (i.e., x86 instruction length and rearrangement). | ||
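The fusion rule described in this section can be expressed as a small Python pass over a list of decoded instructions. The `fuse_branches` function, the tuple encoding, and the jump-mnemonic set are hypothetical illustrations; the rule itself (CMP or TEST immediately followed by a conditional jump, with no fusion when the CMP/TEST has a non-RIP-relative memory operand) follows the text.

```python
from typing import List, Sequence, Tuple

Instr = Tuple[str, Sequence[str]]

# Common conditional-jump mnemonics (illustrative, not an exhaustive list)
JCC = {"ja", "jae", "jb", "jbe", "je", "jne", "jg", "jge", "jl", "jle",
       "jo", "jno", "js", "jns", "jp", "jnp", "jz", "jnz"}


def has_non_rip_memory(operands: Sequence[str]) -> bool:
    """True if any operand is a memory reference that is not RIP-relative."""
    return any("[" in op and "rip" not in op.lower() for op in operands)


def fuse_branches(instrs: List[Instr]) -> List[tuple]:
    """Fuse CMP/TEST immediately followed by a Jcc into one µOP (toy model)."""
    out = []
    i = 0
    while i < len(instrs):
        mnem, ops = instrs[i]
        nxt = instrs[i + 1] if i + 1 < len(instrs) else None
        if (mnem in ("cmp", "test") and nxt is not None and nxt[0] in JCC
                and not has_non_rip_memory(ops)):
            out.append(("fused", mnem, nxt[0]))  # one µOP: one schedule, one execute
            i += 2
        else:
            out.append((mnem, tuple(ops)))
            i += 1
    return out
```

An `add` followed by `jne` stays as two µOPs because only CMP and TEST qualify, and `cmp [rdi], 0` followed by `je` is likewise left unfused.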

{{clear}} | {{clear}} | ||

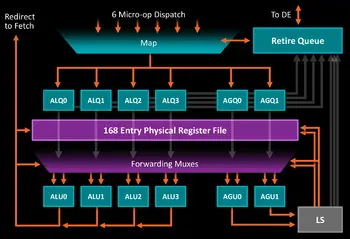

==== Execution Engine ==== | ==== Execution Engine ==== | ||

[[File:amd zen hc28 integer.png|350px|right]] | [[File:amd zen hc28 integer.png|350px|right]] | ||

| − | As mentioned early, Zen returns to a fully partitioned core design with a private L2 cache and private [[FP]]/[[SIMD]] units. Previously those units | + | As mentioned earlier, Zen returns to a fully partitioned core design with a private L2 cache and private [[FP]]/[[SIMD]] units. Previously those units shared resources spanning two cores. Zen's Execution Engine (Back-End) is split into two major sections: [[integer]] & memory operations and [[floating point]] operations. The two sections are decoupled with independent [[register renaming|renaming]], [[schedulers]], [[queues]], and execution units. Both the Integer and FP sections have access to the [[Retire Queue]], which has 192 entries (96 per thread) and can [[retire]] 8 instructions per cycle (independent of either Integer or FP). The wider-than-dispatch retire allows Zen to catch up and free resources much more quickly (previous architectures saw a bottleneck at this point when an older op stalled, reducing performance while retire caught up to the front of the machine). |

| + | |||

| + | Because the two regions are entirely divided, a one-cycle latency penalty is incurred for operands that cross domains; for example, if an [[operand]] of an integer arithmetic µOP depends on the result of a floating point µOP. This applies both ways. This is similar to the inter-[[Common Data Bus]] exchanges in Intel's designs (e.g., {{intel|Skylake|l=arch}}), which incur a delay of 1 to 2 cycles when dependent operands cross domains. | ||

| + | ===== Move elimination ===== | ||

| + | Move elimination is possible in both the Integer and FP domains; register moves are done internally by modifying the register mapping rather than by executing a µOP. No execution unit resources are used in the process, and such µOPs have zero latency. In WikiChip's tests, almost all move eliminations succeed, including chained moves. Elimination never occurs for a move of a register to itself. This applies to both 32-bit and 64-bit integer registers as well as all 128-bit and 256-bit vector registers, but not to partial registers (e.g. 16-bit and 8-bit registers). | ||
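Move elimination can be illustrated with a toy register alias table: an eliminated move simply copies the source's physical-register mapping to the destination, so no execution unit is used. The `RenameTable` class is a hypothetical Python sketch, not Zen's renamer; the default of 168 physical registers is taken from the integer register file size stated in this article, and the width check mirrors the stated exclusion of 8/16-bit registers.

```python
from typing import Dict, List


class RenameTable:
    """Toy register alias table showing move elimination (not Zen's actual logic).

    A real renamer also reference-counts physical registers so that a shared one is
    freed only when no architectural register maps to it; that is omitted here.
    """

    def __init__(self, arch_regs: List[str], num_phys: int = 168):
        self.rat: Dict[str, int] = {r: p for p, r in enumerate(arch_regs)}
        self.free: List[int] = list(range(len(arch_regs), num_phys))
        self.executed = 0  # µOPs that actually needed an execution unit

    def _write(self, dst: str) -> None:
        self.rat[dst] = self.free.pop(0)  # allocate a fresh physical register
        self.executed += 1

    def mov(self, dst: str, src: str, width: int = 64) -> str:
        # Eliminate full 32/64-bit moves between different registers by remapping
        if dst != src and width in (32, 64):
            self.rat[dst] = self.rat[src]
            return "eliminated"
        self._write(dst)  # self-moves and 8/16-bit moves execute normally
        return "executed"

    def alu(self, dst: str) -> None:
        self._write(dst)  # any ordinary result-producing op needs a new register
```

Chained moves such as `mov rbx, rax; mov rcx, rbx` both resolve to the same physical register as `rax` without executing anything.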

===== Integer ===== | ===== Integer ===== | ||

| − | The Integer Execute can receive up to 6 µOPs/cycle from Dispatch where it is mapped from [[logical registers]] to [[physical registers]]. Zen has a 168-entry physical integer [[register file]], an identical size to that of {{intel|Broadwell|l=arch}}. Instead of a large scheduler, Zen has 6 distributed scheduling queues, each 14 entries deep (4x[[ALU]], 2x[[AGU]]). Zen includes a number of enhancements such as differential checkpoints tracking branch instructions and eliminating redundant values as well as [[move eliminations]]. Note that register moves are done internally by modifying the register mapping rather than through an execution of a µOP | + | The Integer Execute can receive up to 6 µOPs/cycle from Dispatch, where they are mapped from [[logical registers]] to [[physical registers]]. Zen has a 168-entry physical 64-bit integer [[register file]], the same size as that of {{intel|Broadwell|l=arch}}. Instead of a large scheduler, Zen has 6 distributed scheduling queues, each 14 entries deep (4x[[ALU]], 2x[[AGU]]). Zen includes a number of enhancements such as differential checkpoints tracking branch instructions and eliminating redundant values, as well as [[move eliminations]]. Note that register moves are done internally by modifying the register mapping rather than through the execution of a µOP. While AMD stated that the ALUs are largely symmetric apart from a few exceptions, it's still unknown which operations are restricted to which units. |

| + | |||

| + | Generally, the four ALUs will execute four integer instructions per cycle. Simple operations can be done by any of the ALUs, whereas the more expensive multiplication and division operations can only be done by their dedicated ALUs (there is one of each). Additionally, two of Zen's [[ALU]]s are capable of performing a branch, so Zen can peak at 2 branches per cycle, but only if they are not taken. The two branch-capable ALUs can execute two branch instructions simultaneously, either from the same thread or from two separate threads. If a branch is taken, Zen is restricted to only 1 branch per cycle. A similar restriction is found in Intel's architectures such as {{intel|Haswell|l=arch}}, where port 0 can only execute predicted "not-taken" branches whereas port 6 can execute both "taken" and "not taken" ones. However, AMD added its second branch unit for an entirely different reason than Haswell, which had done the same. The second branch unit in {{intel|Haswell|l=arch}} was added largely to mitigate port contention: prior to that change, tight loops that performed SSE operations ended up fighting over the same port, as both the SSE operation and the branch were scheduled on it. Zen doesn't have this issue; the addition of a second branch unit in its case serves purely to boost the performance of branch-heavy code. | ||

| + | |||

| + | The 2 [[AGU]]s can be used in conjunction with the ALUs. µOPs involving a memory operand use both at the same time and are not split (i.e., the operations don't get broken up). Zen is capable of read+write or read+read operations in one cycle (See [[#Memory Subsystem|§ Memory Subsystem]]). | ||

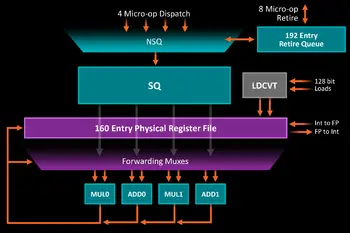

===== Floating Point ===== | ===== Floating Point ===== | ||

| + | The Floating Point side can receive up to 4 µOPs/cycle from Dispatch, where they are mapped from [[logical registers]] to [[physical registers]]. Zen has a 160-entry physical 128-bit floating point [[register file]], just 8 entries shy of the size used in [[Intel]]'s {{intel|Skylake|l=arch}}/{{intel|Kaby Lake|l=arch}} architectures. The register file can perform direct transfers to the Integer register file as needed. | ||

[[File:amd zen hc28 fp.png|350px|left]] | [[File:amd zen hc28 fp.png|350px|left]] | ||

| − | |||

| + | Before ops go to the scheduling queue, they first pass through the Non-Scheduling Queue (NSQ), which is essentially a wait buffer. Because FP instructions typically have higher latency, they can create a back-up at Dispatch. The NSQ mitigates this by queuing more FP instructions, which lets Dispatch keep going on the Integer side as much as possible. Additionally, the NSQ can go ahead and start working on the memory components of the FP instructions so that they are ready once they go through the Scheduling Queue. From the schedulers, the instructions are sent to be executed. The FP scheduler has four pipes (1 more than that of {{\\|Excavator}}) with execution units that operate on 128-bit floating point. | ||

| + | |||

| + | The FP side handles all vector operations. The simple integer vector operations (e.g. shift, add) can all be done in one cycle, half the latency of AMD's previous architecture. Basic [[floating point]] math has a latency of three cycles, including [[multiplication]] (one additional cycle for double precision). [[Fused multiply-add]] operations take five cycles. | ||
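Combining the latencies stated here with the one-cycle penalty for crossing between the Integer and FP domains, the latency of a dependency chain can be estimated with a simple sum. This is a rough Python sketch, not a timing model: the 1-cycle scalar integer ALU latency is an assumption, the double-precision penalty is omitted, and the op names are hypothetical labels.

```python
from typing import Dict, List, Tuple

# (domain, latency in cycles). FP and vector-integer figures follow the text;
# the 1-cycle scalar integer ALU latency is an assumption for illustration.
OPS: Dict[str, Tuple[str, int]] = {
    "int_add": ("int", 1),
    "vec_int": ("fp", 1),   # simple integer vector op (e.g. shift, add)
    "fadd":    ("fp", 3),   # single-precision add
    "fmul":    ("fp", 3),   # single-precision multiply
    "fma":     ("fp", 5),   # fused multiply-add
}
CROSS_DOMAIN_PENALTY = 1  # extra cycle when a result crosses Integer <-> FP


def chain_latency(chain: List[str]) -> int:
    """Latency of a chain where each op depends on the previous op's result."""
    total = 0
    prev_domain = None
    for name in chain:
        domain, lat = OPS[name]
        if prev_domain is not None and domain != prev_domain:
            total += CROSS_DOMAIN_PENALTY
        total += lat
        prev_domain = domain
    return total
```

Two dependent FMAs cost 10 cycles, while an FP add feeding a scalar integer add costs 3 + 1 + 1 = 5 cycles because of the domain crossing.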

| + | |||

| + | The FP has a single pipe for 128-bit load operations. In fact, the entire FP side is optimized for 128-bit operations. Zen supports all the latest instructions such as SSE and {{x86|AVX1}}/{{x86|AVX2|2}}. 256-bit AVX was designed so that its operations can be carried out as two independent 128-bit operations. Zen takes advantage of that by executing those instructions as two operations; i.e., Zen splits 256-bit operations into two µOPs, so they effectively have half the throughput of their 128-bit counterparts. Likewise, stores are also done in 128-bit chunks, giving 256-bit stores an effective throughput of one store every two cycles. The pipes are fairly well balanced, so most operations have at least two pipes to be scheduled on, retaining a throughput of at least one such instruction each cycle. As implied, 256-bit operations use up twice the resources to complete (i.e., 2x registers, scheduler entries, and ports). This is a compromise [[AMD]] has taken which helps conserve die space and power. By contrast, [[Intel]]'s competing design, {{intel|Skylake}}, does have dedicated 256-bit circuitry. It's also worth noting that Intel's contemporary {{intel|Skylake SP|server class models|l=core}} have extended this further to incorporate dedicated 512-bit circuitry supporting {{x86|AVX-512}}, with the highest-performance models {{intel|Skylake (server)#Execution_engine|l=arch|having a whole second}} dedicated AVX-512 unit. | ||
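The 128-bit splitting of 256-bit operations can be sketched in Python by emulating a 256-bit packed single-precision add (eight fp32 lanes) as two 128-bit halves, each counted as one µOP. The function names are hypothetical, and `capable_pipes=2` reflects the statement that most operations have at least two pipes available; actual per-instruction pipe assignments are not modeled.

```python
from typing import List, Tuple


def vaddps_256(a: List[float], b: List[float]) -> Tuple[List[float], List[str]]:
    """Emulate a 256-bit packed single-precision add as two 128-bit µOPs."""
    if len(a) != 8 or len(b) != 8:
        raise ValueError("a 256-bit vector holds eight fp32 lanes")
    result: List[float] = []
    uops: List[str] = []
    for half in (slice(0, 4), slice(4, 8)):  # low 128 bits, then high 128 bits
        uops.append("vaddps_128")
        result.extend(x + y for x, y in zip(a[half], b[half]))
    return result, uops


def ops_per_cycle(width_bits: int, capable_pipes: int = 2) -> float:
    """Peak ops/cycle if each 128-bit µOP occupies one pipe for one cycle (assumed)."""
    uops_per_op = max(1, width_bits // 128)
    return capable_pipes / uops_per_op
```

Under these assumptions a 128-bit op sustains 2 per cycle and a 256-bit op 1 per cycle, matching the halved throughput described above.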

| + | |||

| + | Zen also supports {{x86|SHA}} and {{x86|AES}}, with 2 AES units implemented to improve encryption performance. Those units can be found on pipes 0 and 1 of the floating point scheduler. | ||

{{clear}} | {{clear}} | ||

==== Memory Subsystem ==== | ==== Memory Subsystem ==== | ||

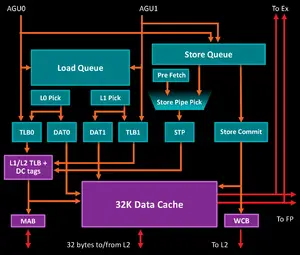

[[File:amd zen hc28 memory.png|300px|right]] | [[File:amd zen hc28 memory.png|300px|right]] | ||

| − | Loads and Stores are conducted via the two AGUs which can operate simultaneously. Zen has a | + | Loads and stores are conducted via the two AGUs, which can operate simultaneously. Zen has a 44-entry Load Queue and a 44-entry Store Queue. Taking the two 14-entry deep AGU schedulers into account, the processor can keep up to 72 out-of-order loads in flight (same as Intel's {{intel|Skylake|l=arch}}). Zen employs a split TLB-data pipe design which allows TLB tag access to take place while the data cache is being fed, in order to determine whether the data is available and to send its address to the L2 to start prefetching early on. Zen is capable of up to two loads per cycle (16B each) and up to one store per cycle (16B). The L1 TLB has 64 entries for all page sizes and the L2 TLB has 1536 entries, with no 1 GiB pages. |
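The load-queue and bandwidth figures above reduce to simple arithmetic, sketched here in Python. The constants come from the text; the TLB-reach helper assumes every entry maps a 4 KiB page, which is an illustrative assumption rather than a statement about how Zen's TLBs are populated in practice.

```python
LOAD_QUEUE_ENTRIES = 44
AGU_SCHEDULER_ENTRIES = 14
NUM_AGUS = 2
LOAD_WIDTH_BYTES = 16
STORE_WIDTH_BYTES = 16


def loads_in_flight() -> int:
    # Load queue plus the loads still waiting in the two AGU schedulers
    return LOAD_QUEUE_ENTRIES + NUM_AGUS * AGU_SCHEDULER_ENTRIES


def l1d_bytes_per_cycle(loads: int = 2, stores: int = 1) -> tuple:
    # Peak (load, store) bandwidth between the core and the L1D$
    return loads * LOAD_WIDTH_BYTES, stores * STORE_WIDTH_BYTES


def tlb_reach(entries: int, page_bytes: int) -> int:
    # Memory covered by a TLB if every entry maps one page of the given size
    return entries * page_bytes
```

With 4 KiB pages, the 64-entry L1 TLB covers 256 KiB and the 1536-entry L2 TLB covers 6 MiB.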

| − | Zen incorporates a 64 KiB 4-way set associative L1 instruction cache | + | Zen incorporates a 64 KiB 4-way set associative L1 instruction cache and a 32 KiB 8-way set associative L1 data cache. Both the instruction cache and the data cache can fetch from the L2 cache at 32 bytes per cycle. The L2 cache is a 512 KiB 8-way set associative unified cache, inclusive, and private to the core. The L2 cache can read from and write to the 8 MiB L3 cache at 32B/cycle (32B in either direction each cycle, i.e. a bidirectional bus). |
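Assuming 64-byte cache lines (the usual x86 line size, not stated in this section), the set count and address-bit split of each cache follow directly from its size and associativity. The helper names in this Python sketch are hypothetical.

```python
from typing import Tuple


def cache_geometry(size_bytes: int, ways: int, line_bytes: int = 64) -> Tuple[int, int, int]:
    """Return (sets, offset_bits, index_bits) for a set-associative cache."""
    sets = size_bytes // (ways * line_bytes)
    offset_bits = line_bytes.bit_length() - 1  # log2 of a power-of-two line size
    index_bits = sets.bit_length() - 1         # log2 of a power-of-two set count
    return sets, offset_bits, index_bits


def set_index(addr: int, size_bytes: int, ways: int, line_bytes: int = 64) -> int:
    """Set a byte address maps to: drop the line offset, keep the index bits."""
    sets, offset_bits, _ = cache_geometry(size_bytes, ways, line_bytes)
    return (addr >> offset_bits) % sets
```

Under the 64-byte assumption, the 64 KiB 4-way L1I$ has 256 sets, the 32 KiB 8-way L1D$ has 64 sets, and the 512 KiB 8-way L2 has 1024 sets.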

| + | |||

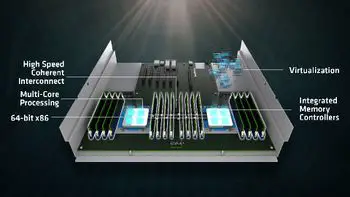

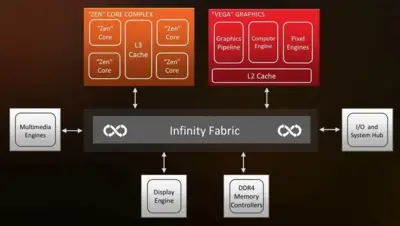

| + | == Infinity Fabric == | ||

| + | {{main|amd/infinity fabric|l1=AMD's Infinity Fabric}} | ||

| + | The '''Infinity Fabric''' ('''IF''') is a system of transmissions and controls that underpins the entire Zen microarchitecture, AMD's graphics microarchitectures (e.g., {{amd|Vega}}), and any other accelerators AMD might add in the future. Consisting of two separate fabrics, one for control signals and a second for data transmission, the Infinity Fabric is the primary means by which data flows from one core to another, across CCXs and chips, to any graphics unit, and from any I/O (e.g., USB). | ||

| + | |||

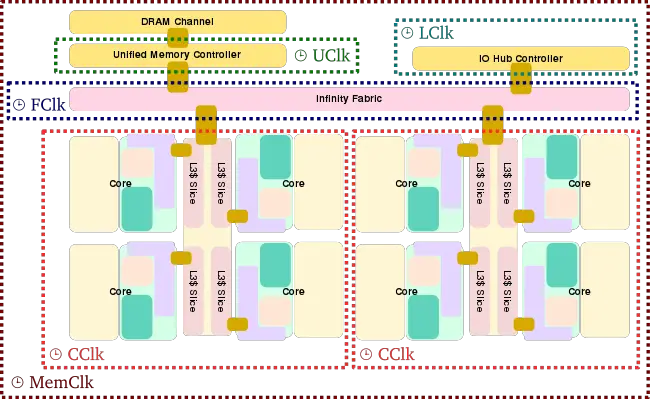

| + | == Clock domains == | ||

| + | Zen is divided into a number of [[clock domains]], each operating at a certain frequency: | ||

| + | |||

| + | * '''UClk''' - UMC Clock - The frequency at which the Unified Memory Controller (UMC) operates. This frequency is identical to MemClk. | ||

| + | * '''LClk''' - Link Clock - The clock at which the I/O Hub Controller communicates with the chip. | ||

| + | * '''FClk''' - Fabric Clock - The clock at which the data fabric operates. This frequency is identical to MemClk. | ||

| + | * '''MemClk''' - Memory Clock - Internal and external memory clock. | ||

| + | * '''CClk''' - Core Clock - The frequency at which the CPU cores and caches operate (i.e. the advertised frequency). | ||

| + | |||

| + | For example, a stock {{amd|Ryzen 7 1700}} with 2400 MT/s DRAM will have a CClk = 3000 MHz, MemClk = FClk = UClk = 1200 MHz. | ||
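The example above follows from DDR memory performing two transfers per memory clock: MemClk is half the DRAM transfer rate, and FClk and UClk track MemClk. A minimal Python sketch; the function name is hypothetical and LClk is not modeled.

```python
def zen_clock_domains(dram_mts: float, cclk_mhz: float) -> dict:
    """Derive the memory-side clocks (MHz) from the DRAM transfer rate (MT/s)."""
    memclk = dram_mts / 2  # DDR: two transfers per memory clock
    return {
        "CClk": cclk_mhz,  # cores and caches
        "MemClk": memclk,  # memory clock
        "FClk": memclk,    # data fabric runs at MemClk
        "UClk": memclk,    # memory controller runs at MemClk
    }
```

For DDR4-2400 and a 3000 MHz core clock, this yields MemClk = FClk = UClk = 1200 MHz.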

| + | |||

| + | |||

| + | [[File:zen soc clock domain.svg|650px]] | ||

| + | |||

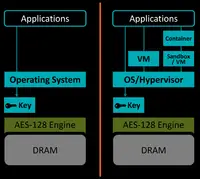

| + | == Security == | ||

| + | [[File:amd sme.png|right|200px]] | ||

| + | AMD incorporated a number of new security technologies into their server-class Zen processors (e.g., {{amd|EPYC}}). The various security features are offered via a new dedicated security subsystem which integrates an {{armh|Cortex-A5|l=arch}} core. The dedicated secure processor runs a secured kernel, with the firmware stored externally (e.g., on an SPI ROM). The secure processor is responsible for cryptographic functions such as secure key generation and management, as well as hardware-validated boot. | ||

| + | |||

| + | <table class="wikitable"> | ||

| + | <tr><td></td><th>SME</th><th>SEV</th></tr> | ||

| + | <tr><th>Protection Per</th><td>Whole Machine</td><td>Individual VMs</td></tr> | ||

| + | <tr><th>Type of Protection</th><td>Physical Memory Attack</td><td>Physical Memory Attack<br>Vulnerable VM</td></tr> | ||

| + | <tr><th>Encryption Per</th><td>Native page table</td><td>Guest page table</td></tr> | ||

| + | <tr><th>Key Management</th><td>One key per machine</td><td>One key per VM</td></tr> | ||

| + | <tr><th>Requires Driver</th><td>No</td><td>Yes</td></tr> | ||

| + | </table> | ||

| + | |||

| + | |||

| + | === Secure Memory Encryption (SME) === | ||

| + | {{main|x86/secure memory encryption|l1=Secure Memory Encryption}} | ||

| + | '''Secure Memory Encryption''' ('''SME''') is a new feature that offers full hardware memory encryption to protect against physical memory attacks. A single key is used for the encryption. An [[AES-128]] encryption engine sits in the [[integrated memory controller]], providing real-time encryption selectable per page table entry; this works across execution cores, network, storage, graphics, and any other I/O access that goes through DMA. SME adds latency only for accesses to encrypted pages. | ||

| + | |||

| + | In addition to the server models, AMD also supports '''Transparent SME''' ('''TSME''') on its workstation-class PRO (Performance, Reliability, Opportunity) processors. TSME is a subset of SME limited to base encryption without OS/hypervisor (HV) involvement, allowing legacy OS/HV software to be supported. In this mode, all memory is encrypted regardless of the value of the C-bit on any particular page. When this mode is enabled, SME and SEV are not available. | ||
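To make the C-bit concrete, here is a minimal Python sketch of how an OS could mark a page table entry for encryption under SME, and how TSME makes the bit irrelevant. The bit position is an assumption: hardware reports it in CPUID Fn8000_001F EBX[5:0], and bit 47 is used here only as an illustrative default (the value commonly cited for first-generation EPYC). The helper names are hypothetical.

```python
# Assumed C-bit position; real software must read CPUID Fn8000_001F EBX[5:0].
C_BIT_POS = 47


def set_c_bit(pte: int, c_bit_pos: int = C_BIT_POS) -> int:
    """Return the page table entry with its C-bit set (page will be encrypted)."""
    return pte | (1 << c_bit_pos)


def clear_c_bit(pte: int, c_bit_pos: int = C_BIT_POS) -> int:
    """Return the page table entry with its C-bit cleared (page left unencrypted)."""
    return pte & ~(1 << c_bit_pos)


def is_encrypted(pte: int, tsme: bool = False, c_bit_pos: int = C_BIT_POS) -> bool:
    """Under SME only pages with the C-bit set are encrypted; under TSME all memory is."""
    return tsme or bool(pte & (1 << c_bit_pos))


pte = 0x123456003  # physical address 0x123456000 with present + writable flags
print(hex(set_c_bit(pte)))            # 0x800123456003
print(is_encrypted(pte))              # False
print(is_encrypted(pte, tsme=True))   # True
```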

| + | |||

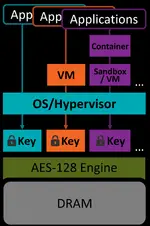

| + | === Secure Encrypted Virtualization (SEV) === | ||

| + | {{main|x86/secure encrypted virtualization|l1=Secure Encrypted Virtualization}}[[File:amd sev.png|right|150px]] | ||

| + | '''Secure Encrypted Virtualization''' ('''SEV''') is a more specialized version of SME in which individual keys can be used per hypervisor and per VM, cluster of VMs, or container. This allows the memory of the hypervisor and of each guest to be encrypted and cryptographically isolated from one another. Additionally, SEV-protected VMs can run alongside unencrypted VMs on the same hypervisor. All of this functionality is integrated with, and works alongside, existing AMD-V technology. | ||
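The key-isolation idea can be illustrated with a toy Python model of which key protects which memory: one host key, a separate key for each SEV guest, and no key for an unencrypted guest on the same hypervisor. AMD ties SEV keys to the guest's ASID; here a plain integer guest id stands in for it, and `os.urandom` stands in for the secure processor's key generation. The class and method names are hypothetical and do not reflect AMD's actual interface.

```python
import os
from typing import Dict, Optional


class ToySevKeyTable:
    """Toy model of SME/SEV key selection; not AMD's actual interface."""

    def __init__(self) -> None:
        self.host_key = os.urandom(16)          # one 128-bit key for the hypervisor/host
        self.guest_keys: Dict[int, bytes] = {}  # guest id -> its own 128-bit key

    def launch_guest(self, guest_id: int, sev: bool) -> None:
        """Give SEV guests a private key; unencrypted guests get none."""
        if sev:
            self.guest_keys[guest_id] = os.urandom(16)

    def key_for(self, guest_id: Optional[int], c_bit: bool) -> Optional[bytes]:
        """Pick the key for a memory access, or None if the access is not encrypted."""
        if not c_bit:
            return None                         # page not marked for encryption
        if guest_id is None:
            return self.host_key                # access made by the hypervisor
        return self.guest_keys.get(guest_id)    # None for a non-SEV guest


keys = ToySevKeyTable()
keys.launch_guest(1, sev=True)
keys.launch_guest(2, sev=True)
keys.launch_guest(3, sev=False)  # unencrypted VM on the same hypervisor
print(keys.key_for(1, c_bit=True) != keys.key_for(2, c_bit=True))  # True
print(keys.key_for(3, c_bit=True))                                 # None
```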

| + | |||

| + | |||

| + | : [[File:amd sev architecture.png|700px]] | ||

| + | |||

| + | {{clear}} | ||

| + | |||

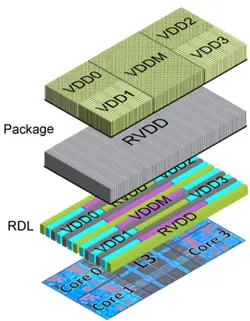

| + | == Power == | ||

| + | <div style="display: inline-block; float: right;"> | ||

| + | [[File:zen ccx voltage.png|250px]] | ||

| + | * '''RDL''' - Redistribution layer | ||

| + | * '''LDOs''' - Regulate RVDD to create VDD per core | ||

| + | * '''RVDD''' - Ungated supply | ||

| + | * '''VDD''' - Gated core supply | ||

| + | * '''VDDM''' - [[L2]]/[[L3]] [[SRAM]] supply | ||

| + | </div> | ||

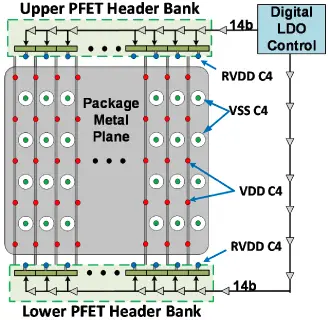

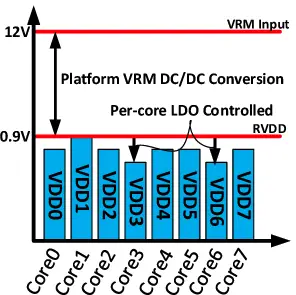

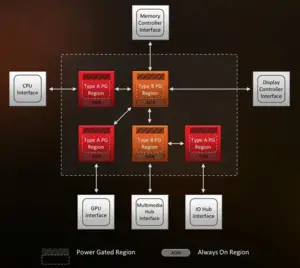

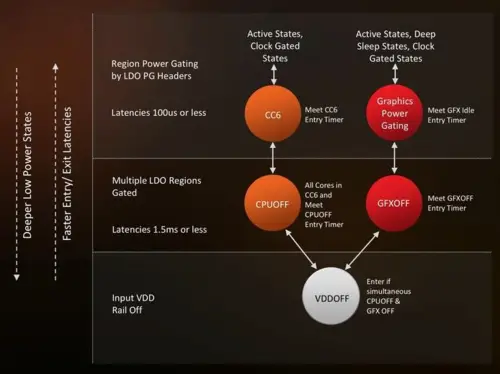

| + | Zen presented AMD with a number of new power-related challenges, largely due to the decision to cover the entire spectrum of systems, from ultra-low power to high performance, with a single design. Previously, AMD handled this by designing two independent architectures (i.e., {{\\|Excavator}} and {{\\|Puma}}). In Zen, the SoC voltage from the [[Voltage Regulator Module]] (VRM) is fed to RVDD, a package metal plane whose voltage follows the highest VID request among all cores. Each core has a digital [[LDO regulator]] (low-dropout) and a [[digital frequency synthesizer]] (DFS), allowing frequency and voltage to vary across power states on a per-core basis. The LDO regulates RVDD for each power domain to create an optimal VDD per core, guided by a network of sensors embedded across the entire chip; the LDOs also help counter voltage droop. This contrasts with [[Intel]]'s approach in {{intel|Haswell|l=arch}}, which integrated the voltage regulator (FIVR) on die, only for it to be removed in {{intel|Skylake|l=arch}} due to the thermal restrictions it created. Zen's new voltage control aims at much finer per-core power tuning based on the information it collects about each core and the chip as a whole. | ||
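The relationship between RVDD and the per-core LDOs can be sketched in Python under simplifying assumptions (ideal LDOs, no droop, and made-up voltages): RVDD is set by the highest request, and each core's LDO drops it to that core's own VDD. The function name and the example voltages are hypothetical.

```python
from typing import Dict, Tuple


def distribute_core_voltage(requests_v: Dict[int, float]) -> Tuple[float, Dict[int, float]]:
    """Model RVDD plus ideal per-core LDOs.

    requests_v maps a core id to the VDD (volts) that core requests.
    Returns the RVDD level and the voltage each core's LDO must drop.
    """
    # The shared RVDD plane follows the highest request among all cores.
    rvdd = max(requests_v.values())
    # An LDO can only regulate downward, so each core drops RVDD to its own VDD.
    dropout = {core: round(rvdd - vdd, 3) for core, vdd in requests_v.items()}
    return rvdd, dropout


# Hypothetical per-core requests for a four-core CCX
rvdd, dropout = distribute_core_voltage({0: 1.20, 1: 0.95, 2: 1.05, 3: 0.90})
print(rvdd)     # 1.2
print(dropout)  # {0: 0.0, 1: 0.25, 2: 0.15, 3: 0.3}
```

With a single shared rail and no LDOs, every core in this example would instead run at 1.2 V.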

| + | |||

| + | <div style="display: block;"> | ||

| + | [[File:amd zen package metal plane.png|350px]] | ||

| + | [[File:amd zen per core voltage distribution.png|350px]] | ||

| + | </div> | ||

| + | |||

| + | |||

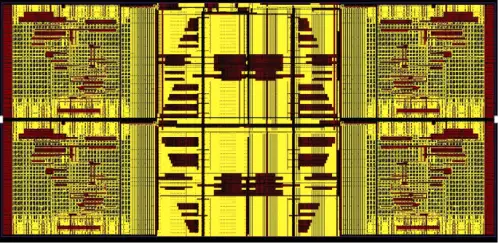

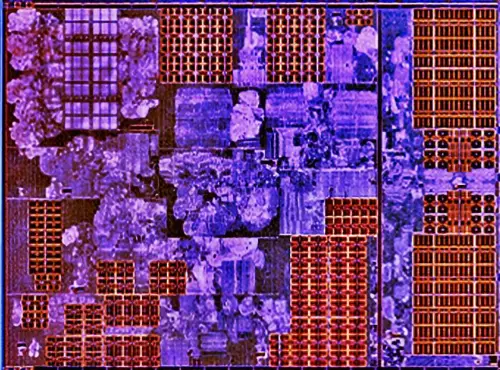

| + | AMD uses a Metal-Insulator-Metal Capacitor (MIMCap) layer between the two upper-level metal layers to inject current quickly and thereby mitigate voltage droop. According to AMD, it covers roughly 45% of the core and slightly less of the L3. Alongside each core's integrated LDO circuit is a low-latency power supply [[droop detector]] that can trigger the digital LDOs to turn on more drivers to counter droops. | ||

| + | |||

| + | |||

| + | <div style="text-align: center;">[[File:amd zen mimcap.png]]</div> | ||

| + | |||

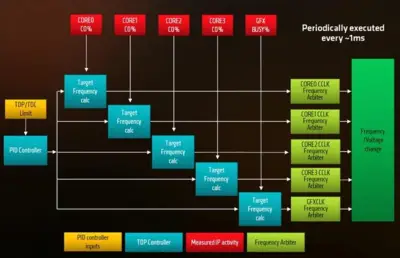

| + | A large number of sensors across the entire die measure many aspects of the CPU state, including [[frequency]], [[voltage]], [[power]], and [[temperature]]. The data is in turn used for workload characterization, [[adaptive voltage]], frequency tuning, and [[dynamic clocking]]. [[Adaptive voltage and frequency scaling]] (AVFS) is an on-die closed-loop system that adjusts the voltage in real time based on the sensor measurements. This is part of AMD's "Precision Boost" technology, which adjusts clocks in fine-grained 25 MHz increments. | ||
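AMD has not published the control algorithm, so the following Python sketch is only a toy model of the idea described above: a closed loop that reads sensor data and nudges the clock in 25 MHz steps. The single-step policy, the limit values, and the parameter names are assumptions for illustration.

```python
STEP_MHZ = 25  # Precision Boost clock granularity


def boost_step(freq_mhz: int, temp_c: float, power_w: float, *,
               base_mhz: int, max_mhz: int,
               temp_limit_c: float, power_limit_w: float) -> int:
    """One iteration of a toy closed-loop boost controller.

    Raises the clock by one 25 MHz step while sensed temperature and power are
    below their limits, and backs off by one step otherwise, staying within
    [base_mhz, max_mhz].
    """
    if temp_c < temp_limit_c and power_w < power_limit_w:
        return min(freq_mhz + STEP_MHZ, max_mhz)
    return max(freq_mhz - STEP_MHZ, base_mhz)


limits = dict(base_mhz=3600, max_mhz=4000, temp_limit_c=95.0, power_limit_w=95.0)
f = 3600
for _ in range(20):  # cool chip: climb toward the boost ceiling
    f = boost_step(f, temp_c=60.0, power_w=70.0, **limits)
print(f)  # 4000
f = boost_step(f, temp_c=96.0, power_w=70.0, **limits)  # over the thermal limit
print(f)  # 3975
```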

| + | |||

| + | Zen implements over 1300 sensors to monitor the state of the die across all [[critical paths]], including the CCX and components outside it such as the memory fabric. Additionally, the CCX incorporates 48 high-speed power supply monitors, 20 [[thermal diodes]], and 9 high-speed droop detectors. | ||

| + | <div style="text-align: center;">[[File:zen pure power sensory.png|600px]]</div> | ||

| + | |||

| + | === System Management Unit === | ||

| + | {{empty section}} | ||

== Features == | == Features == | ||

| Line 324: | Line 818: | ||

=== SenseMI Technology === | === SenseMI Technology === | ||

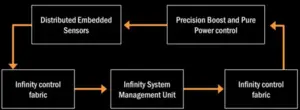

| − | + | '''SenseMI Technology''' (pronounced ''Sense-Em-Eye'') is an umbrella term for a number of features AMD added to Zen microprocessors to increase performance through self-tuning based on a network of sensors: | |

| − | '''SenseMI Technology''' (pronounced ''Sense-Em-Eye'') | ||

[[File:10682-icon-neural-net-prediction-140x140.png|50px|left]] | [[File:10682-icon-neural-net-prediction-140x140.png|50px|left]] | ||

| − | '''Neural Net Prediction''' - This appears to be largely marketing term for Zen's much beefier and more finely | + | '''Neural Net Prediction''' - This appears to be largely a marketing term for Zen's much beefier and more finely tuned [[branch prediction]] unit. Zen uses a [[perceptron branch predictor|hashed perceptron system]] to anticipate future code flow, allowing cold blocks to be warmed up in order to avoid stalls. Most of that functionality is already found in every modern high-end microprocessor (including AMD's own previous microarchitectures). Because AMD has not disclosed any more specific information about the branch predictor, one can only speculate that no groundbreaking new logic was introduced in Zen.
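Since AMD has not published Zen's predictor, the Python sketch below shows only the generic technique: the original perceptron branch predictor of Jiménez and Lin, with weights indexed by branch address and trained on the global outcome history. A hashed perceptron, the variant named above, additionally hashes segments of the history together with the branch address to select weights; that refinement is omitted here. The table size, history length, and weight width are assumed values.

```python
from typing import List, Tuple


class PerceptronPredictor:
    """Textbook perceptron branch predictor (illustrative, not Zen's design)."""

    def __init__(self, history_len: int = 16, table_size: int = 256, weight_bits: int = 8):
        self.table_size = table_size
        self.theta = int(1.93 * history_len + 14)  # training threshold from the paper
        self.w_max = (1 << (weight_bits - 1)) - 1  # saturating signed weights
        self.w_min = -(1 << (weight_bits - 1))
        # One weight vector per table entry: a bias weight plus one per history bit.
        self.weights = [[0] * (history_len + 1) for _ in range(table_size)]
        self.history = [1] * history_len           # +1 = taken, -1 = not taken

    def _output(self, pc: int) -> Tuple[List[int], int]:
        w = self.weights[pc % self.table_size]     # index the table by branch address
        y = w[0] + sum(wi * xi for wi, xi in zip(w[1:], self.history))
        return w, y

    def predict(self, pc: int) -> bool:
        """Predict taken when the perceptron output is non-negative."""
        return self._output(pc)[1] >= 0

    def update(self, pc: int, taken: bool) -> None:
        """Train on the actual outcome, then shift it into the global history."""
        w, y = self._output(pc)
        t = 1 if taken else -1
        # Train on a misprediction, or when the output's magnitude is below theta.
        if (y >= 0) != taken or abs(y) <= self.theta:
            w[0] = self._clip(w[0] + t)
            for i, xi in enumerate(self.history, start=1):
                w[i] = self._clip(w[i] + t * xi)
        self.history = [t] + self.history[:-1]

    def _clip(self, value: int) -> int:
        return max(self.w_min, min(self.w_max, value))


# A branch that alternates taken / not taken is learned after a short warm-up.
bp = PerceptronPredictor()
outcomes = [True, False] * 500
hits = 0
for i, taken in enumerate(outcomes):
    if i >= 200 and bp.predict(0x400) == taken:
        hits += 1
    bp.update(0x400, taken)
print(hits / (len(outcomes) - 200))
```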

{{clear}} | {{clear}} | ||

[[File:10682-icon-smart-prefetch-140x140.png|50px|left]] | [[File:10682-icon-smart-prefetch-140x140.png|50px|left]] | ||

| − | '''Smart Prefetch''' - As with the Prediction Unit, this too appears to be a marketing term for the number of changes AMD introduced in the fetch stage where the the branch predictor can get a hit on the next µOP and retrieve it via the µOPs cache directly to the µOPs Queue, eliminating the costly decode pipeline stages. | + | '''Smart Prefetch''' - As with the Prediction Unit, this too appears to be a marketing term, here for the changes AMD introduced in the fetch stage, where the branch predictor can get a hit on the next µOP and deliver it from the µOP cache directly to the µOP queue, bypassing the costly decode pipeline stages. Additionally, Zen can detect various data access patterns during program execution and predict future data requests, allowing data to be prefetched ahead of time to reduce latency.
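AMD has not described how Zen's data prefetchers detect patterns. As a generic illustration of the idea, the Python sketch below implements a classic per-instruction stride prefetcher: it remembers the last address and stride seen by each load instruction and, once the same stride repeats, requests the next few addresses ahead of time. The class, its parameters (`degree`, `confirm`), and the 64-byte stride in the usage example are assumptions.

```python
from typing import Dict, List, Optional, Tuple


class StridePrefetcher:
    """Generic per-load stride prefetcher (illustrative, not Zen's design)."""

    def __init__(self, degree: int = 2, confirm: int = 2) -> None:
        self.degree = degree    # how many future addresses to request
        self.confirm = confirm  # repeats of a stride needed before prefetching
        # load PC -> (last address, last stride, confidence)
        self.table: Dict[int, Tuple[Optional[int], int, int]] = {}

    def access(self, pc: int, addr: int) -> List[int]:
        """Record a demand load and return the addresses to prefetch."""
        last_addr, stride, conf = self.table.get(pc, (None, 0, 0))
        prefetches: List[int] = []
        if last_addr is not None:
            new_stride = addr - last_addr
            if new_stride == stride and new_stride != 0:
                conf = min(conf + 1, self.confirm)  # same stride again: gain confidence
            else:
                stride, conf = new_stride, 0        # pattern changed: start over
            if conf >= self.confirm:
                prefetches = [addr + stride * k for k in range(1, self.degree + 1)]
        self.table[pc] = (addr, stride, conf)
        return prefetches


pf = StridePrefetcher()
for addr in (0, 64, 128, 192, 256):  # a load walking an array in 64-byte steps
    print(addr, pf.access(0x10, addr))
# 0 []
# 64 []
# 128 []
# 192 [256, 320]
# 256 [320, 384]
```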

{{clear}} | {{clear}} | ||

[[File:10682-icon-pure-power-140x140.png|50px|left]] | [[File:10682-icon-pure-power-140x140.png|50px|left]] | ||

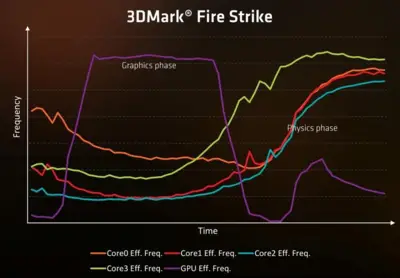

| − | '''Pure Power''' - A feature in Zen that allows for [[dynamic voltage and frequency scaling]] (DVFS), similar to AMD's {{amd|PowerTune}} technology, along with a number of other enhancements that extends beyond the core to the Infinity Fabric (AMD's new proprietary interconnect). Pure Power monitors the | + | [[File:zen pure power loop.png|right|300px]] |

| + | '''Pure Power''' - A feature in Zen that allows for [[dynamic voltage and frequency scaling]] (DVFS), similar to AMD's {{amd|PowerTune}} technology or {{amd|Cool'n'Quiet}}, along with a number of other enhancements that extend beyond the core to the Infinity Fabric (AMD's new proprietary interconnect). Pure Power monitors the state of the processor (e.g., workload), which in turn allows it to downclock when not under load in order to save power. Zen incorporates a network of sensors across the entire chip to aid Pure Power in its monitoring. | ||

{{clear}} | {{clear}} | ||

[[File:10682-icon-precision-boost-140x140.png|50px|left]] | [[File:10682-icon-precision-boost-140x140.png|50px|left]] | ||

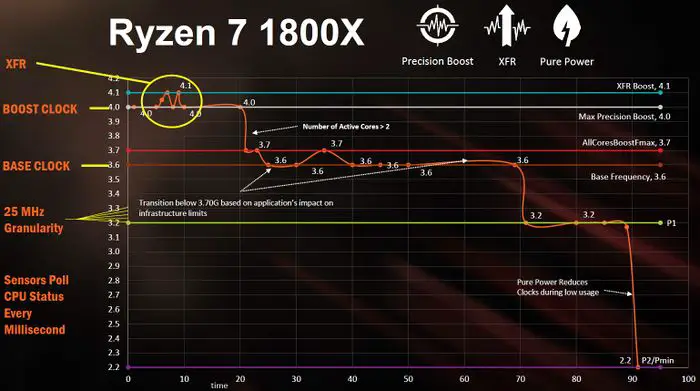

| − | '''Precision Boost''' - A feature that provides the ability to adjust the frequency of the processor on-the-fly given sufficient headroom (e.g. thermal limits based on the sensory data collected by | + | '''{{amd|Precision Boost}}''' - A feature that adjusts the processor's frequency on the fly when there is sufficient headroom (e.g., thermal headroom, as determined from data collected by a network of sensors across the chip), i.e., "Turbo Frequency". Precision Boost adjusts the frequency in 25 MHz increments. With Zen-based APUs, AMD introduced '''{{amd|Precision Boost 2}}''', an enhancement of the original PB feature that uses a new algorithm to control the boost frequency on a per-thread basis depending on the available headroom.

{{clear}} | {{clear}} | ||

| + | [[File:amd zen xfr.jpg|300px|right]] | ||

[[File:10682-icon-frequency-range-140x140.png|50px|left]] | [[File:10682-icon-frequency-range-140x140.png|50px|left]] | ||

| − | '''Extended Frequency Range''' ('''XFR''') - This is a fully automated solution that attempts to allow higher upper limit on the maximum frequency based on the cooling technique used (e.g. air, water, LN2). Whenever the chip senses that it's suitable enough for a given frequency, it will attempt to increase that limit further. | + | '''{{amd|XFR|Extended Frequency Range}}''' ('''XFR''') - A fully automated feature that attempts to raise the upper limit on the maximum frequency based on the cooling solution used (e.g., air, water, LN2). Whenever the chip senses that conditions are suitable for a given frequency, it attempts to raise that limit further. XFR is partially enabled on all models, providing an extra 50 MHz boost whenever possible. On 'X' models, full XFR is enabled, doubling the headroom to up to 100 MHz. With Zen-based APUs, AMD introduced '''{{amd|Mobile XFR}}''' (mXFR), which allows mobile devices with premium cooling to sustain a higher boost frequency for longer periods of time.

| + | {{clear}} | ||

| + | The AMD presentation slide on the right depicts a typical use case for the {{amd|Ryzen 7}} {{amd|Ryzen 7/1800X|1800X}}. Under a normal workload, the processor operates at around its base frequency of 3.6 GHz. Under a heavier workload, Precision Boost kicks in and raises the frequency as necessary, up to the maximum boost frequency of 4 GHz. With adequate cooling, {{amd|XFR}} bumps it up an additional 100 MHz. This boost is sustainable with up to two active cores; beyond that, the boost frequency drops to the "all core" frequency. Under a light workload, the processor reduces its frequency. Because Pure Power senses the workload and CPU state, it can also drastically downclock the CPU when appropriate (as in the graph's mostly idle periods). | ||

| + | <div style="text-align: center;">[[File:ryzen-xfr-1800x example.jpg|700px]]</div> | ||
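The boost behaviour described for the 1800X can be summarized as a toy Python function. It encodes only the rules stated above: XFR adds up to 50 MHz, or 100 MHz on 'X' models, when cooling allows, and the full boost is sustained with up to two active cores. The function name is hypothetical, and the all-core frequency is passed in as an assumed value because this section does not state it.

```python
def boost_ceiling_mhz(active_cores: int, *, max_boost_mhz: int, all_core_mhz: int,
                      x_model: bool, xfr_cooling_ok: bool, boost_cores: int = 2) -> int:
    """Toy model of the highest clock allowed by Precision Boost plus XFR.

    With up to `boost_cores` active cores the chip may reach its maximum boost,
    plus XFR headroom (+100 MHz on 'X' models, +50 MHz otherwise) when cooling
    allows. With more active cores it falls back to the all-core frequency.
    """
    if active_cores > boost_cores:
        return all_core_mhz
    xfr_mhz = (100 if x_model else 50) if xfr_cooling_ok else 0
    return max_boost_mhz + xfr_mhz


# Ryzen 7 1800X figures from the text; the 3700 MHz all-core value is an assumption.
r7_1800x = dict(max_boost_mhz=4000, all_core_mhz=3700, x_model=True)
print(boost_ceiling_mhz(1, xfr_cooling_ok=True, **r7_1800x))   # 4100
print(boost_ceiling_mhz(2, xfr_cooling_ok=False, **r7_1800x))  # 4000
print(boost_ceiling_mhz(8, xfr_cooling_ok=True, **r7_1800x))   # 3700
```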

{{clear}} | {{clear}} | ||

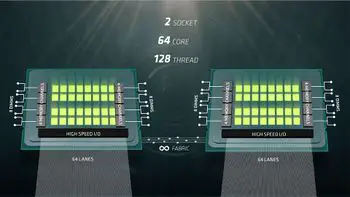

== Scalability == | == Scalability == | ||

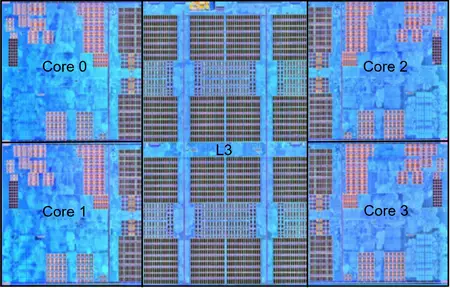

=== CPU Complex (CCX) === | === CPU Complex (CCX) === | ||