Instruction Set Architecture

- Instructions

- Addressing Modes

- Calling Convention

- Microarchitectures

- Model-Specific Register

- CPUID

- Assembly

- Interrupts

- Registers

- Micro-Ops

- Timer

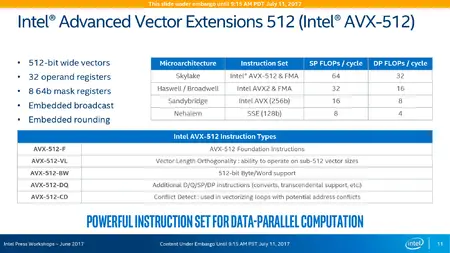

Advanced Vector Extensions 512 (AVX-512) is the collective name for a number of 512-bit SIMD x86 instruction set extensions. The extensions were formally introduced by Intel in July 2013, and the first general-purpose microprocessors implementing them followed in July 2017.

Overview

AVX-512 is a set of 512-bit SIMD extensions that allow programs to pack sixteen single-precision or eight double-precision floating-point numbers, or eight 64-bit or sixteen 32-bit integers, within 512-bit vectors. These vectors are twice as wide as those of AVX/AVX2, doubling the number of elements processed per instruction.

- AVX512F - AVX-512 Foundation

  - Base of the 512-bit SIMD instruction extensions, which provides a comprehensive set of features for most HPC and enterprise applications. AVX-512 Foundation is the natural extension of AVX/AVX2, encoded using the EVEX prefix, which builds on the existing VEX prefix. Any processor that implements any portion of the AVX-512 extensions MUST implement AVX512F.

- AVX512CD - AVX-512 Conflict Detection Instructions

  - Enables vectorization of loops with possible address conflict.

- AVX512PF - AVX-512 Prefetch Instructions

  - Adds new prefetch instructions matching the gather/scatter instructions introduced by AVX512F.

- AVX512ER - AVX-512 Exponential and Reciprocal Instructions (ERI)

  - Doubles the precision of the RCP, RSQRT and EXP2 approximation instructions from 14 to 28 bits.

- AVX512BW - AVX-512 Byte and Word Instructions

  - Adds new and supplemental 8-bit and 16-bit integer instructions, mostly promoting legacy AVX and AVX-512 instructions.

- AVX512DQ - AVX-512 Doubleword and Quadword Instructions

  - Adds new and supplemental 32-bit and 64-bit integer and floating point instructions.

- AVX512VL - AVX-512 Vector Length

  - Adds vector length orthogonality. This is not an extension so much as a feature flag indicating that the supported AVX-512 instructions operating on 512-bit vectors can also operate on 128- and 256-bit vectors like SSE and AVX instructions.

- AVX512_IFMA - AVX-512 Integer Fused Multiply-Add

  - Fused multiply-add of 52-bit integers.

- AVX512_VBMI - AVX-512 Vector Bit Manipulation Instructions

  - Byte permutation instructions, including one shifting unaligned bytes.

- AVX512_VBMI2 - AVX-512 Vector Bit Manipulation Instructions 2

  - Bytewise compress/expand instructions and bitwise funnel shifts.

- AVX512_BITALG - AVX-512 Bit Algorithms

  - Parallel population count in bytes or words and a bit shuffle instruction.

- AVX512_VPOPCNTDQ - AVX-512 Vector Population Count Doubleword and Quadword

  - Parallel population count in doublewords or quadwords.

- AVX512_4FMAPS - AVX-512 Fused Multiply-Accumulate Packed Single Precision

  - Floating-point single precision multiply-accumulate, four iterations, instructions for deep learning.

- AVX512_4VNNIW - AVX-512 Vector Neural Network Instructions Word Variable Precision

  - 16-bit integer multiply-accumulate, four iterations, instructions for deep learning.

- AVX512_FP16 - AVX-512 FP16 Instructions

  - Adds support for 16-bit half precision floating point values, promoting nearly all floating point instructions introduced by AVX512F.

- AVX512_BF16 - AVX-512 BFloat16 Instructions

  - BFloat16 multiply-add and conversion instructions for deep learning.

- AVX512_VNNI - AVX-512 Vector Neural Network Instructions

  - 8- and 16-bit multiply-add instructions for deep learning.

- AVX512_VP2INTERSECT - AVX-512 Intersect Instructions

  - ...

The following extensions add instructions in AVX-512, AVX, and (in the case of GFNI) SSE versions and may be available on x86 CPUs without AVX-512 or even AVX support.

- VAES - Vector AES Instructions

  - Parallel AES decoding and encoding instructions. Expands the earlier AESNI extension, which adds SSE and AVX versions of these instructions operating on 128-bit vectors, with support for 256- and 512-bit vectors and AVX-512 features.

- VPCLMULQDQ - Vector Carry-Less Multiplication of Quadwords

  - Expands the earlier PCLMULQDQ extension, which adds SSE and AVX instructions performing this operation on 128-bit vectors, with support for 256- and 512-bit vectors and AVX-512 features.

- GFNI - Galois Field New Instructions

  - Adds Galois Field transformation instructions.

Note that:

- Formerly, the term AVX3.1 referred to F + CD + ER + PF.

- Formerly, the term AVX3.2 referred to F + CD + BW + DQ + VL.

Registers

AVX-512 defines 32 512-bit vector registers ZMM0 ... ZMM31. They are shared by integer and floating point vector instructions and can contain 64 bytes, 32 16-bit words, 16 doublewords, or 8 quadwords, or 32 half precision, 16 single precision, or 8 double precision IEEE 754 floating point values, or 32 BFloat16 values. Whether integer values are interpreted as signed or unsigned depends on the instruction. As x86 is a little-endian architecture, elements are numbered from zero beginning at the least significant byte of the register, and vectors are stored in memory LSB to MSB regardless of vector size and element type. Some instructions group elements in lanes. A pair of single precision values in the second 64-bit lane, for instance, refers to bits 64 ... 95 and 96 ... 127 of the register, again counting from the least significant bit.
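The element numbering and lane layout described above can be illustrated with a small scalar model. This is only a sketch: it represents a 512-bit register as a Python integer, and the helper names (`pack_elements`, `element`, `to_memory`) are hypothetical and not part of any real API.

```python
# Scalar model of a 512-bit register as a Python integer (illustration only;
# this is not how hardware or any library represents vector registers).
ZMM_BITS = 512

def pack_elements(values, width):
    """Pack unsigned values into one integer; element 0 lands in the lowest bits."""
    assert len(values) * width <= ZMM_BITS
    reg = 0
    for i, v in enumerate(values):
        reg |= (v & ((1 << width) - 1)) << (i * width)
    return reg

def element(reg, index, width):
    """Read element `index` of the given bit width, counting from the LSB."""
    return (reg >> (index * width)) & ((1 << width) - 1)

def to_memory(reg, nbytes=ZMM_BITS // 8):
    """Byte image of the register as it would be stored in memory (little-endian)."""
    return reg.to_bytes(nbytes, "little")

# Sixteen 32-bit elements with values 0..15 fill the register completely.
zmm = pack_elements(list(range(16)), 32)

# The second 64-bit lane (bits 64..127) holds 32-bit elements 2 and 3,
# i.e. bits 64..95 and 96..127 of the register.
second_lane = (zmm >> 64) & ((1 << 64) - 1)
pair = (element(second_lane, 0, 32), element(second_lane, 1, 32))
```

In this model `pair` evaluates to `(2, 3)`, and the first bytes of `to_memory(zmm)` belong to element 0, matching the LSB-first storage order.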

The earlier AVX extension, which supports only 128- and 256-bit vectors, similarly defines 16 256-bit vector registers YMM0 ... YMM15. These are aliased to the lower half of the registers ZMM0 ... ZMM15. The still earlier SSE extension defines 16 128-bit vector registers XMM0 ... XMM15. These are aliased to the lowest quarter of the registers ZMM0 ... ZMM15. That means AVX-512 instructions can access the results of AVX and SSE instructions and to some degree vice versa. MMX instructions, the oldest vector instructions on x86 processors, use different registers. The full set of registers is only available in x86-64 mode. In other modes SSE, AVX, and AVX-512 instructions can only access the first eight registers.

AVX-512 instructions generally operate on 512-bit vectors. Often variants operating on 128- and 256-bit vectors are also available, and sometimes only a 128-bit variant. Shorter vectors are stored towards the LSB of the vector registers. Accordingly, in assembler code the vector size is implied by the register names XMM, YMM, and ZMM. AVX-512 instructions can access 32 registers under each of these names.

Use of 128- and 256-bit vectors can reduce memory traffic and improve performance on implementations that complete 512-bit instructions as two or even four internal operations. This also explains why many horizontal operations are confined to 128-bit lanes. Such implementations may be motivated by a reduction of execution resources due to die size or complexity constraints, or by performance optimizations in which execution resources are dynamically disabled when power or thermal limits are reached, instead of downclocking the CPU core, which would also slow down non-critical instructions. If AVX-512 features are not needed, shorter equivalent AVX instructions may also improve instruction cache utilization.

Write masking

Most AVX-512 instructions support write masking. That means they can write individual elements in the destination vector unconditionally, leave them unchanged, or zero them if the corresponding bit in a mask register supplied as an additional source operand is zero. The masking mode is encoded in the instruction opcode. AVX-512 defines 8 64-bit mask registers K0 ... K7. Masks can vary in width from 1 to 64 bits depending on the instruction (e.g. some operating on a single vector element) and the number of elements in the vector. They are likewise stored towards the LSB of the mask registers.
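The three write behaviours described above (unconditional write, leaving an element unchanged, and zeroing it) can be sketched with a scalar model. The function `masked_op` and its parameters are hypothetical names chosen for this illustration; vectors are modelled as Python lists and the mask as an integer whose bit i controls element i.

```python
def masked_op(dest, src1, src2, mask, op, zeroing=False):
    """Apply `op` element-wise. Elements whose mask bit is 0 keep the old
    destination value (merge masking) or become 0 (zero masking)."""
    result = []
    for i, (d, a, b) in enumerate(zip(dest, src1, src2)):
        if (mask >> i) & 1:
            result.append(op(a, b))   # mask bit set: write the new result
        elif zeroing:
            result.append(0)          # mask bit clear, zero masking
        else:
            result.append(d)          # mask bit clear, merge masking
    return result

dest = [100, 101, 102, 103]
a = [1, 2, 3, 4]
b = [10, 20, 30, 40]

def add(x, y):
    return x + y

merged = masked_op(dest, a, b, 0b0101, add)                 # [11, 101, 33, 103]
zeroed = masked_op(dest, a, b, 0b0101, add, zeroing=True)   # [11, 0, 33, 0]
unmasked = masked_op(dest, a, b, 0b1111, add)               # [11, 22, 33, 44]
```

An all-ones mask in this model corresponds to an unmasked instruction: every element is written.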

One application of write masking is predicated instructions. In vectorized code, conditional operations on individual elements are not possible. A solution is to form predicates by comparing elements and to execute the conditional operations regardless of outcome, but to skip writes for results where the predicate is negative. This was already possible with MMX compare and bitwise logical instructions. Write masking improves code density by blending results as a side effect. Another application is operations on arrays which do not match the vector sizes supported by AVX-512 or which are inconveniently aligned.
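Both applications can be sketched in a scalar model, assuming vectors are Python lists and masks are integers with one bit per element. The helpers `compare_gt`, `masked_sub`, and `tail_mask` are hypothetical names for this illustration, not instructions or library functions.

```python
def compare_gt(a, b):
    """Build a mask with bit i set where a[i] > b[i] (one bit per element)."""
    mask = 0
    for i, (x, y) in enumerate(zip(a, b)):
        if x > y:
            mask |= 1 << i
    return mask

def masked_sub(dest, a, b, mask):
    """Subtract element-wise, keeping dest[i] where mask bit i is 0."""
    return [x - y if (mask >> i) & 1 else d
            for i, (d, x, y) in enumerate(zip(dest, a, b))]

# Scalar loop being vectorized:  if a[i] > b[i]: a[i] -= b[i]
a = [5, 1, 9, 2]
b = [3, 4, 2, 2]
predicate = compare_gt(a, b)            # 0b0101
a = masked_sub(a, a, b, predicate)      # [2, 1, 7, 2]

def tail_mask(remaining, lanes):
    """Mask enabling only the first `remaining` of `lanes` elements, for the
    last, partially filled vector of an array."""
    return (1 << min(remaining, lanes)) - 1
```

For an array of 19 doublewords processed 16 at a time, `tail_mask(3, 16)` would enable only the three valid elements of the final vector.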

Memory fault suppression

AVX-512 supports memory fault suppression. A memory fault occurs, for instance, if a vector loaded from memory crosses a page boundary and one of the pages is unreadable because no physical memory was allocated to that virtual address. Programmers and compilers can avoid this by aligning vectors, but a vectorized function that receives pointers as parameters cannot rely on alignment and would otherwise need code handling these edge cases at runtime. With memory fault suppression it can simply mask out the bytes it is not supposed to load from or store to memory, and no exceptions will be generated for those bytes.
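The effect can be modelled in a few lines. In this sketch, which is only an analogy, memory is a dictionary of mapped byte addresses, `PageFault` stands in for the hardware exception, and `masked_load` is a hypothetical helper that never touches masked-out addresses.

```python
class PageFault(Exception):
    """Stands in for the hardware exception raised on an unmapped access."""

def read_byte(memory, address):
    """`memory` maps mapped addresses to byte values; anything else faults."""
    if address not in memory:
        raise PageFault(hex(address))
    return memory[address]

def masked_load(memory, base, mask, lanes=64):
    """Load `lanes` bytes starting at `base`. Masked-out bytes are never read
    and are returned as 0 (zero masking)."""
    return [read_byte(memory, base + i) if (mask >> i) & 1 else 0
            for i in range(lanes)]

# Only addresses 0x0FF0..0x0FFF are mapped; the next page (0x1000 onward) is not.
memory = {0x0FF0 + i: i for i in range(16)}

mask = (1 << 16) - 1                     # enable only the 16 mapped bytes
vector = masked_load(memory, 0x0FF0, mask)

try:
    masked_load(memory, 0x0FF0, (1 << 64) - 1)   # unmasked: touches 0x1000
    faulted = False
except PageFault:
    faulted = True
```

The masked load succeeds even though the 64-byte vector nominally extends into the unmapped page, while the unmasked load of the same address faults.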

Integer instructions

Common aspects:

- Parallel operations are performed on the corresponding elements of the destination and source operands.

- A "word" is 16 bits wide, a "doubleword" 32 bits, a "quadword" 64 bits.

- Signed saturation: $\min(\max(-2^{W-1}, x),\ 2^{W-1} - 1)$,

- Unsigned saturation: $\min(\max(0, x),\ 2^{W} - 1)$, where W is the destination data type width in bits.

- Except as noted the destination operand and the first of two source operands is a vector register.

- If the destination is a vector register and the vector size is less than 512 bits AVX and AVX-512 instructions zero the unused higher bits to avoid a dependency on earlier instructions writing those bits. If the destination is a mask register unused higher mask bits due to the vector and element size are cleared.

- Except as noted the second or only source operand can be

- a vector register,

- a vector in memory,

- or a single element in memory broadcast to all elements in the vector. The broadcast option is limited to AVX-512 instructions operating on doublewords or quadwords.

- Some instructions use an immediate value as an additional operand, a byte which is part of the opcode.
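The saturation rules in the list above, together with plain truncation, can be written out as a short scalar sketch. The function names are chosen for this illustration only; `width` corresponds to W, the destination data type width in bits.

```python
def saturate_signed(x, width):
    """Clamp x to the signed range [-2^(width-1), 2^(width-1) - 1]."""
    lo = -(1 << (width - 1))
    hi = (1 << (width - 1)) - 1
    return min(max(lo, x), hi)

def saturate_unsigned(x, width):
    """Clamp x to the unsigned range [0, 2^width - 1]."""
    return min(max(0, x), (1 << width) - 1)

def truncate(x, width):
    """Wrap-around result: keep only the low `width` bits."""
    return x & ((1 << width) - 1)

# Adding the bytes 200 and 100 gives 300, which does not fit in 8 bits.
wrapped = truncate(200 + 100, 8)             # 44
clamped = saturate_unsigned(200 + 100, 8)    # 255
```

For signed bytes, `saturate_signed(100 + 100, 8)` yields 127 and `saturate_signed(-100 - 100, 8)` yields -128.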

The table below lists all AVX-512 instructions operating on integer values. The columns on the right show the x86 extension which introduced the instruction, broken down by instruction encoding and supported vector size in bits. For brevity mnemonics are abbreviated: "(V)PMULLW" means PMULLW (MMX/SSE variant) and VPMULLW (AVX/AVX-512). "P(MIN/MAX)(SD/UD)" means PMINSD, PMINUD, PMAXSD, and PMAXUD, instructions computing the minimum or maximum of signed or unsigned doublewords.

| Instruction | MMX (64) | SSE (128) | AVX (128) | AVX (256) | AVX-512 (128) | AVX-512 (256) | AVX-512 (512) |
|---|---|---|---|---|---|---|---|

(V)P(ADD/SUB)(/S/US)(B/W)

|

MMX | SSE2 | AVX | AVX2 | BW+VL | BW+VL | BW |

(V)PADD(D/Q)

|

MMX | SSE2 | AVX | AVX2 | F+VL | F+VL | F |

(V)PSUB(D/Q)

|

SSE2 | SSE2 | AVX | AVX2 | F+VL | F+VL | F |

| Parallel addition or subtraction (source1 - source2) of bytes, words, doublewords, or quadwords. The result is truncated (), or stored with signed (S) or unsigned saturation (US). | |||||||

(V)P(MIN/MAX)(SB/UW)

|

- | SSE4_1 | AVX | AVX2 | BW+VL | BW+VL | BW |

(V)P(MIN/MAX)(UB/SW)

|

SSE | SSE2 | AVX | AVX2 | BW+VL | BW+VL | BW |

(V)P(MIN/MAX)(SD/UD)

|

- | SSE4_1 | AVX | AVX2 | F+VL | F+VL | F |

(V)P(MIN/MAX)(SQ/UQ)

|

- | - | - | - | F+VL | F+VL | F |

| Parallel minimum or maximum of signed or unsigned bytes, words, doublewords, or quadwords. | |||||||

(V)PABS(B/W)

|

SSSE3 | SSSE3 | AVX | AVX2 | BW+VL | BW+VL | BW |

(V)PABSD

|

SSSE3 | SSSE3 | AVX | AVX2 | F+VL | F+VL | F |

VPABSQ

|

- | - | - | - | F+VL | F+VL | F |

| Parallel absolute value of signed bytes, words, doublewords, or quadwords. | |||||||

(V)PMULLW

|

MMX | SSE2 | AVX | AVX2 | BW+VL | BW+VL | BW |

(V)PMULLD, (V)PMULDQ

|

- | SSE4_1 | AVX | AVX2 | F+VL | F+VL | F |

(V)PMULLQ

|

- | - | - | - | DQ+VL | DQ+VL | DQ |

Parallel multiplication of unsigned words (PMULLW), signed doublewords (PMULDQ), unsigned doublewords (PMULLD), or unsigned quadwords (PMULLQ). The instructions store the lower half of the product in the corresponding words, doublewords, or quadwords of the destination vector.

| |||||||

(V)PMULHW

|

MMX | SSE2 | AVX | AVX2 | BW+VL | BW+VL | BW |

(V)PMULHUW

|

SSE | SSE2 | AVX | AVX2 | BW+VL | BW+VL | BW |

(V)PMULHRSW

|

SSSE3 | SSSE3 | AVX | AVX2 | BW+VL | BW+VL | BW |

Parallel multiplication of signed words (PMULHW, PMULHRSW) or unsigned words (PMULHUW). PMULHW and PMULHUW store the upper half of the 32-bit product in the corresponding words of the destination vector. The PMULHRSW instruction scales and rounds the product, storing bits 30 ... 15 of the rounded result:

$\text{dest} = (\text{source1} \times \text{source2} + 2^{14}) \gg 15$ | |||||||

(V)PMULUDQ

|

SSE2 | SSE2 | AVX | AVX2 | F+VL | F+VL | F |

| Parallel multiplication of unsigned doublewords. The instruction reads only the doublewords in the lower half of each 64-bit lane of the source operands and stores the 64-bit quadword product in the same lane of the destination vector. | |||||||

(V)PMADDWD

|

MMX | SSE2 | AVX | AVX2 | BW+VL | BW+VL | BW |

| Multiplies the corresponding signed words of the source operands, adds the 32-bit products from the even and odd lanes, and stores the results in the doublewords of the destination vector. The results overflow (wrap around to $-2^{31}$) if all four inputs are -32768. Broadcasting is not supported. | |||||||

(V)PMADDUBSW

|

- | SSSE3 | AVX | AVX2 | BW+VL | BW+VL | BW |

| Multiplies the unsigned bytes in the first source operand with the corresponding signed bytes in the second source operand, adds the 16-bit products from the even and odd lanes, and stores the results with signed saturation in the 16-bit words of the destination vector. | |||||||

VPMADD52(L/H)UQ

|

- | - | AVX-IFMA | AVX-IFMA | IFMA+VL | IFMA+VL | IFMA |

| Parallel multiply-accumulate. The instructions multiply the 52 least significant bits in corresponding unsigned quadwords of the source operands, then add the lower (L) or upper (H) half of the 104-bit product, zero-extended from 52 to 64 bits, to the corresponding quadword of the destination vector. | |||||||

VP4DPWSSD(/S)

|

- | - | - | - | - | - | 4VNNIW |

|

Dot product of signed 16-bit words, accumulated in doublewords, four iterations. These instructions use two 512-bit vector operands. The first one is a vector register, the second one is obtained by reading a 128-bit vector from memory and broadcasting it to the four 128-bit lanes of a 512-bit vector. The instructions multiply the 32 corresponding signed words in the source operands, then add the signed 32-bit products from the even lanes, odd lanes, and the 16 signed doublewords in the 512-bit destination vector register and store the sums in the destination. Finally the instructions increment the number of the source register by one modulo four, and repeat these operations three more times, reading four vector registers total in a 4-aligned block, e.g. ZMM12 ... ZMM15. Exceptions can occur in each iteration. Write masking is supported.

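VP4DPWSSDS stores the sums with signed saturation: $\text{dest} = \min(\max(-2^{31},\ \text{even} + \text{odd} + \text{dest}),\ 2^{31} - 1)$. The VNNI equivalent performing a single iteration is VPDPWSSD(/S). | |||||||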

VPDPBUSD(/S)

|

- | - | AVX-VNNI | AVX-VNNI | VNNI+VL | VNNI+VL | VNNI |

| ... | |||||||

VPDPWSSD(/S)

|

- | - | AVX-VNNI | AVX-VNNI | VNNI+VL | VNNI+VL | VNNI |

Dot product of signed words, accumulated in doublewords. The instructions multiply the corresponding signed words of the source operands, add the signed 32-bit products from the even lanes, odd lanes, and the signed doublewords of the destination vector and store the sums in the destination. VPDPWSSDS performs the same operation except the 33-bit intermediate sum is stored with signed saturation:

$\text{dest} = \min(\max(-2^{31},\ \text{even} + \text{odd} + \text{dest}),\ 2^{31} - 1)$

| |||||||

(V)PCMP(EQ/GT)(B/W)

|

MMX | SSE2 | AVX | AVX2 | BW+VL | BW+VL | BW |

(V)PCMP(EQ/GT)D

|

MMX | SSE2 | AVX | AVX2 | F+VL | F+VL | F |

(V)PCMPEQQ

|

- | SSE4_1 | AVX | AVX2 | F+VL | F+VL | F |

(V)PCMPGTQ

|

- | SSE4_2 | AVX | AVX2 | F+VL | F+VL | F |

| Parallel compare with predicate equal or greater-than of the signed bytes, words, doublewords, or quadwords in the first and second source operand. The MMX, SSE, and AVX versions store the result, -1 = true or 0 = false, in the corresponding elements of the destination, a vector register. These can be used as masks in bitwise logical operations to emulate predicated vector instructions. The AVX-512 versions set the corresponding bits in the destination mask register to 1 = true or 0 = false. | |||||||

VPCMP(B/UB/W/UW)

|

- | - | - | - | BW+VL | BW+VL | BW |

VPCMP(D/UD/Q/UQ)

|

- | - | - | - | F+VL | F+VL | F |

| Parallel compare operation of the signed or unsigned bytes, words, doublewords, or quadwords in the first and second source operand. One of 8 operations (EQ, LT, LE, F, NEQ, NLT, NLE, T) is selected by an immediate byte. The instructions set the corresponding bits in the destination mask register to 1 = true or 0 = false. The instructions support write masking which performs a bitwise 'and' on the destination using a second mask register. | |||||||

VPTESTM(B/W), VPTESTNM(B/W)

|

- | - | - | - | BW+VL | BW+VL | BW |

VPTESTM(D/Q), VPTESTNM(D/Q)

|

- | - | - | - | F+VL | F+VL | F |

| These instructions perform a parallel bitwise 'and' on the bytes, words, doublewords, or quadwords of the source operands, then set each bit in the destination mask register to 1 if the corresponding result is non-zero (TESTM) or zero (TESTNM), and to 0 otherwise. | |||||||

(V)P(AND/ANDN/OR/XOR)(/D/Q)

|

MMX | SSE2 | AVX | AVX2 | F+VL | F+VL | F |

Parallel bitwise logical operations. The ANDN operation is (not source1) and source2. The AVX-512 versions operate on doublewords or quadwords, the distinction is necessary because these instructions support write masking which observes the number of elements in the vector.

| |||||||

VPTERNLOG(D/Q)

|

- | - | - | - | F+VL | F+VL | F |

| Parallel bitwise ternary logical operations on doublewords or quadwords. These instructions concatenate the corresponding bits of the destination, first, and second source operand into a 3-bit index which selects a bit from an 8-bit lookup table provided by an immediate byte, and store it in the corresponding bit of the destination. In other words they can perform any bitwise boolean operation with up to three inputs. The data type distinction is necessary because these instructions support write masking which observes the number of elements in the vector. | |||||||

VPOPCNT(B/W)

|

- | - | - | - | BITALG+VL | BITALG+VL | BITALG |

VPOPCNT(D/Q)

|

- | - | - | - | VPOPC+VL | VPOPC+VL | VPOPC |

| Parallel count of the number of set bits in bytes, words, doublewords, or quadwords. | |||||||

VPLZCNT(D/Q)

|

- | - | - | - | CD+VL | CD+VL | CD |

| Parallel count of the number of leading (most significant) zero bits in doublewords or quadwords. | |||||||

(V)PS(LL/RA/RL)W

|

MMX | SSE2 | AVX | AVX2 | BW+VL | BW+VL | BW |

(V)PS(LL/RA/RL)(D/Q)

|

MMX | SSE2 | AVX | AVX2 | F+VL | F+VL | F |

| Parallel bitwise left shift (PSLL) or logical (unsigned) right shift (PSRL) or arithmetic (signed) right shift (PSRA) of the words, doublewords, or quadwords in the first or only source operand. The amount can be a constant provided by an immediate byte, or a single value provided by a second source operand, specifically the lowest quadword of a vector register or a 128-bit vector in memory. If the shift amount is greater than or equal to the element size in bits the destination element is zeroed (PSLL, PSRL) or set to -1 or 0 (PSRA). Broadcasting is supported by the constant amount AVX-512 instruction variants operating on doublewords or quadwords. | |||||||

VPS(LL/RA/RL)VW

|

- | - | - | - | BW+VL | BW+VL | BW |

VPS(LL/RL)V(D/Q)

|

- | - | AVX2 | AVX2 | F+VL | F+VL | F |

VPSRAVD

|

- | - | AVX2 | AVX2 | F+VL | F+VL | F |

VPSRAVQ

|

- | - | - | - | F+VL | F+VL | F |

| Parallel bitwise left shift (VPSLL) or logical (unsigned) right shift (VPSRL) or arithmetic (signed) right shift (VPSRA) of the words, doublewords, or quadwords in the first source operand by a per-element variable amount taken from the corresponding element of the second source operand. If the shift amount is greater than or equal to the element size in bits the destination element is zeroed (VPSLL, VPSRL) or set to -1 or 0 (VPSRA). | |||||||

VPRO(L/R)(/V)(D/Q)

|

- | - | - | - | F+VL | F+VL | F |

| Parallel bitwise rotate left or right of the doublewords or quadwords in the first or only source operand by a constant () or per-element variable number of bits (V) modulo element width in bits. The instructions take a constant amount from an immediate byte, a variable amount from the corresponding element of a second source operand. | |||||||

VPSH(L/R)D(W/D/Q)

|

- | - | - | - | VBMI2+VL | VBMI2+VL | VBMI2 |

| Parallel bitwise funnel shift left or right by a constant amount. The instructions concatenate each word, doubleword, or quadword of the first and second source operand, perform a bitwise logical left or right shift by a constant amount modulo element width in bits taken from an immediate byte, and store the upper (L) or lower (R) half of the result in the corresponding elements of the destination vector. | |||||||

VPSH(L/R)DV(W/D/Q)

|

- | - | - | - | VBMI2+VL | VBMI2+VL | VBMI2 |

| Parallel bitwise funnel shift left or right by a per-element variable amount. The instructions concatenate each word, doubleword, or quadword of the destination and first source operand, perform a bitwise logical left or right shift by a variable amount modulo element width in bits taken from the corresponding element of the second source operand, and store the upper (L) or lower (R) half of the result in the corresponding elements of the destination vector. | |||||||

VPMULTISHIFTQB

|

- | - | - | - | VBMI+VL | VBMI+VL | VBMI |

|

Copies 8 consecutive bits from the second source operand into each byte of the destination vector using bit indices. For each destination byte the instruction obtains an index from the corresponding byte of the first source operand. The operation is confined to 64-bit quadwords so the indices can only address bits in the same 64-bit lane as the index and destination byte. The instruction increments the index for each bit modulo 64. In other words the operation for each destination byte is: dest.byte[i] = bitwise_rotate_right(source2.quadword[i / 8], source1.byte[i] and 63) and 255 The destination and first source operand is a vector register. The second source operand can be a vector register, a vector in memory, or one quadword broadcast to all 64-bit lanes of the vector. Write masking is supported with quadword granularity. | |||||||

(V)PS(LL/RL)DQ

|

- | SSE2 | AVX | AVX2 | BW+VL | BW+VL | BW |

| Parallel bytewise shift left or right of double quadwords (128-bit lanes) by a constant amount, shifting in zero bytes. The amount is taken from an immediate byte. If it is greater than 15 the instructions zero the destination element. Broadcasting and write masking are not supported. | |||||||

(V)PALIGNR

|

SSSE3 | SSSE3 | AVX | AVX2 | BW+VL | BW+VL | BW |

| Parallel bytewise funnel shift right by a constant amount, shifting in zero bytes. The instruction concatenates the data in corresponding 128-bit lanes of the first and second source operand, shifts the 256-bit intermediate values right by a constant amount times 8 and stores the lower half of the results in the corresponding 128-bit lanes of the destination. The amount is taken from an immediate byte. Broadcasting is not supported. Write masking is supported with byte granularity. | |||||||

VALIGN(D/Q)

|

- | - | - | - | F+VL | F+VL | F |

Element-wise funnel shift right by a constant amount, shifting in zeroed doublewords or quadwords. The instruction concatenates the first and second source operand, performs a bitwise logical right shift by a constant amount times 32 (VALIGND) or 64 (VALIGNQ), and stores the lower half of the vector in the destination vector register. The amount, modulo number of elements in the destination vector, is taken from an immediate byte.

| |||||||

(V)AESDEC, (V)AESDECLAST, (V)AESENC, (V)AESENCLAST

|

- | AESNI | AESNI+AVX | VAES | VAES+VL | VAES+VL | VAES+F |

| Performs one round, or the last round, of an AES decryption or encryption flow. ... Broadcasting and write masking is not supported. | |||||||

(V)PCLMULQDQ

|

- | PCLM | PCLM+AVX | VPCLM | VPCLM+VL | VPCLM+VL | VPCLM+F |

| Carry-less multiplication of quadwords. ... Broadcasting and write masking is not supported. | |||||||

GF2P8AFFINEQB

|

- | GFNI | GFNI+AVX | GFNI+AVX | GFNI+VL | GFNI+VL | GFNI+F |

| ... | |||||||

GF2P8AFFINEINVQB

|

- | GFNI | GFNI+AVX | GFNI+AVX | GFNI+VL | GFNI+VL | GFNI+F |

| ... | |||||||

GF2P8MULB

|

- | GFNI | GFNI+AVX | GFNI+AVX | GFNI+VL | GFNI+VL | GFNI+F |

| ... | |||||||

(V)PAVG(B/W)

|

SSE | SSE2 | AVX | AVX2 | BW+VL | BW+VL | BW |

| Parallel average of unsigned bytes or words with rounding:

dest = (source1 + source2 + 1) >> 1 | |||||||

(V)PSADBW

|

SSE | SSE2 | AVX | AVX2 | BW+VL | BW+VL | BW |

| Computes the absolute difference of the corresponding unsigned bytes in the source operands, adds the eight results from each 64-bit lane and stores the sum in the corresponding 64-bit quadword of the destination vector. Write masking is not supported. | |||||||

VDBPSADBW

|

- | - | - | - | BW+VL | BW+VL | BW |

| ... | |||||||

VPMOV(B/W)2M

|

- | - | - | - | BW+VL | BW+VL | BW |

VPMOV(D/Q)2M

|

- | - | - | - | DQ+VL | DQ+VL | DQ |

| These instructions set the bits in a mask register, copying the most significant bit in the corresponding byte, word, doubleword, or quadword of the source vector in a vector register. | |||||||

VPMOVM2(B/W)

|

- | - | - | - | BW+VL | BW+VL | BW |

VPMOVM2(D/Q)

|

- | - | - | - | DQ+VL | DQ+VL | DQ |

| These instructions set the bits in each byte, word, doubleword, or quadword of the destination vector in a vector register to all ones or zeros, copying the corresponding bit in a mask register. | |||||||

VPMOV(/S/US)WB

|

- | - | - | - | BW+VL | BW+VL | BW |

VPMOV(/S/US)(DB/DW/QB/QW/QD)

|

- | - | - | - | F+VL | F+VL | F |

| Parallel down conversion of words, doublewords, or quadwords to bytes, words, or doublewords. The instructions truncate () the input or convert with signed (S) or unsigned saturation (US). The destination operand is a vector register or vector in memory. In the former case the instructions zero unused higher elements. The source operand is a vector register. | |||||||

(V)MOV(SX/ZX)BW

|

- | SSE4_1 | AVX | AVX2 | BW+VL | BW+VL | BW |

(V)MOV(SX/ZX)(BD/BQ/WD/WQ/DQ)

|

- | SSE4_1 | AVX | AVX2 | F+VL | F+VL | F |

| Parallel sign or zero extend of bytes, words, or doublewords to words, doublewords, or quadwords. The BW, WD, and DQ instruction variants read only the lower half of the source operand, the BD, WQ variants the lowest quarter, and the BQ variant the lowest one-eighth. The source operand can be a vector register or a vector in memory of the size above. Broadcasting is not supported. | |||||||

(V)MOVDQU, (V)MOVDQA

|

- | SSE2 | AVX | AVX | - | - | - |

VMOVDQU(8/16)

|

- | - | - | - | BW+VL | BW+VL | BW |

VMOVDQU(32/64), VMOVDQA(32/64)

|

- | - | - | - | F+VL | F+VL | F |

These instructions copy a vector of 8-bit bytes, 16-bit words, 32-bit doublewords, or 64-bit quadwords between vector registers, or from a vector register to memory or vice versa. The data type distinction is necessary because the AVX-512 versions of these instructions support write masking which observes the number of elements in the vector. For the MOVDQA ("move aligned") versions the memory address must be a multiple of the vector size in bytes or an exception is generated. Broadcasting is not supported.

| |||||||

(V)MOVNTDQA

|

- | SSE4_1 | AVX | AVX2 | F+VL | F+VL | F |

| Loads a vector from memory into a vector register with a non-temporal hint i.e. it is not beneficial to load the data into the cache hierarchy. The memory address must be a multiple of the vector size in bytes or an exception is generated. Broadcasting and write masking is not supported. | |||||||

(V)MOVNTDQ

|

- | SSE2 | AVX | AVX | F+VL | F+VL | F |

| Stores a vector from a vector register in memory with non-temporal hint i.e. it is not beneficial to perform a write-allocate and/or insert the data in the cache hierarchy. Write masking is not supported. | |||||||

(V)MOV(D/Q)

|

MMX | SSE2 | AVX | - | F | - | - |

| Copies a doubleword or quadword, the lowest element in a vector register, to a general purpose register or vice versa. If the destination is a vector register the instructions zero the higher elements of the vector. If the element is a doubleword and the destination a 64-bit GPR they zero the upper half. Write masking is not supported. | |||||||

VMOVW

|

- | - | - | - | FP16 | - | - |

| Copies a 16-bit word, the lowest element in a vector register, to a general purpose register or vice versa. Unused higher bits in the destination register are zeroed. Write masking is not supported. | |||||||

VPSCATTER(D/Q)(D/Q)

|

- | - | - | - | F+VL | F+VL | F |

| ... | |||||||

VPGATHER(D/Q)(D/Q)

|

- | - | - | - | F+VL | F+VL | F |

| ... | |||||||

(V)PACKSSWB, (V)PACKSSDW, (V)PACKUSWB

|

MMX | SSE2 | AVX | AVX2 | BW+VL | BW+VL | BW |

(V)PACKUSDW

|

- | SSE4_1 | AVX | AVX2 | BW+VL | BW+VL | BW |

| These instructions pack the signed words (WB) or doublewords (DW) in the source operands into the bytes or words, respectively, of the destination vector with signed (SS) or unsigned saturation (US). They interleave the results, packing data from the first source operand into the even 64-bit lanes of the destination and data from the second source operand into the odd lanes. Broadcasting is not supported by the WB variants. | |||||||

(V)PUNPCK(L/H)(BW/WD)

|

MMX | SSE2 | AVX | AVX2 | BW+VL | BW+VL | BW |

(V)PUNPCK(L/H)DQ

|

MMX | SSE2 | AVX | AVX2 | F+VL | F+VL | F |

(V)PUNPCK(L/H)QDQ

|

- | SSE2 | AVX | AVX2 | F+VL | F+VL | F |

These instructions interleave the bytes, words, doublewords, or quadwords of the first and second source operand. In order to fit the data into the destination vector PUNPCKL reads only the elements in the even 64-bit lanes, PUNPCKH only those in the odd 64-bit lanes of the source operands. Broadcasting is not supported by the BW and WD variants.

| |||||||

(V)PEXTRB

|

- | SSE4_1 | AVX | - | BW | - | - |

(V)PEXTRW

|

SSE | SSE2/SSE4_1 | AVX | - | BW | - | - |

(V)PEXTR(D/Q)

|

- | SSE4_1 | AVX | - | DQ | - | - |

These instructions extract a byte, word, doubleword, or quadword using a constant index to select an element from the lowest 128-bit lane of a source vector register and store it in a general purpose register or in memory. A memory destination is not supported by the MMX version of PEXTRW and by the SSE version introduced by the SSE2 extension. The index is provided by an immediate byte. If the destination register is wider than the element the instructions zero the unused higher bits.

| |||||||

VEXTRACTI32X4

|

- | - | - | - | - | F+VL | F |

VEXTRACTI32X8

|

- | - | - | - | - | - | DQ |

VEXTRACTI64X2

|

- | - | - | - | - | DQ+VL | DQ |

VEXTRACTI64X4

|

- | - | - | - | - | - | F |

VEXTRACTI128

|

- | - | - | AVX2 | - | - | - |

| These instructions extract four (I32X4) or eight (I32X8) doublewords, or two (I64X2) or four (I64X4) quadwords, or one block of 128 bits (I128), from a lane of that width (e.g. 128 bits for I32X4) of the source operand selected by a constant index and store the data in memory, or in the lowest lane of a vector register. Higher lanes of the destination register are zeroed. The index is provided by an immediate byte. Broadcasting is not supported. | |||||||

VPINSRB

|

- | SSE4_1 | AVX | - | BW | - | - |

VPINSRW

|

SSE | SSE2 | AVX | - | BW | - | - |

VPINSR(D/Q)

|

- | SSE4_1 | AVX | - | DQ | - | - |

| Inserts a byte, word, doubleword, or quadword taken from the lowest bits of a general purpose register or from memory, into the lowest 128-bit lane of the destination vector register using a constant index to select the element. The index is provided by an immediate byte. Write masking is not supported. | |||||||

VINSERTI32X4

|

- | - | - | - | - | F+VL | F |

VINSERTI32X8

|

- | - | - | - | - | - | DQ |

VINSERTI64X2

|

- | - | - | - | - | DQ+VL | DQ |

VINSERTI64X4

|

- | - | - | - | - | - | F |

VINSERTI128

|

- | - | - | AVX2 | - | - | - |

| These instructions insert four (I32X4) or eight (I32X8) doublewords, or two (I64X2) or four (I64X4) quadwords, or one block of 128 bits (I128), in a lane of that width (e.g. 128 bits for I32X4) of the destination vector selected by a constant index provided by an immediate byte. They load this data from memory, or the lowest lane of a vector register specified by the second source operand, and data for the remaining lanes of the destination vector from the corresponding lanes of the first source operand, a vector register. Broadcasting is not supported. | |||||||

(V)PSHUFB

|

SSSE3 | SSSE3 | AVX | AVX2 | BW+VL | BW+VL | BW |

| Shuffles the bytes of the first source operand using variable indices. For each byte of the destination vector the instruction obtains a source index modulo 16 from the corresponding byte of the second source operand. If the most significant bit of the index byte is set the instruction zeros the destination byte instead. The indices can only address a source byte in the same 128-bit lane as the destination byte. | |||||||
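The byte shuffle can be modelled in a few lines of Python. This is a sketch under the assumption that vectors are lists of byte values with element 0 lowest; `pshufb` here is a hypothetical helper, not a library function.

```python
def pshufb(src, control):
    """Model of a byte shuffle with variable indices.

    src and control are equally long lists of byte values (0..255);
    the length is a multiple of 16 (one 128-bit lane).
    """
    dest = []
    for pos, index_byte in enumerate(control):
        lane_base = pos - pos % 16  # first byte of the 128-bit lane holding pos
        if index_byte & 0x80:
            # Most significant bit set: zero the destination byte.
            dest.append(0)
        else:
            # Index modulo 16 selects a byte within the same lane.
            dest.append(src[lane_base + (index_byte & 0x0F)])
    return dest
```

Reversing the bytes of each lane, for instance, uses the control bytes 15, 14, ..., 0 repeated for every lane.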

(V)PSHUF(L/H)W

|

- | SSE2 | AVX | AVX2 | BW+VL | BW+VL | BW |

These instructions shuffle the words of the source operand using constant indices. For each element of the destination vector, a 2-bit index selects an element in the same 64-bit lane of the source operand. For each 64-bit lane the same four indices are taken from an immediate byte. The PSHUFLW instruction shuffles only the even 64-bit lanes and copies the remaining lanes from the source operand unchanged; PSHUFHW shuffles only the odd lanes.

| |||||||

(V)PSHUFD

|

- | SSE2 | AVX | AVX2 | F+VL | F+VL | F |

| Shuffles the doublewords of the source operand using constant indices. For each element of the destination vector, a 2-bit index selects an element in the same 128-bit lane of the source operand. For each 128-bit lane the same four indices are taken from an immediate byte. | |||||||
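How the immediate byte encodes the four indices can be sketched as follows, assuming a vector is a list of doublewords with element 0 lowest. The function name `pshufd` is used here only for illustration.

```python
def pshufd(src, imm8):
    """Model of a doubleword shuffle with constant indices.

    Bits 1:0 of imm8 select the source for destination element 0 of each
    128-bit lane, bits 3:2 for element 1, and so on.
    """
    indices = [(imm8 >> (2 * i)) & 3 for i in range(4)]
    dest = []
    for lane in range(0, len(src), 4):  # four doublewords per 128-bit lane
        dest += [src[lane + index] for index in indices]
    return dest
```

The immediate `0x1B` (binary 00 01 10 11) reverses the doublewords in every lane, while `0x00` broadcasts the lowest doubleword of each lane.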

VSHUFI32X4

|

- | - | - | - | - | F+VL | F |

VSHUFI64X2

|

- | - | - | - | - | F+VL | F |

| Shuffles a 128-bit wide group of four doublewords or two quadwords of the source operands using constant indices. For each 128-bit destination lane an index selects one 128-bit lane of the source operands. The indices for the lower half of the destination lanes can only address lanes in the first source operand, those for the upper half only lanes in the second source operand. Instruction variants operating on 256-bit vectors use two 1-bit indices, those operating on 512-bit vectors four 2-bit indices, always taken from an immediate byte. | |||||||

VPSHUFBITQMB

|

- | - | - | - | BITALG+VL | BITALG+VL | BITALG |

| Shuffles the bits in the first source operand, a vector register, using bit indices. For each bit of the 16/32/64-bit mask in a destination mask register, the instruction obtains a source index modulo 64 from the corresponding byte in a second source operand, a 128/256/512-bit vector in a vector register or in memory. The operation is confined to quadwords so the indices can only select a bit from the same 64-bit lane where the index byte resides. For instance bytes 8 ... 15 can address bits 64 ... 127. The instruction supports write masking which means it optionally performs a bitwise 'and' on the destination using a second mask register. | |||||||
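A small Python model of the bit shuffle, assuming the data vector is given as a list of 64-bit integers and the index vector as a list of bytes (eight per quadword). The function and parameter names are hypothetical.

```python
def vpshufbitqmb(data_qwords, index_bytes, writemask=None):
    """Gather one bit per index byte into a mask.

    Index byte number pos belongs to quadword pos // 8 and can only
    select a bit of that same quadword (index modulo 64).
    """
    mask = 0
    for pos, index in enumerate(index_bytes):
        qword = data_qwords[pos // 8]
        bit = (qword >> (index & 63)) & 1
        mask |= bit << pos
    if writemask is not None:
        mask &= writemask  # write masking acts as a bitwise 'and'
    return mask
```

For a 128-bit vector this produces a 16-bit mask, for a 512-bit vector a 64-bit mask.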

VPERMB

|

- | - | - | - | VBMI+VL | VBMI+VL | VBMI |

VPERM(W/D)

|

- | - | - | - | F+VL | F+VL | F |

| Permutes the bytes, words, or doublewords of the second source operand using element indices. For each element of the destination vector the instruction obtains a source index, modulo vector size in elements, from the corresponding element of the first source operand. | |||||||

VPERMQ

|

- | - | - | AVX2 | - | F+VL | F |

| Permutes the quadwords of a source operand within a 256-bit lane using constant or variable source element indices. For each destination element the constant index instruction variant obtains a 2-bit index from an immediate byte, and uses the same four indices in each 256-bit lane. The destination operand is a vector register. The source operand can be a vector register, a vector in memory, or one quadword in memory broadcast to all elements of the vector.

The variable index variant permutes the elements of a second source operand. For each destination element it obtains a 2-bit source index from the corresponding quadword of the first source operand. The destination and first source operand is a vector register. The second source operand can be a vector register, a vector in memory, or one quadword in memory broadcast to all elements of the vector. | |||||||

VPERM(I/T)2B

|

- | - | - | - | VBMI+VL | VBMI+VL | VBMI |

VPERM(I/T)2W

|

- | - | - | - | BW+VL | BW+VL | BW |

VPERM(I/T)2(D/Q)

|

- | - | - | - | F+VL | F+VL | F |

| The "I" instruction variant concatenates the second and first source operand and permutes their elements using element indices. For each element of the destination vector it obtains a source index, modulo twice the vector size in elements, from the byte, word, doubleword, or quadword in this lane and overwrites it. The "T" variant concatenates the second source and destination operand, and obtains the source indices from the first source operand. In other words the instructions perform the same operation, one overwriting the indices, the other one half of the data table. The destination and first source operand is a vector register. The second source operand can be a vector register, a vector in memory, or a single value broadcast to all elements of the vector if the element is 32 or 64 bits wide. | |||||||
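The difference between the two variants is only which operand the destination register replaces. A minimal Python sketch, assuming vectors are lists with element 0 lowest and with hypothetical function names:

```python
def permute2(table_low, table_high, indices):
    """Select elements from the concatenation of two tables."""
    table = table_low + table_high
    # The index is taken modulo twice the vector size in elements.
    return [table[index % len(table)] for index in indices]


def vpermi2(dest_indices, src1, src2):
    """"I" variant: the destination holds the indices and is overwritten."""
    return permute2(src1, src2, dest_indices)


def vpermt2(dest_table, src1_indices, src2):
    """"T" variant: the destination holds one half of the table and is overwritten."""
    return permute2(dest_table, src2, src1_indices)
```

Both functions return the new contents of the destination register; with the same table halves and indices they yield identical results.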

VPCOMPRESS(B/W)

|

- | - | - | - | VBMI2+VL | VBMI2+VL | VBMI2 |

VPCOMPRESS(D/Q)

|

- | - | - | - | F+VL | F+VL | F |

| These instructions copy bytes, words, doublewords, or quadwords from a vector register to memory or another vector register. They copy each element in the source vector if the corresponding bit in a mask register is set, and only then increment the memory address or destination register element number for the next store. Remaining elements if the destination is a register are left unchanged or zeroed depending on the instruction variant. | |||||||
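The compress operation with a register destination can be sketched as follows. The model assumes list-based vectors and an integer mask whose bit i belongs to element i; `compress` is a hypothetical name.

```python
def compress(src, mask, dest, zeroing=False):
    """Pack the selected elements of src contiguously into the low elements of dest."""
    result = [0] * len(dest) if zeroing else list(dest)
    out = 0
    for i, element in enumerate(src):
        if (mask >> i) & 1:
            result[out] = element
            out += 1  # advance only after an element was actually stored
    return result
```

A typical use is filtering: a compare instruction produces the mask, and the compress stores only the elements that passed the test.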

VPEXPAND(B/W)

|

- | - | - | - | VBMI2+VL | VBMI2+VL | VBMI2 |

VPEXPAND(D/Q)

|

- | - | - | - | F+VL | F+VL | F |

| These instructions copy bytes, words, doublewords, or quadwords from memory or a vector register to another vector register. They load each element of the destination register, if the corresponding bit in a mask register is set, from the source and only then increment the memory address or source register element number for the next load. Destination elements where the mask bit is cleared are left unchanged or zeroed depending on the instruction variant. | |||||||
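The expand operation is the inverse of a compress. A minimal Python sketch under the same assumptions (list-based vectors, integer mask with bit i for element i, hypothetical function name):

```python
def expand(src, mask, dest, zeroing=False):
    """Distribute consecutive elements of src to the mask-selected elements of dest."""
    result = []
    next_source = 0
    for i, old_value in enumerate(dest):
        if (mask >> i) & 1:
            result.append(src[next_source])
            next_source += 1  # advance only after an element was actually loaded
        else:
            result.append(0 if zeroing else old_value)
    return result
```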

VPBLENDM(B/W)

|

- | - | - | - | BW+VL | BW+VL | BW |

VPBLENDM(D/Q)

|

- | - | - | - | F+VL | F+VL | F |

| Parallel blend of the bytes, words, doublewords, or quadwords in the source operands. For each element in the destination vector the corresponding bit in a mask register selects the element in the first source operand (0) or second source operand (1). If no mask operand is provided the default is all ones. "Zero-masking" variants of the instructions zero the destination elements where the mask bit is 0. In other words these instructions are equivalent to copying the first source operand to the destination, then the second source operand with regular write masking. | |||||||
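The blend can be modelled as a per-element select. The sketch below assumes list-based vectors and an integer mask with bit i for element i; `blendm` is a hypothetical name.

```python
def blendm(src1, src2, mask=None, zeroing=False):
    """Select src2[i] where mask bit i is 1, otherwise src1[i] (or zero)."""
    if mask is None:
        mask = (1 << len(src1)) - 1  # no mask operand: all ones
    dest = []
    for i, (first, second) in enumerate(zip(src1, src2)):
        if (mask >> i) & 1:
            dest.append(second)
        else:
            dest.append(0 if zeroing else first)
    return dest
```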

VPBROADCAST(B/W) from VR or memory

|

- | - | AVX2 | AVX2 | BW+VL | BW+VL | BW |

VPBROADCAST(B/W) from GPR

|

- | - | - | - | BW+VL | BW+VL | BW |

VPBROADCAST(D/Q) from VR or memory

|

- | - | AVX2 | AVX2 | F+VL | F+VL | F |

VPBROADCAST(D/Q) from GPR

|

- | - | - | - | F+VL | F+VL | F |

VBROADCASTI32X2 from VR or memory

|

- | - | - | - | DQ+VL | DQ+VL | DQ |

VBROADCASTI32X4 from VR or memory

|

- | - | - | - | - | F+VL | F |

VBROADCASTI32X8 from VR or memory

|

- | - | - | - | - | - | DQ |

VBROADCASTI64X2 from VR or memory

|

- | - | - | - | - | DQ+VL | DQ |

VBROADCASTI64X4 from VR or memory

|

- | - | - | - | - | - | F |

VBROADCASTI128 from memory

|

- | - | - | AVX2 | - | - | - |

| These instructions broadcast one byte, or one word, or one (D), two (I32X2), four (I32X4), or eight (I32X8) doublewords, or one (Q), two (I64X2), or four (I64X4) quadwords, or one block of 128 bits (I128), from the lowest lane of that width (e.g. 64 bits for I32X2) of a source vector register, or from memory, or from a general purpose register to all lanes of that width in the destination vector. The AVX-512 instructions support write masking with byte, word, doubleword, or quadword granularity. | |||||||

VPBROADCASTM(B2Q/W2D)

|

- | - | - | - | CD+VL | CD+VL | CD |

| These instructions broadcast the lowest byte or word in a mask register to all doublewords or quadwords of the destination vector in a vector register. Write masking is not supported. | |||||||

VP2INTERSECT(D/Q)

|

- | - | - | - | VP2IN+VL | VP2IN+VL | VP2IN+F |

| ... | |||||||

VPCONFLICT(D/Q)

|

- | - | - | - | CD+VL | CD+VL | CD |

| ... | |||||||

| Total: 310 | |||||||

Floating point instructions

Common aspects:

- Parallel operations are performed on the corresponding elements of the destination and source operands.

- "Packed" instructions with mnemonics ending in PH/PS/PD write all elements of the destination vector. "Scalar" instructions ending in SH/SS/SD write only the lowest element and leave the higher elements unchanged.

- Except as noted the destination operand and the first of two source operands is a vector register.

- If the destination is a vector register and the vector size is less than 512 bits AVX and AVX-512 instructions zero the unused higher bits to avoid a dependency on earlier instructions writing those bits.

- Except as noted the second or only source operand can be

- a vector register,

- a vector in memory for "packed" instructions,

- a single element in memory for "scalar" instructions,

- or a single element in memory broadcast to all elements in the vector for "packed" AVX-512 instructions.

- Some instructions use an immediate value as an additional operand, a byte which is part of the instruction encoding.

Some floating point instructions which merely copy data or perform bitwise logical operations duplicate the functionality of integer instructions. AVX-512 implementations may actually execute vector integer and floating point instructions in separate execution units. Mixing those instructions is not advisable: results are transferred between the units automatically, but the transfer may incur a delay.

The table below lists all AVX-512 instructions operating on floating point values. The columns on the right show the x86 extension which introduced the instruction, broken down by instruction encoding and supported vector size in bits. For brevity "SSE/SSE2" means the single precision variant of the instruction was introduced by the SSE, the double precision variant by the SSE2 extension. Similarly "F/FP16" means the single and double precision variant was introduced by the AVX512F (Foundation) extension, the half precision variant by the AVX512_FP16 extension.

| Instruction | SSE | AVX | AVX-512 | |||||||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 128 | 128 | 256 | 128 | 256 | 512 | |||||||||||||

(V)(ADD/DIV/MAX/MIN/MUL/SUB)(PS/PD)

|

SSE/SSE2 | AVX | AVX | F+VL | F+VL | F | ||||||||||||

(V)(ADD/DIV/MAX/MIN/MUL/SUB)(SS/SD)

|

SSE/SSE2 | AVX | - | F | - | - | ||||||||||||

V(ADD/DIV/MAX/MIN/MUL/SUB)PH

|

- | - | - | FP16+VL | FP16+VL | FP16 | ||||||||||||

V(ADD/DIV/MAX/MIN/MUL/SUB)SH

|

- | - | - | FP16 | - | - | ||||||||||||

| Parallel addition, division (source1 / source2), maximum, minimum, multiplication, or subtraction (source1 - source2), with desired rounding if applicable, of half, single, or double precision values. | ||||||||||||||||||

VRANGE(PS/PD)

|

- | - | - | DQ+VL | DQ+VL | DQ | ||||||||||||

VRANGE(SD/SS)

|

- | - | - | DQ | - | - | ||||||||||||

|

These instructions perform a parallel minimum or maximum operation on single or double precision values, either on their original or absolute values. They optionally change the sign of all results to positive or negative, or copy the sign of the corresponding element in the first source operand. The operation is selected by an immediate byte. A saturation operation like min(max(-limit, value), +limit) for instance can be expressed as minimum of absolute values with sign copying. | ||||||||||||||||||
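The saturation example can be checked with a short Python model. It covers only the one mode mentioned above (minimum of absolute values, sign copied from the first source operand); the immediate encoding and special values such as NaN are not modelled, and the function name is hypothetical.

```python
import math


def range_min_abs_sign_src1(src1, src2):
    """Minimum of absolute values, with the sign taken from src1."""
    return [math.copysign(min(abs(a), abs(b)), a) for a, b in zip(src1, src2)]
```

With `src1` holding the values and `src2` holding the limit in every element, the result equals `min(max(-limit, value), +limit)` element by element.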

VF(/N)(MADD/MSUB)(132/213/231)(PS/PD),VF(MADDSUB/MSUBADD)(132/213/231)(PS/PD)

|

- | FMA | FMA | F+VL | F+VL | F | ||||||||||||

VF(/N)(MADD/MSUB)(132/213/231)(SS/SD)

|

- | FMA | FMA | F | - | - | ||||||||||||

VF(/N)(MADD/MSUB)(132/213/231)PH,VF(MADDSUB/MSUBADD)(132/213/231)PH

|

- | - | - | FP16+VL | FP16+VL | FP16 | ||||||||||||

VF(/N)(MADD/MSUB)(132/213/231)SH

|

- | - | - | FP16 | - | - | ||||||||||||

Parallel fused multiply-add of half, single, or double precision values. These instructions require three source operands, the first source operand is also the destination operand. The numbers in the mnemonic specify the operand order, e.g. 132 means src1 = src1 * src3 + src2. The operations are:

| ||||||||||||||||||
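The operand order digits and the sign conventions of the MADD, MSUB, NMADD, and NMSUB forms can be illustrated with a scalar Python model. The function `fma` and its keyword parameters are hypothetical; the model does not reproduce the single rounding step of a real fused operation, so it is exact only for values such as small integers.

```python
def fma(order, src1, src2, src3, negate_product=False, subtract=False):
    """Scalar model of the fused multiply-add family.

    order is "132", "213" or "231": the first two digits name the
    operands that are multiplied, the third the operand that is added.
    negate_product models the "N" forms, subtract the MSUB forms.
    The caller stores the result in src1, the destination operand.
    """
    operands = {"1": src1, "2": src2, "3": src3}
    a, b, c = (operands[digit] for digit in order)
    product = -(a * b) if negate_product else a * b
    return product - c if subtract else product + c
```

For example `fma("231", x, y, z)` computes `y * z + x`, the form commonly used to accumulate a dot product in the destination register.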

VF(/C)MADDC(PH/SH),VF(/C)MULC(PH/SH)

|

- | - | - | FP16+VL | FP16+VL | FP16 | ||||||||||||

| Parallel complex multiplication of half precision pairs. The instructions multiply corresponding pairs of half precision values in the first and second source operand:

The | ||||||||||||||||||

V4F(/N)MADD(PS/SS)

|

- | - | - | - | - | 4FMAPS | ||||||||||||

|

Parallel fused multiply-accumulate of single precision values, four iterations. In each iteration the instructions source 16 multiplicands from a 512-bit vector register, and one multiplier from memory which is broadcast to all 16 elements of a second vector. They add the 16 products and the 16 values in the corresponding elements of the 512-bit destination register, round the sums as desired, and store them in the destination. Finally the instructions increment the number of the source register by one modulo four, and the memory address by four bytes. Exceptions can occur in each iteration. Write masking is supported. In total these instructions perform 64 multiply-accumulate operations, reading 64 single precision multiplicands from four source registers in a 4-aligned block, e.g. ZMM12 ... ZMM15, four single precision multipliers consecutive in memory, and accumulate 16 single precision results four times, also rounding four times.

The "packed" variants (PS) perform the operations above, the "scalar" variants (SS) yield only a single result in the lowest element of the 128-bit destination vector, leaving the three higher elements unchanged. As usual if the vector size is less than 512 bits the instructions zero the unused higher bits in the destination register to avoid a dependency on earlier instructions writing those bits. In total the "scalar" instructions sequentially perform four multiply-accumulate operations, read a single precision multiplicand from four source registers, four single precision multipliers from memory, and accumulate one single precision result four times in the destination register, also rounding four times. | ||||||||||||||||||

VDPBF16PS

|

- | - | - | BF16+VL | BF16+VL | BF16 | ||||||||||||

| Dot product of BFloat16 values, accumulated in single precision elements. The instruction multiplies the corresponding BF16 values of the source operands, converted to single precision, then adds the products from the even lanes, odd lanes, and the single precision values in the destination operand and stores the sums in the destination. | ||||||||||||||||||
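A simplified Python model of the dot product, assuming BF16 values are passed as 16-bit integers holding the upper half of a single precision bit pattern. Rounding and denormal handling of the real instruction are not modelled, and the function names are hypothetical.

```python
import struct


def bf16_to_float(bits):
    """Widen a BF16 bit pattern to single precision by appending 16 zero bits."""
    return struct.unpack("<f", struct.pack("<I", (bits & 0xFFFF) << 16))[0]


def dpbf16ps(dest, src1, src2):
    """Accumulate pairwise BF16 products into single precision elements.

    dest has n elements, src1 and src2 have 2 * n BF16 elements each.
    """
    result = []
    for i, accumulator in enumerate(dest):
        even = bf16_to_float(src1[2 * i]) * bf16_to_float(src2[2 * i])
        odd = bf16_to_float(src1[2 * i + 1]) * bf16_to_float(src2[2 * i + 1])
        result.append(accumulator + even + odd)
    return result
```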

VRCP14(PS/PD)

|

- | - | - | F+VL | F+VL | F | ||||||||||||

VRCP28(PS/PD)

|

- | - | - | - | - | ER | ||||||||||||

VRCPPH

|

- | - | - | FP16+VL | FP16+VL | FP16 | ||||||||||||

VRCP14(SS/SD)

|

- | - | - | F | - | - | ||||||||||||

VRCP28(SS/SD)

|

- | - | - | ER | - | - | ||||||||||||

VRCPSH

|

- | - | - | FP16 | - | - | ||||||||||||

| Parallel approximate reciprocal of half, single, or double precision values. The maximum relative error is less than $2^{-14}$ or $2^{-28}$ (RCP28), see Intel's reference implementation for exact values.

Multiplication by a reciprocal can be faster than the | ||||||||||||||||||

(V)SQRT(PS/PD)

|

SSE/SSE2 | AVX | AVX | F+VL | F+VL | F | ||||||||||||

VSQRTPH

|

- | - | - | FP16+VL | FP16+VL | FP16 | ||||||||||||

(V)SQRT(SS/SD)

|

SSE/SSE2 | AVX | - | F | - | - | ||||||||||||

VSQRTSH

|

- | - | - | FP16 | - | - | ||||||||||||

| Parallel square root with desired rounding of half, single, or double precision values. | ||||||||||||||||||

VRSQRT14(PS/PD)

|

- | - | - | F+VL | F+VL | F | ||||||||||||

VRSQRT28(PS/PD)

|

- | - | - | - | - | ER | ||||||||||||

VRSQRTPH

|

- | - | - | FP16+VL | FP16+VL | FP16 | ||||||||||||

VRSQRT14(SS/SD)

|

- | - | - | F | - | - | ||||||||||||

VRSQRT28(SS/SD)

|

- | - | - | ER | - | - | ||||||||||||

VRSQRTSH

|

- | - | - | FP16 | - | - | ||||||||||||

| Parallel approximate reciprocal of the square root of half, single, or double precision values. The maximum relative error is less than $2^{-14}$ or $2^{-28}$ (RSQRT28), see Intel's reference implementation for exact values.

Multiplication by a reciprocal square root can be faster than the | ||||||||||||||||||

VEXP2(PS/PD)

|

- | - | - | - | - | ER | ||||||||||||

| Parallel approximation of $2^x$ of single or double precision values. The maximum relative error is less than $2^{-23}$, see Intel's reference implementation for exact values. | ||||||||||||||||||

(V)CMP*(PS/PD)

|

SSE/SSE2 | AVX | AVX | F+VL | F+VL | F | ||||||||||||

(V)CMP*(SS/SD)

|

SSE/SSE2 | AVX | - | F | - | - | ||||||||||||

VCMP*PH

|

- | - | - | FP16+VL | FP16+VL | FP16 | ||||||||||||

VCMP*SH

|

- | - | - | FP16 | - | - | ||||||||||||

| Parallel compare operation of the half, single, or double precision values in the first and second source operand. One of 32 operations (CMPEQ - equal, CMPLT - less than, CMPEQ_UO - equal unordered, ...) is selected by an immediate byte. The results, 1 = true or 0 = false, are stored in the destination.

For AVX-512 versions of the instructions the destination is a mask register with bits corresponding to the source elements. The "scalar" instructions (SH/SS/SD) set only the lowest bit. Unused bits due to the vector and element size are zeroed. The instructions support write masking which performs a bitwise 'and' on the destination using a second mask register. For SSE/AVX versions the negated results (-1 or 0) are stored in a vector of doublewords (PS/SS) or quadwords (PD/SD) corresponding to the source elements. These can be used as masks in bitwise logical operations to emulate predicated vector instructions. | ||||||||||||||||||
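The two result formats can be contrasted in a short Python sketch. The predicate is passed as an ordinary Python callable here; the 32 immediate encodings and exception behaviour are not modelled, and the function names are hypothetical.

```python
import operator


def cmp_to_mask(src1, src2, predicate, writemask=None):
    """AVX-512 style: one result bit per element in a mask register."""
    mask = 0
    for i, (a, b) in enumerate(zip(src1, src2)):
        if predicate(a, b):
            mask |= 1 << i
    if writemask is not None:
        mask &= writemask  # write masking acts as a bitwise 'and'
    return mask


def cmp_to_vector(src1, src2, predicate, element_bits=32):
    """SSE/AVX style: all-ones (-1) or zero in each destination element."""
    all_ones = (1 << element_bits) - 1
    return [all_ones if predicate(a, b) else 0 for a, b in zip(src1, src2)]
```

A comparison involving a NaN is false for the ordered predicates `operator.lt` and `operator.le`, which matches the behaviour of the ordered compare operations.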

(V)(/U)COMI(SS/SD)

|

SSE/SSE2 | AVX | - | F | - | - | ||||||||||||

V(/U)COMISH

|

- | - | - | FP16 | - | - | ||||||||||||

| Compares the half, single, or double precision values in the lowest element of the first and second source operand and stores the result in the ZF (equal), CF (less than), and PF (unordered) flags for use by branch instructions. UCOMI instructions perform an unordered compare and generate an exception only if a source operand is an SNaN; COMI instructions also generate one for QNaNs. Broadcasting and write masking are not supported. | ||||||||||||||||||
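The flag settings for the four possible outcomes can be summarised in a small Python model. It describes only the resulting flags, not the exception behaviour, and `comi_flags` is a hypothetical name.

```python
import math


def comi_flags(a, b):
    """Return the ZF, PF and CF values after comparing a with b."""
    if math.isnan(a) or math.isnan(b):
        return {"ZF": 1, "PF": 1, "CF": 1}  # unordered
    if a > b:
        return {"ZF": 0, "PF": 0, "CF": 0}
    if a < b:
        return {"ZF": 0, "PF": 0, "CF": 1}
    return {"ZF": 1, "PF": 0, "CF": 0}      # equal
```

Because the unordered case sets all three flags, code that branches on "equal" or "below" usually tests PF first when NaN inputs are possible.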

VFPCLASS(PH/PS/PD)

|

- | - | - | DQ/FP16+VL | DQ/FP16+VL | DQ/FP16 | ||||||||||||

VFPCLASS(SH/SS/SD)

|

- | - | - | DQ/FP16 | - | - | ||||||||||||

| These instructions test if the half, single, or double precision values in the source operand belong to certain classes and set the bit corresponding to each element in the destination mask register to 1 = true or 0 = false. The "packed" instructions (PH/PS/PD) operate on all elements, the "scalar" instructions (SH/SS/SD) only on the lowest element and set a single mask bit. Unused higher bits of the 64-bit mask register are cleared. The instructions support write masking which means they optionally perform a bitwise 'and' on the destination using a second mask register. The class is selected by an immediate byte and can be: QNaN, +0, -0, +∞, -∞, denormal, negative, SNaN, or any combination. | ||||||||||||||||||

(V)(AND/ANDN/OR/XOR)(PS/PD)

|

SSE/SSE2 | AVX | AVX | DQ+VL | DQ+VL | DQ | ||||||||||||

Parallel bitwise logical operations on single or double precision values. There are no "scalar" variants operating on a single element. The ANDN operation is (not source1) and source2. The precision distinction is necessary because all these instructions support write masking which observes the number of elements in the vector.

| ||||||||||||||||||

VRNDSCALE(PH/PS/PD)

|

- | - | - | F/FP16+VL | F/FP16+VL | F/FP16 | ||||||||||||

VRNDSCALE(SH/SS/SD)

|

- | - | - | F/FP16 | - | - | ||||||||||||

| Parallel rounding to a given number of fractional bits on half, single, or double precision values. The operation is

$\text{dest} = \operatorname{round}(2^M \cdot \text{source}) \cdot 2^{-M}$ with desired rounding mode and $M$ a constant in range 0 ... 15. | ||||||||||||||||||

VREDUCE(PH/PS/PD)

|

- | - | - | DQ/FP16+VL | DQ/FP16+VL | DQ/FP16 | ||||||||||||

VREDUCE(SH/SS/SD)

|

- | - | - | DQ/FP16 | - | - | ||||||||||||

|

Parallel reduce transformation on half, single, or double precision values. The operation is $\text{dest} = \text{source} - \operatorname{round}(2^M \cdot \text{source}) \cdot 2^{-M}$ with desired rounding mode and $M$ a constant in range 0 ... 15. These instructions can be used to accelerate transcendental functions. | ||||||||||||||||||
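The round-to-scale and reduce operations are complementary: one keeps the value rounded to M fractional bits, the other keeps the remainder. A scalar Python sketch, with hypothetical function names, where the rounding mode is passed as a callable such as `round` (nearest even), `math.floor`, `math.ceil`, or `math.trunc`; special values such as NaN and infinity are not handled:

```python
import math


def rndscale(source, m, rounding=round):
    """Round source to m fractional bits: round(2^m * source) * 2^-m."""
    scale = 2.0 ** m
    return rounding(source * scale) / scale


def reduce_arg(source, m, rounding=round):
    """Remainder left after rounding source to m fractional bits."""
    return source - rndscale(source, m, rounding)
```

With `m = 2` and rounding down, 1.37 splits into 1.25 and a remainder of about 0.12, so the two results always add up to the original value.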

VSCALEF(PH/PS/PD)

|

- | - | - | F/FP16+VL | F/FP16+VL | F/FP16 | ||||||||||||

VSCALEF(SH/SS/SD)

|

- | - | - | F/FP16 | - | - | ||||||||||||

| Parallel scale operation on half, single, or double precision values. The operation is:

$\text{dest} = \text{source1} \cdot 2^{\lfloor \text{source2} \rfloor}$ | ||||||||||||||||||
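For finite inputs the scale operation corresponds to a single `ldexp` call. A scalar Python sketch with a hypothetical function name; NaN, infinity, and denormal handling are not modelled:

```python
import math


def scalef(source1, source2):
    """Multiply source1 by two raised to floor(source2)."""
    return math.ldexp(source1, math.floor(source2))
```

Note that the exponent is rounded down, so `scalef(3.0, -1.5)` multiplies by $2^{-2}$, not by $2^{-1}$.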

VGETEXP(PH/PS/PD)

|

- | - | - | F/FP16+VL | F/FP16+VL | F/FP16 | ||||||||||||

VGETEXP(SH/SS/SD)

|

- | - | - | F/FP16 | - | - | ||||||||||||

| ... | ||||||||||||||||||

VGETMANT(PH/PS/PD)

|

- | - | - | F/FP16+VL | F/FP16+VL | F/FP16 | ||||||||||||

VGETMANT(SH/SS/SD)

|

- | - | - | F/FP16 | - | - | ||||||||||||

| ... | ||||||||||||||||||

VFIXUPIMM(PS/PD)

|

- | - | - | F+VL | F+VL | F | ||||||||||||

VFIXUPIMM(SS/SD)

|

- | - | - | F | - | - | ||||||||||||

| ... | ||||||||||||||||||

(V)CVTPS2PD - (V)CVTPD2PS

|

SSE2 | AVX | AVX | F+VL | F+VL | F | ||||||||||||

(V)CVT(PS/PD)2DQ - (V)CVTDQ2(PS/PD)

|

SSE/SSE2 | AVX | AVX | F+VL | F+VL | F | ||||||||||||

(V)CVTT(PS/PD)2DQ

|

SSE2 | AVX | AVX | F+VL | F+VL | F | ||||||||||||

VCVT(/T)(PS/PD)2UDQ - VCVTUDQ2(PS/PD)

|

- | - | - | F+VL | F+VL | F | ||||||||||||

VCVT(/T)(PS/PD)2(QQ/UQQ) - VCVT(QQ/UQQ)2(PS/PD)

|

- | - | - | DQ+VL | DQ+VL | DQ | ||||||||||||

(V)CVTSD2(SI/SS) - (V)CVT(SI/SS)2SD, (V)CVTTSD2SI

|

SSE2 | AVX | - | F | - | - | ||||||||||||

(V)CVT(/T)SS2SI - (V)CVTSI2SS

|

SSE | AVX | - | F | - | - | ||||||||||||

VCVT(/T)(SS/SD)2USI - VCVTUSI2(SS/SD)

|

- | - | - | F | - | - | ||||||||||||

VCVTPH2PD - VCVTPD2PH

|

- | - | - | FP16+VL | FP16+VL | FP16 | ||||||||||||

VCVTPH2PSX - VCVTPS2PHX

|

- | - | - | FP16+VL | FP16+VL | FP16 | ||||||||||||

VCVTPH2PS - VCVTPS2PH

|

- | F16C | F16C | F+VL | F+VL | F | ||||||||||||

VCVT(/T)PH2(W/UW/DQ/UDQ/QQ/UQQ) - VCVT(W/UW/DQ/UDQ/QQ/UQQ)2PH

|

- | - | - | FP16+VL | FP16+VL | FP16 | ||||||||||||

VCVT(SS/SD)2SH - VCVTSH2(SS/SD)

|

- | - | - | FP16 | - | - | ||||||||||||

VCVT(/T)SH2(SI/USI) - VCVT(SI/USI)2SH

|

- | - | - | FP16 | - | - | ||||||||||||

Parallel conversion with desired rounding. Supported data types are

The destination operand is generally a vector register. The "packed" instructions (PH/PS/PD source or destination) write all elements of the destination vector. If the source and destination data types differ in size, instructions with a 16 bit source or 64 bit destination type leave some higher source elements unconverted. Those with a 16 bit destination or 64 bit source type process all source elements and set excess elements in the destination vector to zero. The "scalar" instructions (SH/SS/SD source or destination) write only the lowest element and leave the higher elements unchanged. Instructions with SI/USI destination store the result in a 32- or 64-bit general purpose register instead. All conversion instructions with a vector destination except The source operand can be a vector register, or for "packed" instructions a vector in memory, or one value in memory broadcast to all elements of the source vector, or for "scalar" instructions with SI/USI source a 32- or 64-bit general purpose register. The broadcast option is not available for | ||||||||||||||||||

VCVT(NE/NE2)PS2BF16

|

- | - | - | BF16+VL | BF16+VL | BF16 | ||||||||||||

Parallel conversion of single precision to BFloat16 values, rounding to nearest even (the "NE" in the mnemonic). The VCVTNEPS2BF16 instruction uses one source operand, writes only to the lower half of the destination vector and zeros the remaining elements. The VCVTNE2PS2BF16 instruction stores converted elements from the first source operand in the upper half, those from the second source operand in the lower half of the destination vector.

| ||||||||||||||||||

(V)MOV(A/U)(PS/PD)

|

SSE/SSE2 | AVX | AVX | F+VL | F+VL | F | ||||||||||||

| Copies a vector of single or double precision values between vector registers or from a vector register to memory or vice versa. The precision distinction is necessary because these instructions support write masking which observes the number of elements in the vector. For the MOVA ("move aligned") variants the memory address must be a multiple of the vector size in bytes or an exception is generated. | ||||||||||||||||||

(V)MOVNT(PS/PD)

|

SSE/SSE2 | AVX | AVX | F+VL | F+VL | F | ||||||||||||

| Stores a vector in memory with a non-temporal hint, i.e. a hint that it is not beneficial to perform a write-allocate and/or insert the data in the cache hierarchy. The source operand is a vector register. Write masking is not supported. | ||||||||||||||||||

(V)MOV(SS/SD)

|

SSE/SSE2 | AVX | - | F | - | - | ||||||||||||

| Copies one single or double precision value between vector registers or from a vector register to memory or vice versa. The instructions read and write the lowest element in a vector. They zero higher elements of the destination vector if the source is memory and leave them unchanged otherwise. Broadcasting is not supported, but write masking is. | ||||||||||||||||||

VMOVSH

|

- | - | - | FP16 | - | - | ||||||||||||

| Parallel copy of half precision values between vector registers, or from a vector register to memory or vice versa. The reg-reg variant copies the lowest element from a second source operand, the higher elements from the first source operand. The memory load variant writes the lowest element in the destination vector and leaves the higher elements unchanged. The memory store variant stores the lowest element of the source operand in memory. | ||||||||||||||||||

VGATHER(D/Q)(PS/PD)

|

- | - | - | F+VL | F+VL | F | ||||||||||||

| ... | ||||||||||||||||||

VGATHERPF(0/1)(D/Q)(PS/PD)

|

- | - | - | PF | PF | PF | ||||||||||||

| ... | ||||||||||||||||||

VSCATTER(D/Q)(PS/PD)

|

- | - | - | F+VL | F+VL | F | ||||||||||||

| ... | ||||||||||||||||||

VSCATTERPF(0/1)(D/Q)(PS/PD)

|

- | - | - | PF | PF | PF | ||||||||||||

| ... | ||||||||||||||||||

(V)MOVDDUP

|

SSE3 | AVX | AVX | F+VL | F+VL | F | ||||||||||||

| Copies the double precision values in the even lanes of the source operand to the even and odd lanes of the destination operand. Broadcasting is not supported. | ||||||||||||||||||

(V)MOVS(L/H)DUP

|

SSE3 | AVX | AVX | F+VL | F+VL | F | ||||||||||||

| Copies the single precision values in the even (MOVSLDUP) or odd lanes (MOVSHDUP) of the source operand to the even and odd lanes of the destination operand. Broadcasting is not supported. | ||||||||||||||||||

(V)UNPCK(L/H)(PS/PD)

|

SSE/SSE2 | AVX | AVX | F+VL | F+VL | F | ||||||||||||

These instructions interleave the single or double precision values of the first and second source operand. In order to fit the data into the destination vector, UNPCKL reads only the elements in the even 64-bit lanes, UNPCKH only those in the odd 64-bit lanes of the source operands.

| ||||||||||||||||||

(V)MOV(L/H/HL/LH)(PS/PD)

|

SSE/SSE2 | AVX | - | F | - | - | ||||||||||||

These instructions copy a 64-bit pair of single precision values (PS) or one 64-bit double precision value (PD). If the source operand is a vector register the MOVL and MOVLH instructions copy the lower half, MOVH and MOVHL the upper half of the 128-bit source vector. If the destination operand is a vector register they copy the data to both halves. For MOVLH and MOVHL both operands are vector registers. For MOVL and MOVH the source or destination is memory. Broadcasting and write masking are not supported.

| ||||||||||||||||||

(V)EXTRACTPS

|

SSE4_1 | AVX | - | F | - | - | ||||||||||||

| Extracts a single precision value using a constant index to select an element of the source operand and stores it in a general purpose register or in memory. If the destination is a 64-bit register the instruction zeros the upper half. | ||||||||||||||||||

VEXTRACTF32X4

|

- | - | - | - | F+VL | F | ||||||||||||

VEXTRACTF32X8

|

- | - | - | - | - | DQ | ||||||||||||

VEXTRACTF64X2

|

- | - | - | - | DQ+VL | DQ | ||||||||||||

VEXTRACTF64X4

|

- | - | - | - | - | F | ||||||||||||

VEXTRACTF128

|

- | - | AVX | - | - | - | ||||||||||||

| These instructions extract four (F32X4) or eight (F32X8) single precision values, or two (F64X2) or four (F64X4) double precision values, or one block of 128 bits (F128), from a lane of that width (e.g. 128 bits for F32X4) of the source operand selected by a constant index and store the data in memory, or in the lowest lane of a vector register. Higher lanes of the destination register are zeroed. The index is provided by an immediate byte. Broadcasting is not supported. | ||||||||||||||||||

(V)INSERTPS

|

SSE4_1 | AVX | - | F | - | - | ||||||||||||

| Inserts a single precision value using two indices to select an element in the source operand and the destination operand. Additionally zeros the elements in the destination where the corresponding bit in a 4-bit mask is set. The indices and the mask are constants in an immediate byte. Broadcasting and AVX-512 write masking are not supported. | ||||||||||||||||||

VINSERTF32X4

|

- | - | - | - | F+VL | F | ||||||||||||

VINSERTF32X8

|

- | - | - | - | - | DQ | ||||||||||||

VINSERTF64X2

|

- | - | - | - | DQ+VL | DQ | ||||||||||||

VINSERTF64X4

|

- | - | - | - | - | F | ||||||||||||

VINSERTF128

|

- | - | AVX | - | - | - | ||||||||||||

| These instructions insert four (F32X4) or eight (F32X8) single precision values, or two (F64X2) or four (F64X4) double precision values, or one block of 128 bits (F128), in a lane of that width (e.g. 128 bits for F32X4) of the destination vector selected by a constant index provided by an immediate byte. They load this data from memory, or the lowest lane of a vector register specified by the second source operand, and data for the remaining lanes of the destination vector from the corresponding lanes of the first source operand, a vector register. Broadcasting is not supported. | ||||||||||||||||||

(V)SHUFPS

|

SSE | AVX | AVX | F+VL | F+VL | F | ||||||||||||

| Shuffles the single precision values of the source operands using constant indices. For each element of the destination vector, a 2-bit index selects an element in the same 128-bit lane of the source operands. The indices for even 64-bit destination lanes can only address elements in the first source operand, those for odd lanes only elements in the second source operand. For each 128-bit lane the same four indices are taken from an immediate byte. | ||||||||||||||||||

(V)SHUFPD

|

SSE2 | AVX | AVX | F+VL | F+VL | F | ||||||||||||

| Shuffles the double precision values of the source operands using constant indices. For each element of the destination vector, a 1-bit index selects an element in the same 128-bit lane of the source operands. The indices for even 64-bit destination lanes can only address elements in the first source operand, those for odd lanes only elements in the second source operand. The indices are taken from an immediate byte. | ||||||||||||||||||

VSHUFF32X4

|

- | - | - | - | F+VL | F | ||||||||||||

VSHUFF64X2

|

- | - | - | - | F+VL | F | ||||||||||||

| Shuffles a 128-bit wide group of four single precision or two double precision values of the source operands using constant indices. For each 128-bit destination lane an index selects one 128-bit lane of the source operands. The indices for even destination lanes can only address lanes in the first source operand, those for odd destination lanes only lanes in the second source operand. Instruction variants operating on 256-bit vectors use two 1-bit indices, those operating on 512-bit vectors four 2-bit indices, always taken from an immediate byte. | ||||||||||||||||||

VPERM(PS/PD)

|

- | - | AVX2 | - | F+VL | F | ||||||||||||

Permutes the single or double precision values of the source operand within a 256-bit lane using constant or variable source element indices. For each destination element the VPERMPD instruction with constant indices obtains a 2-bit index from an immediate byte and uses the same four indices in each 256-bit lane. VPERMPS does not support constant indices.

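The variable index variants permute the elements of a second source operand. For each destination element they obtain a source index from the low bits of the corresponding doubleword or quadword of the first source operand: 3 bits (PS) or 2 bits (PD) for 256-bit vectors, and 4 bits (PS) or 3 bits (PD) for 512-bit vectors, where the index can select any element of the source vector. | ||||||||||||||||||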

VPERM(I/T)2(PS/PD)

|

- | - | - | F+VL | F+VL | F | ||||||||||||

| The "I" instruction variant concatenates the second and first source operand and permutes their elements using element indices. For each element of the destination vector it obtains a source index, modulo twice the vector size in elements, from the corresponding doubleword or quadword of the destination register and overwrites it with the selected element. The "T" variant concatenates the second source and destination operand, and obtains the source indices from the first source operand. In other words the instructions perform the same operation, one overwriting the indices, the other one half of the data table. The destination and first source operand are vector registers. The second source operand can be a vector register, a vector in memory, or a single value broadcast to all elements of the vector. | ||||||||||||||||||

VPERMIL(PS/PD)

|

- | AVX | AVX | F+VL | F+VL | F | ||||||||||||

Permutes the single or double precision values of the first source operand within a 128-bit lane using constant or variable source element indices. For each destination element the VPERMILPD instruction with constant indices obtains a 1-bit index from an immediate byte. The VPERMILPS instruction selects elements with a 2-bit index from an immediate byte and uses the same four indices for each 128-bit lane of the destination. The destination is a vector register. The source can be a vector register or a vector in memory.

For each destination element the variable index variants obtain a source index from the lowest two bits (PS) or the second lowest bit (PD) of the corresponding doubleword or quadword of a second source operand. The first source operand is a vector register. The second source operand can be a vector register, a vector in memory, or one doubleword or quadword in memory broadcast to all elements of the vector. | ||||||||||||||||||

VCOMPRESS(PS/PD)

|

- | - | - | F+VL | F+VL | F | ||||||||||||

| These instructions copy single or double precision values from a vector register to memory or another vector register. They copy each element in the source vector if the corresponding bit in a mask register is set, and only then increment the memory address or destination register element number for the next store. If the destination is a register, the remaining elements are left unchanged or zeroed, depending on the instruction variant. | ||||||||||||||||||

VEXPAND(PS/PD)

|

- | - | - | F+VL | F+VL | F | ||||||||||||

| These instructions copy single or double precision values from memory or a vector register to another vector register. They load each element of the destination register, if the corresponding bit in a mask register is set, from the source and only then increment the memory address or source register element number for the next load. Destination elements where the mask bit is cleared are left unchanged or zeroed depending on the instruction variant. | ||||||||||||||||||

VBLENDM(PS/PD)

|

- | - | - | F+VL | F+VL | F | ||||||||||||

| Parallel blend of the single or double precision values in the source operands. For each element in the destination vector the corresponding bit in a mask register selects the element in the first source operand (0) or second source operand (1). If no mask operand is provided the default is all ones. "Zero-masking" variants of the instructions zero the destination elements where the mask bit is 0. In other words these instructions are equivalent to copying the first source operand to the destination, then the second source operand with regular write masking. | ||||||||||||||||||

VBROADCASTSS, memory source

|

- | AVX | AVX | F+VL | F+VL | F | ||||||||||||

VBROADCASTSS, register source

|

- | AVX2 | AVX2 | F+VL | F+VL | F | ||||||||||||

VBROADCASTF32X2

|

- | - | - | - | DQ+VL | DQ | ||||||||||||

VBROADCASTF32X4

|

- | - | - | - | F+VL | F | ||||||||||||

VBROADCASTF32X8

|

- | - | - | - | - | DQ | ||||||||||||

VBROADCASTSD, memory source

|

- | - | AVX | - | F+VL | F | ||||||||||||

VBROADCASTSD, register source

|

- | - | AVX2 | - | F+VL | F | ||||||||||||

VBROADCASTF64X2

|

- | - | - | - | DQ+VL | DQ | ||||||||||||

VBROADCASTF64X4

|

- | - | - | - | - | F | ||||||||||||

VBROADCASTF128

|

- | - | AVX | - | - | - | ||||||||||||

| These instructions broadcast one (SS), two (F32X2), four (F32X4), or eight (F32X8) single precision values, or one (SD), two (F64X2), or four (F64X4) double precision values, or one block of 128 bits (F128), from the lowest lane of that width (e.g. 64 bits for F32X2) of a source vector register, or from memory to all lanes of that width in the destination vector. The AVX-512 instructions support write masking with single or double precision granularity. | ||||||||||||||||||

| Total: 574 | ||||||||||||||||||
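The packing rule described for VCOMPRESS(PS/PD) and VEXPAND(PS/PD) in the table above can be illustrated with a scalar reference model. The Python sketch below is only a model of the described behaviour, not of how hardware implements it. The function names `compress` and `expand`, the use of Python lists as vectors, and the integer `mask` argument (bit i corresponds to element i) are assumptions made for illustration. Only the register-destination form of compress and the register-source form of expand are modelled.

```python
def compress(src, mask, dest, zero_masking=False):
    """Scalar model of VCOMPRESS(PS/PD) with a register destination.

    Selected source elements are packed into the lowest destination slots.
    """
    # Slots that receive no element keep the old value or become zero.
    out = [0.0] * len(dest) if zero_masking else list(dest)
    j = 0  # next destination slot; advances only after a store
    for i, value in enumerate(src):
        if (mask >> i) & 1:
            out[j] = value
            j += 1
    return out


def expand(src, mask, dest, zero_masking=False):
    """Scalar model of VEXPAND(PS/PD) with a register source.

    Consecutive source elements are scattered to the selected destination slots.
    """
    out = list(dest)
    j = 0  # next source element; advances only after a load
    for i in range(len(dest)):
        if (mask >> i) & 1:
            out[i] = src[j]
            j += 1
        elif zero_masking:
            out[i] = 0.0
    return out
```

With the mask `0b10100101` and source elements 10 to 17, `compress` packs elements 0, 2, 5 and 7 into the four lowest destination slots, and `expand` with the same mask places four consecutive source elements back at positions 0, 2, 5 and 7.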

Mask register instructions[edit]

Except as noted the destination and source operands of all these instructions are mask registers. Byte, word, and doubleword instructions zero-extend the result to 64 bits.

| Instruction | AVX-512 (B) | AVX-512 (W) | AVX-512 (D) | AVX-512 (Q) | Description |
|---|---|---|---|---|---|
| KADD(B/W/D/Q) | DQ | DQ | BW | BW | Add two masks. |
| K(AND/ANDN/NOT/OR/XNOR/XOR)(B/W/D/Q) | DQ | F | BW | BW | Bitwise logical operations. ANDN is (not source1) and source2, XNOR is not (source1 xor source2). |
| KTEST(B/W/D/Q) | DQ | DQ | BW | BW | Performs the bitwise operations temp1 = source1 and source2, temp2 = (not source1) and source2, and sets ZF and CF (for branch instructions) to indicate whether the respective result is all zeros. |
| KORTEST(B/W/D/Q) | DQ | F | BW | BW | Performs the bitwise operation temp = source1 or source2, and sets ZF if the result is all zeros and CF if it is all ones. |
| KSHIFT(L/R)(B/W/D/Q) | DQ | F | BW | BW | Bitwise logical shift left/right by a constant. |
| KMOV(B/W/D/Q) | DQ | F | BW | BW | Copies a bit mask from a mask register to another mask register, a 32- or 64-bit GPR, or memory, or from a GPR or memory to a mask register. The mask is zero extended if the destination register is wider. |
| KUNPCK(BW/WD/DQ) | - | F | BW | BW | Concatenates the lower halves of the two source operands: the lower half of the first source operand becomes the upper half of the result, and the lower half of the second source operand becomes the lower half. |
| Total: 51 | | | | | |
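The flag behaviour given for KTEST and KORTEST above can be written out as a small reference model. The Python sketch below follows the descriptions in the table; the function names `ktest` and `kortest` and the explicit `width` parameter (8, 16, 32 or 64 for the B, W, D and Q forms) are assumptions for illustration, and the model returns the flags as a `(ZF, CF)` tuple instead of setting processor state.

```python
def ktest(source1, source2, width):
    """Model of KTEST(B/W/D/Q): returns (ZF, CF) for masks of the given width."""
    full = (1 << width) - 1  # all-ones mask of the operand width
    source1 &= full
    source2 &= full
    zf = int((source1 & source2) == 0)          # temp1 = source1 and source2
    cf = int((~source1 & source2 & full) == 0)  # temp2 = (not source1) and source2
    return zf, cf


def kortest(source1, source2, width):
    """Model of KORTEST(B/W/D/Q): returns (ZF, CF) for masks of the given width."""
    full = (1 << width) - 1
    temp = (source1 | source2) & full
    zf = int(temp == 0)     # result is all zeros
    cf = int(temp == full)  # result is all ones
    return zf, cf
```

In this model `kortest(0x0F, 0xF0, 8)` yields `(0, 1)` because the OR of the two byte masks is all ones, while the same operands at a width of 16 yield `(0, 0)`. This is why code that branches on CF after KORTEST has to use the variant matching the number of vector elements.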

Detection[edit]

| CPUID Input | CPUID Output | Instruction Set |
|---|---|---|
| EAX=07H, ECX=0 | EBX[bit 16] | AVX512F |
| EAX=07H, ECX=0 | EBX[bit 17] | AVX512DQ |
| EAX=07H, ECX=0 | EBX[bit 21] | AVX512_IFMA |
| EAX=07H, ECX=0 | EBX[bit 26] | AVX512PF |
| EAX=07H, ECX=0 | EBX[bit 27] | AVX512ER |
| EAX=07H, ECX=0 | EBX[bit 28] | AVX512CD |
| EAX=07H, ECX=0 | EBX[bit 30] | AVX512BW |
| EAX=07H, ECX=0 | EBX[bit 31] | AVX512VL |
| EAX=07H, ECX=0 | ECX[bit 01] | AVX512_VBMI |
| EAX=07H, ECX=0 | ECX[bit 06] | AVX512_VBMI2 |
| EAX=07H, ECX=0 | ECX[bit 08] | GFNI |
| EAX=07H, ECX=0 | ECX[bit 09] | VAES |
| EAX=07H, ECX=0 | ECX[bit 10] | VPCLMULQDQ |
| EAX=07H, ECX=0 | ECX[bit 11] | AVX512_VNNI |
| EAX=07H, ECX=0 | ECX[bit 12] | AVX512_BITALG |
| EAX=07H, ECX=0 | ECX[bit 14] | AVX512_VPOPCNTDQ |
| EAX=07H, ECX=0 | EDX[bit 02] | AVX512_4VNNIW |
| EAX=07H, ECX=0 | EDX[bit 03] | AVX512_4FMAPS |
| EAX=07H, ECX=0 | EDX[bit 08] | AVX512_VP2INTERSECT |
| EAX=07H, ECX=0 | EDX[bit 23] | AVX512_FP16 |
| EAX=07H, ECX=1 | EAX[bit 05] | AVX512_BF16 |
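The table above maps directly onto a bit-decoding routine. The Python sketch below decodes register values that have already been obtained from CPUID leaf 07H; it does not execute CPUID itself. The names `FEATURE_BITS` and `decode_features`, and the layout of the `cpuid_regs` argument, are assumptions made for illustration. The sketch also only reports what CPUID enumerates and does not check whether the operating system has enabled the mask and ZMM register state.

```python
# (leaf, subleaf, register, bit) -> feature name, copied from the table above.
FEATURE_BITS = {
    (7, 0, "ebx", 16): "AVX512F",
    (7, 0, "ebx", 17): "AVX512DQ",
    (7, 0, "ebx", 21): "AVX512_IFMA",
    (7, 0, "ebx", 26): "AVX512PF",
    (7, 0, "ebx", 27): "AVX512ER",
    (7, 0, "ebx", 28): "AVX512CD",
    (7, 0, "ebx", 30): "AVX512BW",
    (7, 0, "ebx", 31): "AVX512VL",
    (7, 0, "ecx", 1): "AVX512_VBMI",
    (7, 0, "ecx", 6): "AVX512_VBMI2",
    (7, 0, "ecx", 8): "GFNI",
    (7, 0, "ecx", 9): "VAES",
    (7, 0, "ecx", 10): "VPCLMULQDQ",
    (7, 0, "ecx", 11): "AVX512_VNNI",
    (7, 0, "ecx", 12): "AVX512_BITALG",
    (7, 0, "ecx", 14): "AVX512_VPOPCNTDQ",
    (7, 0, "edx", 2): "AVX512_4VNNIW",
    (7, 0, "edx", 3): "AVX512_4FMAPS",
    (7, 0, "edx", 8): "AVX512_VP2INTERSECT",
    (7, 0, "edx", 23): "AVX512_FP16",
    (7, 1, "eax", 5): "AVX512_BF16",
}


def decode_features(cpuid_regs):
    """Return the feature names whose CPUID bits are set.

    cpuid_regs (hypothetical layout) maps (leaf, subleaf) to a dict of
    register name -> 32-bit value, e.g. {(7, 0): {"ebx": ..., "ecx": ...}}.
    Missing leaves or registers are treated as zero.
    """
    found = []
    for (leaf, subleaf, reg, bit), name in FEATURE_BITS.items():
        value = cpuid_regs.get((leaf, subleaf), {}).get(reg, 0)
        if (value >> bit) & 1:
            found.append(name)
    return found
```

Because any processor implementing part of AVX-512 must implement AVX512F, a caller would typically check for `"AVX512F"` in the result first and treat the other names as optional additions.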

Implementation[edit]

| Designer | Microarchitecture | Year | Support Level | ||||||||||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| F | CD | ER | PF | BW | DQ | VL | FP16 | IFMA | VBMI | VBMI2 | BITALG | VPOPCNTDQ | VP2INTERSECT | 4VNNIW | 4FMAPS | VNNI | BF16 | ||||

| Intel | Knights Landing | 2016 | ✔ | ✔ | ✔ | ✔ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | |

| Knights Mill | 2017 | ✔ | ✔ | ✔ | ✔ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✔ | ✘ | ✔ | ✔ | ✘ | ✘ | ||

| Skylake (server) | 2017 | ✔ | ✔ | ✘ | ✘ | ✔ | ✔ | ✔ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ||

| Cannon Lake | 2018 | ✔ | ✔ | ✘ | ✘ | ✔ | ✔ | ✔ | ✘ | ✔ | ✔ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ||

| Cascade Lake | 2019 | ✔ | ✔ | ✘ | ✘ | ✔ | ✔ | ✔ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✔ | ✘ | ||

| Cooper Lake | 2020 | ✔ | ✔ | ✘ | ✘ | ✔ | ✔ | ✔ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✔ | ✔ | ||

| Tiger Lake | 2020 | ✔ | ✔ | ✘ | ✘ | ✔ | ✔ | ✔ | ✘ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✘ | ✘ | ✔ | ✘ | ||

| Rocket Lake | 2021 | ✔ | ✔ | ✘ | ✘ | ✔ | ✔ | ✔ | ✘ | ✔ | ✔ | ✔ | ✔ | ✔ | ✘ | ✘ | ✘ | ✔ | ✘ | ||

| Alder Lake | 2021 | ✔ | ✔ | ✘ | ✘ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✘ | ✘ | ✔ | ✔ | ||