
| Skylake (server) µarch | |

| General Info | |

| Arch Type | CPU |

| Designer | Intel |

| Manufacturer | Intel |

| Introduction | May 4, 2017 |

| Process | 14 nm |

| Core Configs | 4, 6, 8, 10, 12, 14, 16, 18, 20, 22, 24, 26, 28 |

| Pipeline | |

| Type | Superscalar |

| OoOE | Yes |

| Speculative | Yes |

| Reg Renaming | Yes |

| Stages | 14-19 |

| Instructions | |

| ISA | x86-16, x86-32, x86-64 |

| Extensions | MOVBE, MMX, SSE, SSE2, SSE3, SSSE3, SSE4.1, SSE4.2, POPCNT, AVX, AVX2, AES, PCLMUL, FSGSBASE, RDRND, FMA3, F16C, BMI, BMI2, VT-x, VT-d, TXT, TSX, RDSEED, ADCX, PREFETCHW, CLFLUSHOPT, XSAVE, SGX, MPX, AVX-512 |

| Cache | |

| L1I Cache | 32 KiB/core 8-way set associative |

| L1D Cache | 32 KiB/core 8-way set associative |

| L2 Cache | 1 MiB/core 16-way set associative |

| L3 Cache | 1.375 MiB/core 11-way set associative |

| Cores | |

| Core Names | Skylake X, Skylake SP |

| Succession | |

Skylake (SKL) Server Configuration is Intel's successor to Broadwell, a microarchitecture for enthusiasts and servers fabricated on an enhanced 14 nm+ process. Skylake is the "Architecture" phase of Intel's PAO (Process-Architecture-Optimization) model. The microarchitecture was developed by Intel's R&D center in Haifa, Israel.

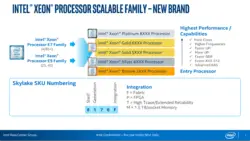

For desktop enthusiasts, Skylake is branded as Core i7 and Core i9 processors (under the Core X series). For scalable server-class processors, Intel branded it as Xeon Bronze, Xeon Silver, Xeon Gold, and Xeon Platinum.

There are a fair number of major differences in the Skylake server configuration vs the client configuration.


Codenames

- See also: Client Skylake's Codenames

| Core | Abbrev | Target |

|---|---|---|

| Skylake X | SKL-X | High-end desktops & enthusiasts market |

| Skylake SP | SKL-SP | Server Scalable Processors |

Brands

- See also: Client Skylake's Brands

Intel introduced a number of new server chip families with the introduction of Skylake SP as well as a new enthusiasts family with the introduction of Skylake X.

| Family | General Description | Cores | HT | AVX | AVX2 | AVX-512 | TBT | ECC |
|---|---|---|---|---|---|---|---|---|
| Core i7 | Enthusiasts/High Performance (X) | 6 - 8 | ✔ | ✔ | ✔ | ✔ | ✔ | ✘ |
| Core i9 | Enthusiasts/High Performance | 10 - 18 | ✔ | ✔ | ✔ | ✔ | ✔ | ✘ |

| Family | General Description | Cores | HT | TBT | AVX-512 | AVX-512 Units | UPI links | Scalability |
|---|---|---|---|---|---|---|---|---|
| Xeon Bronze | Entry-level performance / Cost-sensitive | 6 - 8 | ✘ | ✘ | ✔ | 1 | 2 | Up to 2 |
| Xeon Silver | Mid-range performance / Efficient lower power | 4 - 12 | ✔ | ✔ | ✔ | 1 | 2 | Up to 2 |
| Xeon Gold 5000 | High performance | 4 - 14 | ✔ | ✔ | ✔ | 1 | 2 | Up to 4 |
| Xeon Gold 6000 | Higher performance | 6 - 22 | ✔ | ✔ | ✔ | 2 | 3 | Up to 4 |
| Xeon Platinum | Highest performance / flexibility | 4 - 28 | ✔ | ✔ | ✔ | 2 | 3 | Up to 8 |

Release Dates

Skylake-based Core X was introduced in May 2017 while Skylake SP was introduced in July 2017.

Process Technology

- Main article: Kaby Lake § Process Technology

Unlike mainstream Skylake models, all Skylake server configuration models are fabricated on Intel's enhanced 14 nm+ process, which is also used by Kaby Lake (see Kaby Lake § Process Technology for more info).

Compatibility

| Vendor | OS | Version | Notes |
|---|---|---|---|
| Microsoft | Windows | Windows Server 2008 | Support |
| Microsoft | Windows | Windows Server 2008 R2 | Support |
| Microsoft | Windows | Windows Server 2012 | Support |
| Microsoft | Windows | Windows Server 2012 R2 | Support |
| Microsoft | Windows | Windows Server 2016 | Support |
| Linux | Linux | Kernel 3.19 | Initial Support (MPX support) |

Compiler support

| Compiler | Arch-Specific | Arch-Favorable |
|---|---|---|
| ICC | -march=skylake-avx512 | -mtune=skylake-avx512 |
| GCC | -march=skylake-avx512 | -mtune=skylake-avx512 |
| LLVM | -march=skylake-avx512 | -mtune=skylake-avx512 |
| Visual Studio | /arch:AVX2 | /tune:skylake |
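Binaries built with the arch-specific flags above may contain AVX-512 instructions and can fault on processors that lack them, so a runtime check before selecting such a build is a common precaution. The following is a minimal sketch, assuming a Linux system that exposes feature flags in `/proc/cpuinfo` and assuming the AVX-512 foundation subset is reported under the flag name `avx512f`. The helper names `cpu_flags` and `supports_avx512f` are hypothetical.

```python
def cpu_flags(cpuinfo_text):
    """Return the feature flags from the first 'flags' line of cpuinfo text."""
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "flags":
            return set(value.split())
    return set()


def supports_avx512f(cpuinfo_text):
    # Assumption: the foundation subset shows up as the flag "avx512f".
    return "avx512f" in cpu_flags(cpuinfo_text)


if __name__ == "__main__":
    try:
        with open("/proc/cpuinfo") as f:
            text = f.read()
    except OSError:
        text = ""  # not Linux, or the file is unreadable
    print("AVX-512F available:", supports_avx512f(text))
```

The parsing is kept separate from the file read so that the check can be exercised with sample text on any platform.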

CPUID

| Core | Extended Family | Family | Extended Model | Model | Combined |
|---|---|---|---|---|---|
| X | 0 | 0x6 | 0x5 | 0xE | Family 6 Model 94 |
| SP | 0 | 0x6 | 0x5 | 0x5 | Family 6 Model 85 |
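The "Family 6 Model N" values in the table follow from the raw fields by the usual x86 CPUID convention: the extended model supplies the high nibble of the model number, and the extended family is added only when the base family is 0xF. A minimal sketch of that arithmetic, with hypothetical function names:

```python
def display_family(ext_family, family):
    # The extended family is only added when the base family field is 0xF.
    return family + ext_family if family == 0xF else family


def display_model(ext_model, family, model):
    # The extended model forms the high nibble for families 0x6 and 0xF.
    if family in (0x6, 0xF):
        return (ext_model << 4) | model
    return model


if __name__ == "__main__":
    for core, ext_model, model in (("X", 0x5, 0xE), ("SP", 0x5, 0x5)):
        print(core, display_family(0, 0x6), display_model(ext_model, 0x6, model))
```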

Architecture

Skylake server configuration introduces a number of significant changes from both Intel's previous microarchitecture, Broadwell, as well as the Skylake (client) architecture. Unlike client models, Skylake servers and HEDT models will still incorporate the fully integrated voltage regulator (FIVR) on-die. Those chips also have an entirely new multi-core architecture along with a new mesh topology interconnect network (from ring topology).

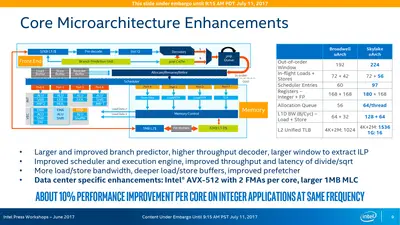

Key changes from Broadwell

- Improved "14 nm+" process (see Kaby Lake § Process Technology)
- Omni-Path Architecture (OPA)
- Mesh architecture
- Sub-NUMA Clustering (SNC) support (replaces the Cluster-on-Die (COD) implementation)
- Chipset
- Core
  - Front End
    - LSD is disabled (likely due to a bug)
    - Larger legacy pipeline delivery (5 µOPs, up from 4)
      - Another simple decoder has been added.
    - Allocation Queue (IDQ)
      - Larger delivery (6 µOPs, up from 4)
      - 2.28x larger buffer (64/thread, up from 56)
      - Partitioned for each active thread (from unified)
    - Improved branch prediction unit
      - Reduced penalty for wrong direct jump target
      - No specifics were disclosed
    - µOP Cache
      - Instruction window is now 64 Bytes (from 32)
      - 1.5x bandwidth (6 µOPs/cycle, up from 4)

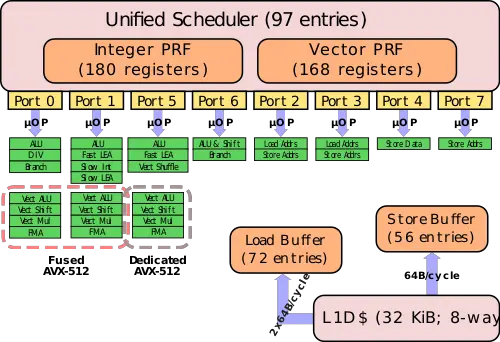

  - Execution Engine
    - Larger re-order buffer (224 entries, up from 192)
    - Larger scheduler (97 entries, up from 64)
    - Larger Integer Register File (180 entries, up from 168)
  - Back-end
    - Port 4 now performs 512b stores (from 256b)
    - Port 0 & Port 1 can now be fused to perform AVX-512
    - Port 5 now can do full 512b operations (not on all models)
  - Memory Subsystem
    - Larger store buffer (56 entries, up from 42)
    - Page split load penalty reduced 20-fold
    - Larger Write-back buffer
    - Store is now 64B/cycle (from 32B/cycle)
    - Load is now 2x64B/cycle (from 2x32B/cycle)
- Memory

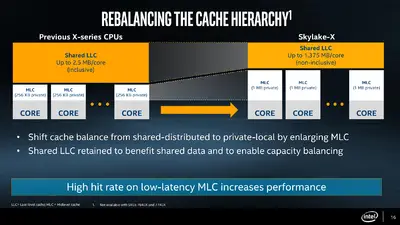

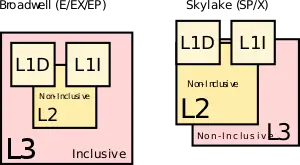

  - L2$
    - Increased to 1 MiB/core (from 256 KiB/core)
  - L3$
    - Was made non-inclusive (from inclusive)
    - Reduced to 1.375 MiB/core (from 2.5 MiB/core)
  - DRAM
    - hex-channel DDR4-2666 (from quad-channel)
- Support for faster DDR4-2666 memory
- TLBs
  - ITLB
    - 4 KiB page translations were changed from 4-way to 8-way associative
  - STLB
    - 4 KiB + 2 MiB page translations were changed from 6-way to 12-way associative
- DMI/PEG are now on a discrete clock domain with BCLK sitting on its own domain with full-range granularity (1 MHz intervals)
- Testability
  - New support for Direct Connect Interface (DCI), a new debugging transport protocol designed to allow debugging of closed cases (e.g. laptops, embedded) by accessing things such as JTAG through any USB 3 port.

CPU changes

- Most ALU operations have a throughput of 4 op/cycle for 8 and 32-bit registers. 64-bit ops are still limited to 3 op/cycle. (16-bit throughput varies per op and can be 4, 3.5 or 2 op/cycle.)

- MOVSX and MOVZX have 4 op/cycle throughput for 16->32 and 32->64 forms, in addition to Haswell's 8->32, 8->64 and 16->64 bit forms.

- ADC and SBB have throughput of 1 op/cycle, same as Haswell.

- Vector moves have throughput of 4 op/cycle (move elimination).

- Not only zeroing vector vpXORxx and vpSUBxx ops, but also vPCMPxxx on the same register, have throughput of 4 op/cycle.

- Vector ALU ops are often "standardized" to a latency of 4. For example, vADDPS and vMULPS used to have latencies of 3 and 5; now both are 4.

- Fused multiply-add ops have a latency of 4 and a throughput of 2 op/cycle (a reciprocal throughput of 0.5 cycles per op).

- Throughput of vADDps, vSUBps, vCMPps, vMAXps, their scalar and double analogs is increased to 2 op/cycle.

- Throughput of vPSLxx and vPSRxx with immediate (i.e. fixed vector shifts) is increased to 2 op/cycle.

- Throughput of vANDps, vANDNps, vORps, vXORps, their scalar and double analogs, vPADDx, vPSUBx is increased to 3 op/cycle.

- vDIVPD, vSQRTPD have approximately twice as good throughput: from 8 to 4 and from 28 to 12 cycles/op.

- Throughput of some MMX ALU ops (such as PAND mm1, mm2) is decreased to 2 or 1 op/cycle (users are expected to use wider SSE/AVX registers instead).
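The latency and throughput figures above interact: a single dependency chain is bound by latency, so saturating the execution ports needs roughly latency times throughput independent operations in flight. The sketch below works this out for fused multiply-add, reading the FMA figure as a latency of 4 cycles and a reciprocal throughput of 0.5 cycles per op (two FMAs started per cycle). That reading, and the function names, are assumptions made for illustration, and the model ignores everything except these two limits.

```python
import math


def independent_chains_needed(latency_cycles, ops_per_cycle):
    """Independent dependency chains needed to keep the units busy."""
    return math.ceil(latency_cycles * ops_per_cycle)


def cycles_lower_bound(n_ops, chains, latency_cycles, ops_per_cycle):
    """Rough lower bound on cycles for n_ops split evenly over `chains` chains."""
    latency_bound = math.ceil(n_ops / chains) * latency_cycles
    throughput_bound = math.ceil(n_ops / ops_per_cycle)
    return max(latency_bound, throughput_bound)


if __name__ == "__main__":
    print("chains needed:", independent_chains_needed(4, 2))
    for chains in (1, 2, 4, 8):
        print(chains, "chains:", cycles_lower_bound(1024, chains, 4, 2), "cycles")
```

Under these assumptions a reduction written with a single accumulator is latency-bound, while splitting it across eight accumulators reaches the throughput bound.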

New instructions

- See also: Client Skylake's New instructions

Skylake server introduced a number of new instructions:

- SGX1 - Software Guard Extensions, Version 1
- MPX - Memory Protection Extensions
- XSAVEC - Save processor extended states with compaction to memory
- XSAVES - Save processor supervisor-mode extended states to memory
- CLFLUSHOPT - Flushes and invalidates a memory operand and its associated cache line (all L1/L2/L3 etc.)
- AVX-512
- PKU - Memory Protection Keys for Userspace
- PCOMMIT - PCOMMIT instruction
- CLWB - CLWB instruction

Block Diagram

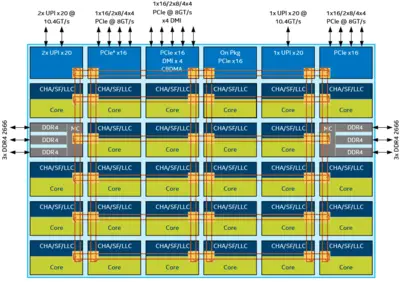

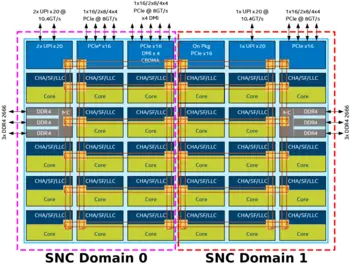

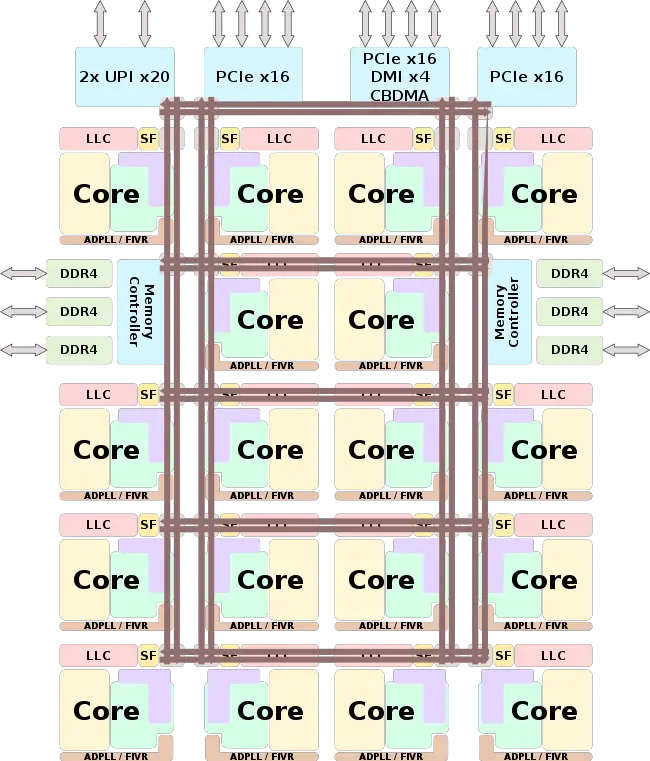

Entire SoC Overview

Note that the LCC die is identical without the two bottom rows. The XCC (28-core) die has one additional row and two additional columns of cores. Otherwise the die is identical.

- CHA - Caching and Home Agent

- SF - Snooping Filter

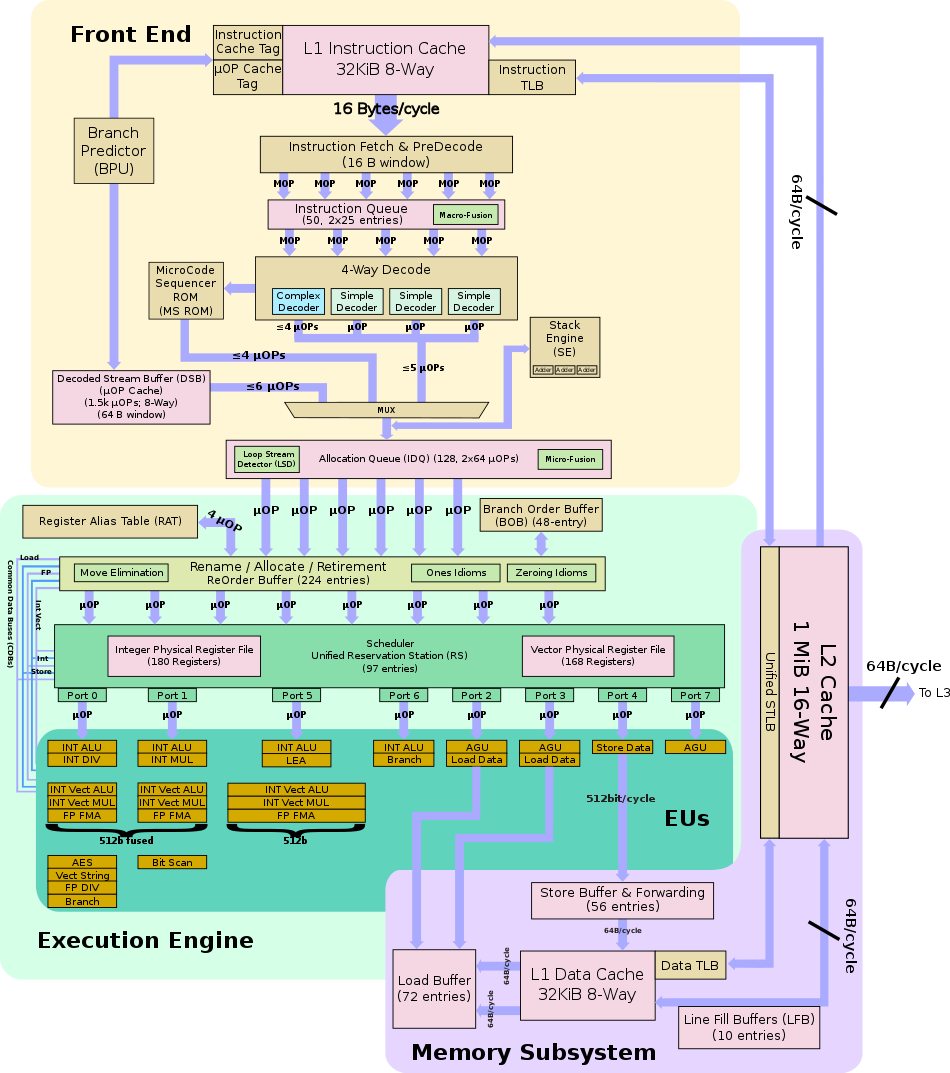

Individual Core

Memory Hierarchy

Client

Some major organizational changes were done to the cache hierarchy in Skylake server configuration vs Broadwell/Haswell. The memory hierarchy for Skylake's server and HEDT processors has been rebalanced. Note that the L3 is now non-inclusive and some of the SRAM from the L3 cache was moved into the private L2 cache.

- Cache
  - L0 µOP cache:
    - 1,536 µOPs, 8-way set associative
      - 32 sets, 6-µOP line size
      - statically divided between threads, per core, inclusive with L1I
  - L1I Cache:
    - 32 KiB, 8-way set associative
      - 64 sets, 64 B line size
      - shared by the two threads, per core
  - L1D Cache:
    - 32 KiB, 8-way set associative
    - 64 sets, 64 B line size
    - shared by the two threads, per core
    - 4 cycles for fastest load-to-use (simple pointer accesses)
    - 5 cycles for complex addresses
    - 128 B/cycle load bandwidth (2x64 B)
    - 64 B/cycle store bandwidth
    - Write-back policy
  - L2 Cache:
    - Unified, 1 MiB, 16-way set associative
    - 64 B line size
    - Non-inclusive
    - 64 B/cycle bandwidth to L1$
    - Write-back policy
    - 14 cycles latency
  - L3 Cache:
    - 1.375 MiB/core, shared across all cores
      - Note that some models have non-default cache sizes which are larger due to some disabled cores
    - 64 B line size
    - 11-way set associative
    - Non-Inclusive
    - Write-back policy
    - 50-70 cycles latency
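The set counts listed above follow directly from capacity, associativity, and line size (sets = size / (ways x line size)). The short sketch below reproduces that arithmetic and shows a simple set-index calculation. The function names are hypothetical, and plain modulo indexing is an assumption that is only meant to illustrate a small cache such as the L1D; it is not a description of how the L3 slices are addressed.

```python
KIB = 1024
MIB = 1024 * KIB


def num_sets(size_bytes, ways, line_bytes=64):
    """Number of sets in a set-associative cache."""
    sets, remainder = divmod(size_bytes, ways * line_bytes)
    if remainder:
        raise ValueError("size is not a multiple of ways * line size")
    return sets


def set_index(address, size_bytes, ways, line_bytes=64):
    # Assumption: the set is chosen by simple modulo on the line address.
    return (address // line_bytes) % num_sets(size_bytes, ways, line_bytes)


if __name__ == "__main__":
    print("L1D sets:", num_sets(32 * KIB, 8))
    print("L2 sets:", num_sets(1 * MIB, 16))
    print("L3 sets per 1.375 MiB slice:", num_sets(1408 * KIB, 11))
    # The µOP cache is counted in µOPs rather than bytes: 6-µOP lines, 8 ways.
    print("µOP cache sets:", num_sets(1536, 8, line_bytes=6))
```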

The Skylake TLB consists of a dedicated L1 TLB for the instruction cache (ITLB) and another one for the data cache (DTLB). Additionally, there is a unified L2 TLB (STLB).

- TLBs:
  - ITLB
    - 4 KiB page translations:
      - 128 entries; 8-way set associative
      - dynamic partitioning
    - 2 MiB / 4 MiB page translations:
      - 8 entries per thread; fully associative
      - Duplicated for each thread
  - DTLB
    - 4 KiB page translations:
      - 64 entries; 4-way set associative
      - fixed partition
    - 2 MiB / 4 MiB page translations:
      - 32 entries; 4-way set associative
      - fixed partition
    - 1 GiB page translations:
      - 4 entries; fully associative
      - fixed partition
  - STLB
    - 4 KiB + 2 MiB page translations:
      - 1536 entries; 12-way set associative
      - fixed partition
    - 1 GiB page translations:
      - 16 entries; 4-way set associative
      - fixed partition
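A useful way to read the TLB figures above is as "reach": the amount of memory that can be mapped without a page walk, which is simply entries times page size. The sketch below computes this for a few of the listed structures. The function name is hypothetical, and because the STLB entries are shared between 4 KiB and 2 MiB pages, its two figures are the extremes for a single page size rather than values that hold simultaneously.

```python
KIB = 1024
MIB = 1024 * KIB
GIB = 1024 * MIB


def tlb_reach(entries, page_bytes):
    """Bytes of address space covered when every entry maps a distinct page."""
    return entries * page_bytes


if __name__ == "__main__":
    print("DTLB, 4 KiB pages:", tlb_reach(64, 4 * KIB) // KIB, "KiB")
    print("DTLB, 2 MiB pages:", tlb_reach(32, 2 * MIB) // MIB, "MiB")
    print("STLB, 4 KiB pages:", tlb_reach(1536, 4 * KIB) // MIB, "MiB")
    print("STLB, 2 MiB pages:", tlb_reach(1536, 2 * MIB) // GIB, "GiB")
    print("STLB, 1 GiB pages:", tlb_reach(16, 1 * GIB) // GIB, "GiB")
```

The gap between the 4 KiB and 2 MiB figures is the usual argument for large pages on workloads with big working sets.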

Overview

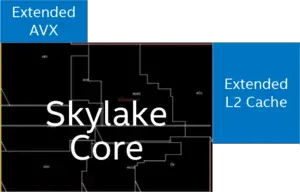

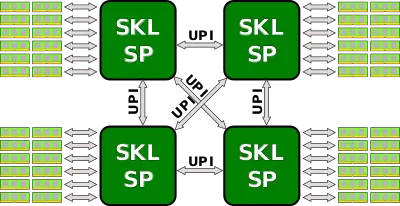

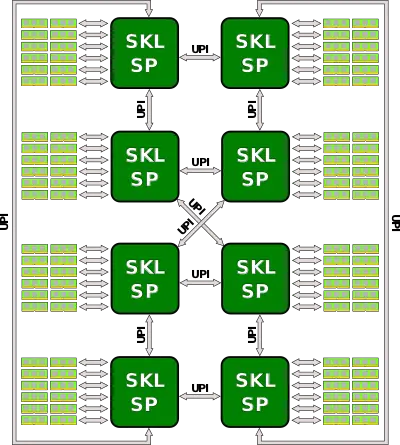

Skylake-based servers have been entirely re-architected to meet the need for increased scalability and performance while staying within power requirements. A superset model is shown on the right. Skylake-based servers are the first mainstream servers to make use of Intel's new mesh interconnect architecture, an architecture that was previously explored, experimented with, and enhanced in Intel's Xeon Phi many-core processors. These processors are offered with 4 to 28 cores and 8 to 56 threads. With Skylake, Intel now has a separate core architecture for those chips, which incorporates a plethora of new technologies and features, including support for the new AVX-512 instruction set extension.

All models incorporate 6 channels of DDR4 supporting up to 12 DIMMs for a total of 768 GiB (extended models support 1.5 TiB). For I/O, all models incorporate 48 (3x16) lanes of PCIe 3.0. An additional x4 PCIe 3.0 link is reserved exclusively for DMI to the Lewisburg chipset. A select number of models (specifically those with the F suffix) have an Omni-Path Host Fabric Interface (HFI) on-package (see Integrated Omni-Path).
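The memory figures above translate into a theoretical peak bandwidth and a per-DIMM capacity with simple arithmetic. The sketch below assumes the standard 64-bit (8-byte) data bus per DDR4 channel and the nominal 2666 MT/s rate of DDR4-2666; the 64 GiB and 128 GiB DIMM sizes are not stated in the text and are simply what the quoted totals imply for 12 DIMMs. Function names are hypothetical, and sustained bandwidth in practice is lower than this peak.

```python
def peak_dram_bandwidth_gbs(channels, mega_transfers_per_s, bus_bytes=8):
    """Theoretical peak in GB/s (decimal): channels x MT/s x bytes per transfer."""
    return channels * mega_transfers_per_s * bus_bytes / 1000


def total_capacity_gib(dimms, dimm_gib):
    return dimms * dimm_gib


if __name__ == "__main__":
    print("6 x DDR4-2666:", peak_dram_bandwidth_gbs(6, 2666), "GB/s")
    print("4 x DDR4-2666:", peak_dram_bandwidth_gbs(4, 2666), "GB/s")
    print("12 x 64 GiB:", total_capacity_gib(12, 64), "GiB")
    print("12 x 128 GiB:", total_capacity_gib(12, 128), "GiB")
```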

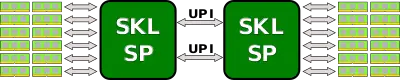

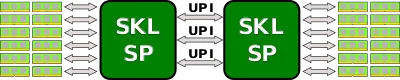

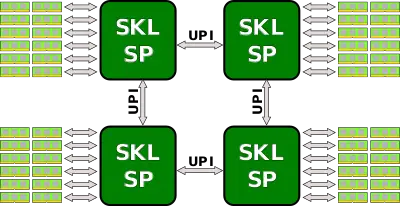

Skylake processors are designed for scalability, supporting 2-way, 4-way, and 8-way multiprocessing through Intel's new Ultra Path Interconnect (UPI) links, with two to three links being offered (see § Scalability). High-end models have node controller support, allowing for higher-way configurations (e.g., 32-way multiprocessing).

Pipeline

- See also: Client Architecture

All the changes and improvements made to the client pipeline are also found in the server configuration.

Front-end

Other than the large number of changes described in the client front-end, no server-specific changes were made to the front-end, with the exception of the LSD: the Loop Stream Detector (LSD) has been disabled. While the exact reason is not known, it might be related to a severe issue that was experienced by the OCaml Development Team. The issue was patched via microcode on the client platform; however, this change might indicate that the LSD was disabled there as well. The exact implications of this are unknown.

Execution engine

This is the first implementation to incorporate AVX-512, a 512-bit SIMD x86 instruction set extension. Intel introduced AVX-512 in two different ways:

In the simple implementation, the variant used in the entry-level and mid-range Xeon servers, AVX-512 fuses Port 0 and Port 1 to form a 512-bit unit. Since those two ports are 256 bits wide, an AVX-512 operation that is dispatched by the scheduler to port 0 will execute on both ports. Note that unrelated operations can still execute in parallel. For example, an AVX-512 operation and an Int ALU operation may execute in parallel: the AVX-512 operation is dispatched on port 0 and uses the AVX unit on port 1 as well, while the Int ALU operation executes independently on port 1.

In the high-end and highest performance Xeons, Intel added a second dedicated AVX-512 unit in addition to the fused Port0-1 operations described above. The dedicated unit is situated on Port 5.
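The difference between the one-unit and two-unit configurations can be expressed as peak floating-point operations per cycle per core. The sketch below assumes that each 512-bit unit can start one fused multiply-add per cycle and counts an FMA as two operations. It deliberately ignores clock frequency, which differs between models and workloads, and the function name is hypothetical.

```python
def peak_flops_per_cycle(avx512_units, element_bits=32, vector_bits=512):
    """Peak FLOPs per cycle per core, assuming one FMA per unit per cycle."""
    lanes = vector_bits // element_bits
    return avx512_units * lanes * 2  # an FMA counts as a multiply plus an add


if __name__ == "__main__":
    for units in (1, 2):
        print(units, "unit(s):",
              peak_flops_per_cycle(units, 32), "SP FLOPs/cycle,",
              peak_flops_per_cycle(units, 64), "DP FLOPs/cycle")
```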

Implementation

Physically, Intel added 768 KiB L2 cache and the second AVX-512 VPU externally to the core.

Memory subsystem

The medium level cache (MLC) and last level cache (LLC) were rebalanced. Traditionally, Intel had a 256 KiB L2 cache which was duplicated, along with the L1s, in the LLC, which was 2.5 MiB. That is, prior to Skylake, the 256 KiB L2 cache actually took up 512 KiB of space, for a total of 2.25 MiB of effective cache per core. In Skylake, Intel doubled the L2 and quadrupled the effective capacity to 1 MiB while decreasing the LLC to 1.375 MiB.

The LLC is also now non-inclusive, i.e., a line in the L2 may or may not be in the L3 (no guarantee is made); what is stored where depends on the particular access pattern of the executing application, the size of the code and data accessed, and the inter-core sharing behavior.

Having an inclusive L3 makes cache coherence considerably easier to implement: snooping only requires checking the L3 cache tags to know if the data is on board and in which core. It also makes passing data around a bit more efficient. It's currently unknown what mechanism is being used to reduce snooping with the non-inclusive LLC. In the past, Intel has discussed a couple of additional options they were researching, such as NCID (non-inclusive cache, inclusive directory architecture). It's possible that NCID or a related derivative is being used in Skylake.

These changes also mean that software optimized for data placement in the various caches needs to be revised. In particular, in situations where data is not shared, the overall capacity can be treated as L2+L3, for a total of 2.375 MiB.
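The capacity figures in this section can be checked with a small calculation. The sketch below is one possible reading of the numbers, not a statement of how Intel accounts for them: with an inclusive L3, the part of the L3 that mirrors the L2 adds no capacity, whereas with a non-inclusive L3 and unshared data the two levels can, in the best case, hold distinct lines. Function names are hypothetical and sizes are in KiB.

```python
def l3_beyond_l2_kib(l2_kib, l3_kib, l3_inclusive):
    """L3 space per core that is not spent mirroring L2 contents."""
    return l3_kib - l2_kib if l3_inclusive else l3_kib


def combined_capacity_kib(l2_kib, l3_kib, l3_inclusive):
    """Best-case distinct data held by one core's L2 plus its L3 share."""
    return l2_kib + l3_beyond_l2_kib(l2_kib, l3_kib, l3_inclusive)


if __name__ == "__main__":
    # Previous generation: 256 KiB L2, 2.5 MiB inclusive L3 per core.
    print(l3_beyond_l2_kib(256, 2560, True) / 1024, "MiB of L3 beyond the L2 copy")
    print(combined_capacity_kib(256, 2560, True) / 1024, "MiB combined")
    # Skylake server: 1 MiB L2, 1.375 MiB non-inclusive L3 per core.
    print(combined_capacity_kib(1024, 1408, False) / 1024, "MiB combined")
```

The non-inclusive case is a best case: lines that are shared between cores, or that happen to reside in both levels, reduce the usable total.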

Mesh Architecture

Over the previous several generations, Intel has been adding cores onto the die and connecting them via a ring architecture. This was sufficient until recently. With each generation, the added cores increased the access latency while lowering the available bandwidth per core. Intel mitigated this problem by splitting the die into two halves, each on its own ring. This reduced hopping distance and added bandwidth, but it did not solve the growing fundamental inefficiencies of the ring architecture.

This was addressed with the new mesh architecture that is implemented in the Skylake server processors. The mesh is arranged as a matrix of vertical and horizontal communication paths which allow communication to take the shortest path to the correct node. The new mesh architecture implements a modular design for the routing resources in order to remove the various bottlenecks. That is, the mesh architecture now integrates the caching agent, the home agent, and the IO subsystem on the mesh interconnect, distributed across all the cores. Each core now has its own associated LLC slice as well as the snooping filter and the Caching and Home Agent (CHA). Additional nodes such as the two memory controllers, the Ultra Path Interconnect (UPI) nodes, and PCIe are now independent nodes on the mesh as well, and they behave identically to any other node/core in the network. This means that in addition to the performance increase expected from lower core-to-core and core-to-memory latency, there should be a substantial increase in I/O performance. The CHA, which is found on each of the LLC slices, now maps the addresses being accessed to the specific LLC bank, memory controller, or I/O subsystem. This provides the information required for the routing to take place.
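The scaling argument for moving from a ring to a mesh can be illustrated by comparing average hop counts. The sketch below is a simplified model under stated assumptions: a single bidirectional ring with shortest-direction routing, and a rectangular mesh in which a message takes a shortest vertical-plus-horizontal path. The 36-stop ring and 6x6 grid are illustrative sizes chosen for comparison, not the floorplan of any particular die, and the model ignores the split-ring arrangement and all contention effects.

```python
from itertools import product


def ring_mean_hops(stops):
    """Mean shortest-path hops between distinct stops on a bidirectional ring."""
    total = sum(min(d, stops - d) for d in range(1, stops))
    return total / (stops - 1)


def mesh_mean_hops(rows, cols):
    """Mean shortest-path (Manhattan) hops between distinct nodes of a 2D mesh."""
    nodes = list(product(range(rows), range(cols)))
    total = 0
    pairs = 0
    for (r1, c1), (r2, c2) in product(nodes, nodes):
        if (r1, c1) != (r2, c2):
            total += abs(r1 - r2) + abs(c1 - c2)
            pairs += 1
    return total / pairs


if __name__ == "__main__":
    print("ring, 36 stops:", round(ring_mean_hops(36), 2), "hops on average")
    print("mesh, 6x6:", round(mesh_mean_hops(6, 6), 2), "hops on average")
```

In this model the average ring distance grows linearly with the number of stops, while the mesh distance grows with the side length of the grid, which is the intuition behind the change.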

Cache Coherency

Given the new mesh architecture, new tradeoffs were involved. The new UPI inter-socket links are a valuable resource that could be bottlenecked when flooded with unnecessary cross-socket snoop requests. There's also considerably higher memory bandwidth with Skylake, which can impact performance. As a compromise, the previous four snoop modes (no-snoop, early snoop, home snoop, and directory) have been reduced to just directory-based coherency. This also reduces the implementation complexity (which is already considerable in itself).

It should be pointed out that the directory-based coherency optimizations that were done in previous generations have been further improved with Skylake, particularly OSB, the HitME cache, and the IO directory cache. Skylake maintained support for Opportunistic Snoop Broadcast (OSB), which allows the network to opportunistically make use of the UPI links when idle or lightly loaded, thereby avoiding an expensive memory directory lookup. With the mesh network and distributed CHAs, HitME is now distributed and scales with the CHAs, speeding up cache-to-cache transfers (those are the migratory cache lines that frequently get transferred between nodes). Specifically for I/O operations, the I/O directory cache (IODC), which was introduced with Haswell, improves stream throughput by eliminating directory reads for InvItoE from the snoopy caching agent. Previously this was implemented as a 64-entry directory cache to complement the directory in memory. In Skylake, with a distributed CHA at each node, the IODC is implemented as an eight-entry directory cache per CHA.

Sub-NUMA Clustering

In previous generations, Intel had a feature called cluster-on-die (COD), which was introduced with Haswell. With Skylake, there's a similar feature called sub-NUMA clustering (SNC). With a memory controller physically located on each side of the die, SNC allows for the creation of two localized domains, with each memory controller belonging to one domain. The processor can then map the addresses from the controller to the distributed home agents and LLC in its domain. This allows executing code to experience lower LLC and memory latency within its domain compared to accesses outside of the domain.

It should be pointed out that, in contrast to COD, SNC has a unique location for every address in the LLC, and an address is never duplicated across LLC banks (previously, COD cache lines could have copies). Additionally, on a multiprocessor system, addresses mapped to memory on remote sockets are still uniformly distributed across all LLC banks irrespective of the localized SNC domain.
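From software, the two SNC domains are typically visible as separate NUMA nodes, so a two-socket system with SNC enabled would be expected to report four nodes instead of two. The sketch below shows one way to check this, assuming a Linux system that lists online nodes in `/sys/devices/system/node/online` using the kernel's usual range-list format (for example `0-3`). The function names are hypothetical, and whether SNC is enabled is a platform firmware setting that this sketch does not inspect.

```python
def parse_id_list(text):
    """Parse a kernel-style ID list such as '0-3' or '0,2-3' into integers."""
    ids = []
    for part in text.strip().split(","):
        if not part:
            continue
        lo, sep, hi = part.partition("-")
        ids.extend(range(int(lo), int(hi) + 1) if sep else [int(lo)])
    return ids


def online_numa_nodes(path="/sys/devices/system/node/online"):
    try:
        with open(path) as f:
            return parse_id_list(f.read())
    except OSError:
        return []  # not Linux, or NUMA information is unavailable


if __name__ == "__main__":
    nodes = online_numa_nodes()
    print("online NUMA nodes:", nodes)
```

Code that is sensitive to LLC and memory latency can then use the node list to keep threads and their memory within one domain.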

Scalability

- See also: QuickPath Interconnect and Ultra Path Interconnect

In the last couple of generations, Intel utilized QuickPath Interconnect (QPI), which served as a high-speed point-to-point interconnect. QPI has been replaced by the Ultra Path Interconnect (UPI), a higher-efficiency coherent interconnect for scalable systems that allows multiple processors to share a single address space. Depending on the exact model, each processor can have either two or three UPI links connecting to the other processors.

UPI links eliminate some of the scalability limitations that surfaced in QPI. They use a directory-based home snoop coherency protocol and operate at either 10.4 GT/s or 9.6 GT/s. This is quite a bit different from previous generations. In addition to the various improvements done to the protocol layer, Skylake SP now implements a distributed CHA that is situated along with the LLC bank on each core. It's in charge of tracking the various requests from the core as well as responding to snoop requests from both local and remote agents. The ease of distributing the home agent is a result of Intel getting rid of the requirement for preallocation of resources at the home agent. This also means that future architectures should be able to scale up well.

Depending on the exact model, Skylake processors can scale from 2-way all the way up to 8-way multiprocessing. Note that the high-end models that support 8-way multiprocessing come only with three UPI links for this purpose, while the lower-end processors can have either two or three UPI links. Below are the typical configurations for those processors.
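The link counts constrain which socket topologies are possible: every socket can reach every other socket in a single hop only if it has at least one link per peer. The sketch below captures that check and converts a transfer rate into per-link bandwidth. The function names are hypothetical, and the figure of 2 bytes of payload per transfer in each direction is an assumption that is not stated in the text above.

```python
def fully_connected(sockets, upi_links_per_socket):
    """True if each socket can have a direct link to every other socket."""
    return upi_links_per_socket >= sockets - 1


def upi_link_bandwidth_gbs(giga_transfers_per_s, bytes_per_transfer=2):
    # Assumption: 2 bytes of payload per transfer, per direction.
    return giga_transfers_per_s * bytes_per_transfer


if __name__ == "__main__":
    for sockets, links in ((2, 2), (4, 2), (4, 3), (8, 3)):
        kind = "direct" if fully_connected(sockets, links) else "multi-hop"
        print(f"{sockets} sockets, {links} links each: {kind}")
    print("per-link, per-direction GB/s at 10.4 GT/s:",
          round(upi_link_bandwidth_gbs(10.4), 1))
```

Under this check, a 4-way system built from two-link parts and an 8-way system built from three-link parts both require some socket pairs to communicate through an intermediate socket.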

Integrated Omni-Path

- See also: Intel's Omni-Path

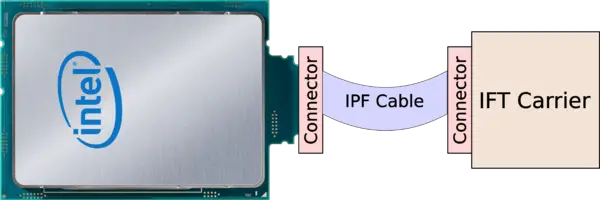

A number of Skylake SP models (specifically those with the "F" suffix) incorporate the Omni-Path Host Fabric Interface (HFI) on-package. This was previously done with the Knights Landing ("F" suffixed) models. This, in addition to improving cost and power efficiencies, also eliminates the dependency on the x16 PCIe lanes on the motherboard. With the HFI on package, the chip can be plugged in directly to the IFT (Internal Faceplate Transition) carrier via a separate IFP (Internal Faceplate-to-Processor) 1-port cable (effectively a Twinax cable).
