(Replaced support matrix, added missing intrinsics.) |

|||

| (11 intermediate revisions by 2 users not shown) | |||

| Line 1: | Line 1: | ||

| − | {{x86 title| | + | {{x86 title|AVX-512 Vector Neural Network Instructions (VNNI)}}{{x86 isa main}} |

'''{{x86|AVX-512}} Vector Neural Network Instructions''' ('''AVX512 VNNI''') is an [[x86]] extension, part of the {{x86|AVX-512}}, designed to accelerate [[convolutional neural network]]-based [[algorithms]]. | '''{{x86|AVX-512}} Vector Neural Network Instructions''' ('''AVX512 VNNI''') is an [[x86]] extension, part of the {{x86|AVX-512}}, designed to accelerate [[convolutional neural network]]-based [[algorithms]]. | ||

| Line 6: | Line 6: | ||

* <code>VPDPBUSD</code> - Multiplies the individual bytes (8-bit) of the first source operand by the corresponding bytes (8-bit) of the second source operand, producing intermediate word (16-bit) results which are summed and accumulated in the double word (32-bit) of the destination operand. | * <code>VPDPBUSD</code> - Multiplies the individual bytes (8-bit) of the first source operand by the corresponding bytes (8-bit) of the second source operand, producing intermediate word (16-bit) results which are summed and accumulated in the double word (32-bit) of the destination operand. | ||

| − | * <code>VPDPBUSDS</code> - Same as above except on intermediate sum overflow which saturates to 0x7FFF_FFFF/0x8000_0000 for positive/negative numbers. | + | ** <code>VPDPBUSDS</code> - Same as above except on intermediate sum overflow which saturates to 0x7FFF_FFFF/0x8000_0000 for positive/negative numbers. |

* <code>VPDPWSSD</code> - Multiplies the individual words (16-bit) of the first source operand by the corresponding word (16-bit) of the second source operand, producing intermediate word results which are summed and accumulated in the double word (32-bit) of the destination operand. | * <code>VPDPWSSD</code> - Multiplies the individual words (16-bit) of the first source operand by the corresponding word (16-bit) of the second source operand, producing intermediate word results which are summed and accumulated in the double word (32-bit) of the destination operand. | ||

| − | * <code>VPDPWSSDS</code> - Same as above except on intermediate sum overflow which saturates to 0x7FFF_FFFF/0x8000_0000 for positive/negative numbers. | + | ** <code>VPDPWSSDS</code> - Same as above except on intermediate sum overflow which saturates to 0x7FFF_FFFF/0x8000_0000 for positive/negative numbers. |

| − | [[ | + | == Motivation == |

| + | The major motivation behind the AVX512 VNNI extension is the observation that many tight [[convolutional neural network]] loops require the repeated multiplication of two 16-bit values or two 8-bit values and accumulate the result to a 32-bit accumulator. Using the {{x86|AVX512F|foundation AVX-512}}, for 16-bit, this is possible using two instructions - <code>VPMADDWD</code> which is used to multiply two 16-bit pairs and add them together followed a <code>VPADDD</code> which adds the accumulate value. | ||

| + | |||

| + | :[[File:vnni-vpdpwssd.svg|600px]] | ||

| + | |||

| + | Likewise, for 8-bit values, three instructions are needed - <code>VPMADDUBSW</code> which is used to multiply two 8-bit pairs and add them together, followed by a <code>VPMADDWD</code> with the value <code>1</code> in order to simply up-convert the 16-bit values to 32-bit values, followed by the <code>VPADDD</code> instruction which adds the result to an accumulator. | ||

| + | |||

| + | :[[File:vnni-vpdpbusd.svg|600px]] | ||

| + | |||

| + | To address those two common operations, two new instructions were added (as well as two saturated versions): <code>VPDPBUSD</code> fuses <code>VPMADDUBSW</code>, <code>VPMADDWD</code>, and <code>VPADDD</code> and <code>VPDPWSSD</code> fuses <code>VPMADDWD</code> and <code>VPADDD</code>. | ||

| + | |||

| + | :[[File:vnni-vpdpbusd-i.svg|400px]] [[File:vnni-vpdpwssd-i.svg|400px]] | ||

| + | |||

| + | == Detection == | ||

| + | Support for these instructions is indicated by the AVX512_VNNI feature flag. 128- and 256-bit vectors are supported if the AVX512VL flag is set as well. | ||

| + | |||

| + | The {{x86|AVX-VNNI}} extension adds AVX (VEX encoded) versions of these instructions operating on 128- and 256-bit vectors. | ||

| + | |||

| + | {| class="wikitable" | ||

| + | ! colspan="2" | {{x86|CPUID}} !! rowspan="2" | Instruction Set | ||

| + | |- | ||

| + | ! Input !! Output | ||

| + | |- | ||

| + | | EAX=07H, ECX=0 || EBX[bit 31] || AVX512VL | ||

| + | |- | ||

| + | | EAX=07H, ECX=0 || ECX[bit 11] || AVX512_VNNI | ||

| + | |} | ||

| + | |||

| + | == Microarchitecture support == | ||

| + | <!-- Wrong/incomplete? Visit https://en.wikichip.org/wiki/Template:avx512_support_matrix --> | ||

| + | {{avx512 support matrix|em=VL+VNNI}} | ||

| + | |||

| + | == Intrinsic functions == | ||

| + | <source lang=c> | ||

| + | // VPDPBUSD | ||

| + | __m128i _mm_dpbusd_epi32(__m128i, __m128i, __m128i); | ||

| + | __m128i _mm_mask_dpbusd_epi32(__m128i, __mmask8, __m128i, __m128i); | ||

| + | __m128i _mm_maskz_dpbusd_epi32(__mmask8, __m128i, __m128i, __m128i); | ||

| + | __m256i _mm256_dpbusd_epi32(__m256i, __m256i, __m256i); | ||

| + | __m256i _mm256_mask_dpbusd_epi32(__m256i, __mmask8, __m256i, __m256i); | ||

| + | __m256i _mm256_maskz_dpbusd_epi32(__mmask8, __m256i, __m256i, __m256i); | ||

| + | __m512i _mm512_dpbusd_epi32 (__m512i src, __m512i a, __m512i b); | ||

| + | __m512i _mm512_mask_dpbusd_epi32 (__m512i src, __mmask16 k, __m512i a, __m512i b); | ||

| + | __m512i _mm512_maskz_dpbusd_epi32 (__mmask16 k, __m512i src, __m512i a, __m512i b); | ||

| + | // VPDPBUSDS | ||

| + | __m128i _mm_dpbusds_epi32(__m128i, __m128i, __m128i); | ||

| + | __m128i _mm_mask_dpbusds_epi32(__m128i, __mmask8, __m128i, __m128i); | ||

| + | __m128i _mm_maskz_dpbusds_epi32(__mmask8, __m128i, __m128i, __m128i); | ||

| + | __m256i _mm256_dpbusds_epi32(__m256i, __m256i, __m256i); | ||

| + | __m256i _mm256_mask_dpbusds_epi32(__m256i, __mmask8, __m256i, __m256i); | ||

| + | __m256i _mm256_maskz_dpbusds_epi32(__mmask8, __m256i, __m256i, __m256i); | ||

| + | __m512i _mm512_dpbusds_epi32 (__m512i src, __m512i a, __m512i b); | ||

| + | __m512i _mm512_mask_dpbusds_epi32 (__m512i src, __mmask16 k, __m512i a, __m512i b); | ||

| + | __m512i _mm512_maskz_dpbusds_epi32 (__mmask16 k, __m512i src, __m512i a, __m512i b); | ||

| + | // VPDPWSSD | ||

| + | __m128i _mm_dpwssd_epi32(__m128i, __m128i, __m128i); | ||

| + | __m128i _mm_mask_dpwssd_epi32(__m128i, __mmask8, __m128i, __m128i); | ||

| + | __m128i _mm_maskz_dpwssd_epi32(__mmask8, __m128i, __m128i, __m128i); | ||

| + | __m256i _mm256_dpwssd_epi32(__m256i, __m256i, __m256i); | ||

| + | __m256i _mm256_mask_dpwssd_epi32(__m256i, __mmask8, __m256i, __m256i); | ||

| + | __m256i _mm256_maskz_dpwssd_epi32(__mmask8, __m256i, __m256i, __m256i); | ||

| + | __m512i _mm512_dpwssd_epi32 (__m512i src, __m512i a, __m512i b); | ||

| + | __m512i _mm512_mask_dpwssd_epi32 (__m512i src, __mmask16 k, __m512i a, __m512i b); | ||

| + | __m512i _mm512_maskz_dpwssd_epi32 (__mmask16 k, __m512i src, __m512i a, __m512i b); | ||

| + | // VPDPWSSDS | ||

| + | __m128i _mm_dpwssds_epi32(__m128i, __m128i, __m128i); | ||

| + | __m128i _mm_mask_dpwssds_epi32(__m128i, __mmask8, __m128i, __m128i); | ||

| + | __m128i _mm_maskz_dpwssds_epi32(__mmask8, __m128i, __m128i, __m128i); | ||

| + | __m256i _mm256_dpwssds_epi32(__m256i, __m256i, __m256i); | ||

| + | __m256i _mm256_mask_dpwssds_epi32(__m256i, __mmask8, __m256i, __m256i); | ||

| + | __m256i _mm256_maskz_dpwssds_epi32(__mmask8, __m256i, __m256i, __m256i); | ||

| + | __m512i _mm512_dpwssds_epi32 (__m512i src, __m512i a, __m512i b); | ||

| + | __m512i _mm512_mask_dpwssds_epi32 (__m512i src, __mmask16 k, __m512i a, __m512i b); | ||

| + | __m512i _mm512_maskz_dpwssds_epi32 (__mmask16 k, __m512i src, __m512i a, __m512i b); | ||

| + | </source> | ||

| + | |||

| + | == Bibliography == | ||

| + | * {{bib|hc|30|Intel}} | ||

| + | * Rodriguez, Andres, et al. "[https://ai.intel.com/nervana/wp-content/uploads/sites/53/2018/05/Lower-Numerical-Precision-Deep-Learning-Inference-Training.pdf Lower numerical precision deep learning inference and training]." Intel White Paper (2018). | ||

| + | * {{cite techdoc|title=Intel® Architecture Instruction Set Extensions and Future Features|url=https://www.intel.com/content/www/us/en/developer/articles/technical/intel-sdm.html|publ=Intel|pid=319433|rev=047|date=2022-12}} | ||

| + | |||

| + | [[Category:x86_extensions]] | ||

Latest revision as of 16:52, 15 March 2023

Instruction Set Architecture

- Instructions

- Addressing Modes

- Calling Convention

- Microarchitectures

- Model-Specific Register

- CPUID

- Assembly

- Interrupts

- Registers

- Micro-Ops

- Timer

AVX-512 Vector Neural Network Instructions (AVX512 VNNI) is an x86 extension, part of the AVX-512, designed to accelerate convolutional neural network-based algorithms.

Contents

Overview[edit]

The AVX512 VNNI x86 extension extends AVX-512 Foundation by introducing four new instructions for accelerating inner convolutional neural network loops.

-

VPDPBUSD- Multiplies the individual bytes (8-bit) of the first source operand by the corresponding bytes (8-bit) of the second source operand, producing intermediate word (16-bit) results which are summed and accumulated in the double word (32-bit) of the destination operand.-

VPDPBUSDS- Same as above except on intermediate sum overflow which saturates to 0x7FFF_FFFF/0x8000_0000 for positive/negative numbers.

-

-

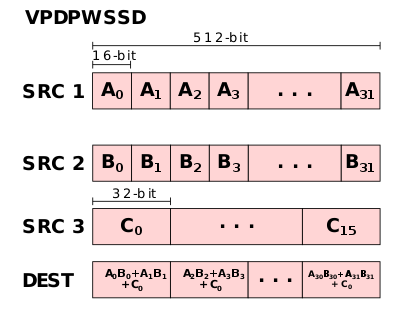

VPDPWSSD- Multiplies the individual words (16-bit) of the first source operand by the corresponding word (16-bit) of the second source operand, producing intermediate word results which are summed and accumulated in the double word (32-bit) of the destination operand.-

VPDPWSSDS- Same as above except on intermediate sum overflow which saturates to 0x7FFF_FFFF/0x8000_0000 for positive/negative numbers.

-

Motivation[edit]

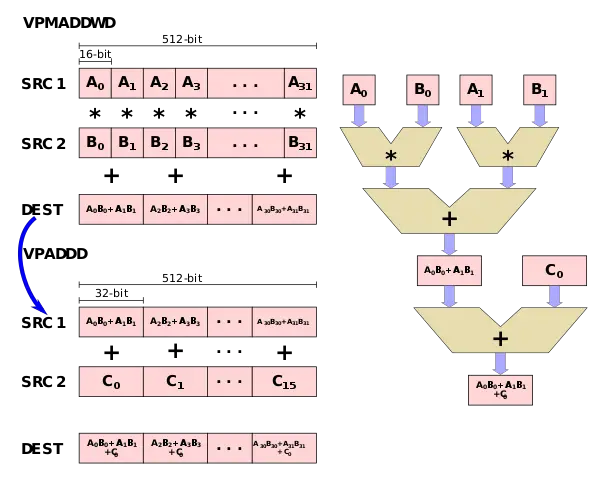

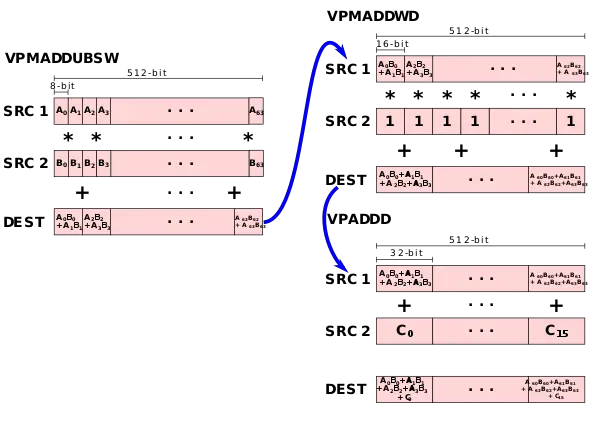

The major motivation behind the AVX512 VNNI extension is the observation that many tight convolutional neural network loops require the repeated multiplication of two 16-bit values or two 8-bit values and accumulate the result to a 32-bit accumulator. Using the foundation AVX-512, for 16-bit, this is possible using two instructions - VPMADDWD which is used to multiply two 16-bit pairs and add them together followed a VPADDD which adds the accumulate value.

Likewise, for 8-bit values, three instructions are needed - VPMADDUBSW which is used to multiply two 8-bit pairs and add them together, followed by a VPMADDWD with the value 1 in order to simply up-convert the 16-bit values to 32-bit values, followed by the VPADDD instruction which adds the result to an accumulator.

To address those two common operations, two new instructions were added (as well as two saturated versions): VPDPBUSD fuses VPMADDUBSW, VPMADDWD, and VPADDD and VPDPWSSD fuses VPMADDWD and VPADDD.

Detection[edit]

Support for these instructions is indicated by the AVX512_VNNI feature flag. 128- and 256-bit vectors are supported if the AVX512VL flag is set as well.

The AVX-VNNI extension adds AVX (VEX encoded) versions of these instructions operating on 128- and 256-bit vectors.

| CPUID | Instruction Set | |

|---|---|---|

| Input | Output | |

| EAX=07H, ECX=0 | EBX[bit 31] | AVX512VL |

| EAX=07H, ECX=0 | ECX[bit 11] | AVX512_VNNI |

Microarchitecture support[edit]

| Designer | Microarchitecture | Year | Support Level | ||||||||||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| F | CD | ER | PF | BW | DQ | VL | FP16 | IFMA | VBMI | VBMI2 | BITALG | VPOPCNTDQ | VP2INTERSECT | 4VNNIW | 4FMAPS | VNNI | BF16 | ||||

| Intel | Knights Landing | 2016 | ✔ | ✔ | ✔ | ✔ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | |

| Knights Mill | 2017 | ✔ | ✔ | ✔ | ✔ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✔ | ✘ | ✔ | ✔ | ✘ | ✘ | ||

| Skylake (server) | 2017 | ✔ | ✔ | ✘ | ✘ | ✔ | ✔ | ✔ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ||

| Cannon Lake | 2018 | ✔ | ✔ | ✘ | ✘ | ✔ | ✔ | ✔ | ✘ | ✔ | ✔ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ||

| Cascade Lake | 2019 | ✔ | ✔ | ✘ | ✘ | ✔ | ✔ | ✔ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✔ | ✘ | ||

| Cooper Lake | 2020 | ✔ | ✔ | ✘ | ✘ | ✔ | ✔ | ✔ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✔ | ✔ | ||

| Tiger Lake | 2020 | ✔ | ✔ | ✘ | ✘ | ✔ | ✔ | ✔ | ✘ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✘ | ✘ | ✔ | ✘ | ||

| Rocket Lake | 2021 | ✔ | ✔ | ✘ | ✘ | ✔ | ✔ | ✔ | ✘ | ✔ | ✔ | ✔ | ✔ | ✔ | ✘ | ✘ | ✘ | ✔ | ✘ | ||

| Alder Lake | 2021 | ✔ | ✔ | ✘ | ✘ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✘ | ✘ | ✔ | ✔ | ||

| Ice Lake (server) | 2021 | ✔ | ✔ | ✘ | ✘ | ✔ | ✔ | ✔ | ✘ | ✔ | ✔ | ✔ | ✔ | ✔ | ✘ | ✘ | ✘ | ✔ | ✘ | ||

| Sapphire Rapids | 2023 | ✔ | ✔ | ✘ | ✘ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✘ | ✘ | ✘ | ✔ | ✔ | ||

| AMD | Zen 4 | 2022 | ✔ | ✔ | ✘ | ✘ | ✔ | ✔ | ✔ | ✘ | ✔ | ✔ | ✔ | ✔ | ✔ | ✘ | ✘ | ✘ | ✔ | ✔ | |

| Zen 5 | 2024 | ✔ | ✔ | ✘ | ✘ | ✔ | ✔ | ✔ | ✘ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✘ | ✘ | ✔ | ✔ | ||

| Centaur | CHA | ✔ | ✔ | ✘ | ✘ | ✔ | ✔ | ✔ | ✘ | ✔ | ✔ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ||

Intrinsic functions[edit]

// VPDPBUSD

__m128i _mm_dpbusd_epi32(__m128i, __m128i, __m128i);

__m128i _mm_mask_dpbusd_epi32(__m128i, __mmask8, __m128i, __m128i);

__m128i _mm_maskz_dpbusd_epi32(__mmask8, __m128i, __m128i, __m128i);

__m256i _mm256_dpbusd_epi32(__m256i, __m256i, __m256i);

__m256i _mm256_mask_dpbusd_epi32(__m256i, __mmask8, __m256i, __m256i);

__m256i _mm256_maskz_dpbusd_epi32(__mmask8, __m256i, __m256i, __m256i);

__m512i _mm512_dpbusd_epi32 (__m512i src, __m512i a, __m512i b);

__m512i _mm512_mask_dpbusd_epi32 (__m512i src, __mmask16 k, __m512i a, __m512i b);

__m512i _mm512_maskz_dpbusd_epi32 (__mmask16 k, __m512i src, __m512i a, __m512i b);

// VPDPBUSDS

__m128i _mm_dpbusds_epi32(__m128i, __m128i, __m128i);

__m128i _mm_mask_dpbusds_epi32(__m128i, __mmask8, __m128i, __m128i);

__m128i _mm_maskz_dpbusds_epi32(__mmask8, __m128i, __m128i, __m128i);

__m256i _mm256_dpbusds_epi32(__m256i, __m256i, __m256i);

__m256i _mm256_mask_dpbusds_epi32(__m256i, __mmask8, __m256i, __m256i);

__m256i _mm256_maskz_dpbusds_epi32(__mmask8, __m256i, __m256i, __m256i);

__m512i _mm512_dpbusds_epi32 (__m512i src, __m512i a, __m512i b);

__m512i _mm512_mask_dpbusds_epi32 (__m512i src, __mmask16 k, __m512i a, __m512i b);

__m512i _mm512_maskz_dpbusds_epi32 (__mmask16 k, __m512i src, __m512i a, __m512i b);

// VPDPWSSD

__m128i _mm_dpwssd_epi32(__m128i, __m128i, __m128i);

__m128i _mm_mask_dpwssd_epi32(__m128i, __mmask8, __m128i, __m128i);

__m128i _mm_maskz_dpwssd_epi32(__mmask8, __m128i, __m128i, __m128i);

__m256i _mm256_dpwssd_epi32(__m256i, __m256i, __m256i);

__m256i _mm256_mask_dpwssd_epi32(__m256i, __mmask8, __m256i, __m256i);

__m256i _mm256_maskz_dpwssd_epi32(__mmask8, __m256i, __m256i, __m256i);

__m512i _mm512_dpwssd_epi32 (__m512i src, __m512i a, __m512i b);

__m512i _mm512_mask_dpwssd_epi32 (__m512i src, __mmask16 k, __m512i a, __m512i b);

__m512i _mm512_maskz_dpwssd_epi32 (__mmask16 k, __m512i src, __m512i a, __m512i b);

// VPDPWSSDS

__m128i _mm_dpwssds_epi32(__m128i, __m128i, __m128i);

__m128i _mm_mask_dpwssds_epi32(__m128i, __mmask8, __m128i, __m128i);

__m128i _mm_maskz_dpwssds_epi32(__mmask8, __m128i, __m128i, __m128i);

__m256i _mm256_dpwssds_epi32(__m256i, __m256i, __m256i);

__m256i _mm256_mask_dpwssds_epi32(__m256i, __mmask8, __m256i, __m256i);

__m256i _mm256_maskz_dpwssds_epi32(__mmask8, __m256i, __m256i, __m256i);

__m512i _mm512_dpwssds_epi32 (__m512i src, __m512i a, __m512i b);

__m512i _mm512_mask_dpwssds_epi32 (__m512i src, __mmask16 k, __m512i a, __m512i b);

__m512i _mm512_maskz_dpwssds_epi32 (__mmask16 k, __m512i src, __m512i a, __m512i b);

Bibliography[edit]

- Intel, IEEE Hot Chips 30 Symposium (HCS) 2018.

- Rodriguez, Andres, et al. "Lower numerical precision deep learning inference and training." Intel White Paper (2018).

- "Intel® Architecture Instruction Set Extensions and Future Features", Intel Order Nr. 319433, Rev. 047, December 2022