From WikiChip

Difference between revisions of "nervana/nnp/nnp-i 1300"

(nnpi 1300) |

|||

| Line 1: | Line 1: | ||

{{nervana title|NNP-I 1300}} | {{nervana title|NNP-I 1300}} | ||

| − | {{chip}} | + | {{chip |

| + | |name=NNP-I 1300 | ||

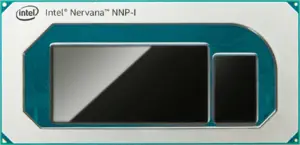

| + | |image=spring_hill_package_(front).png | ||

| + | |back image=spring_hill_package_(back).png | ||

| + | |designer=Intel | ||

| + | |manufacturer=Intel | ||

| + | |model number=NNP-I 1300 | ||

| + | |market=Server | ||

| + | |market 2=Edge | ||

| + | |first announced=November 12, 2019 | ||

| + | |first launched=November 12, 2019 | ||

| + | |family=NNP | ||

| + | |series=NNP-I | ||

| + | |microarch=Spring Hill | ||

| + | |process=10 nm | ||

| + | |transistors=8,500,000,000 | ||

| + | |technology=CMOS | ||

| + | |die area=239 mm² | ||

| + | |core count=24 | ||

| + | |tdp=75 W | ||

| + | }} | ||

'''NNP-I 1300''' is an inference [[neural processor]] designed by [[Intel Nervana]] and introduced in late 2019. Fabricated on [[Intel's 10 nm process]] based on the {{intel|Spring Hill|l=arch}} microarchitecture, the NNP-I 1300 comes in a PCIe Gen 3.0 [[accelerator card]] form factor with two NPU chips, each with all 24 {{intel|Spring Hill#Inference Compute Engine (ICE)|ICEs|l=arch}} enabled for a peak performance of 170 [[TOPS]] at a TDP of 75 W. | '''NNP-I 1300''' is an inference [[neural processor]] designed by [[Intel Nervana]] and introduced in late 2019. Fabricated on [[Intel's 10 nm process]] based on the {{intel|Spring Hill|l=arch}} microarchitecture, the NNP-I 1300 comes in a PCIe Gen 3.0 [[accelerator card]] form factor with two NPU chips, each with all 24 {{intel|Spring Hill#Inference Compute Engine (ICE)|ICEs|l=arch}} enabled for a peak performance of 170 [[TOPS]] at a TDP of 75 W. | ||

Revision as of 00:42, 1 February 2020

| Section | Property | Value |
| --- | --- | --- |
| General Info | Designer | Intel |
| General Info | Manufacturer | Intel |
| General Info | Model Number | NNP-I 1300 |
| General Info | Market | Server, Edge |
| General Info | Introduction | November 12, 2019 (announced); November 12, 2019 (launched) |
| General Specs | Family | NNP |
| General Specs | Series | NNP-I |
| Microarchitecture | Microarchitecture | Spring Hill |
| Microarchitecture | Process | 10 nm |
| Microarchitecture | Transistors | 8,500,000,000 |
| Microarchitecture | Technology | CMOS |
| Microarchitecture | Die | 239 mm² |
| Microarchitecture | Cores | 24 |
| Electrical | TDP | 75 W |

NNP-I 1300 is an inference neural processor designed by Intel Nervana and introduced in late 2019. Based on the Spring Hill microarchitecture and fabricated on Intel's 10 nm process, the NNP-I 1300 comes in a PCIe Gen 3.0 accelerator card form factor with two NPU chips. With all 24 ICEs enabled across the two chips, the card delivers a peak performance of 170 TOPS at a TDP of 75 W.
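As a rough illustration of what the quoted figures imply, the sketch below derives two secondary metrics from them: peak efficiency in TOPS per watt and transistor density. It assumes the 170 TOPS and 75 W figures both describe the whole card, and that the transistor count and die area describe the same single die; the page does not state either explicitly. The function names are hypothetical.

```python
# Figures quoted on this page.
PEAK_TOPS = 170.0            # peak performance (assumed card-level)
TDP_WATTS = 75.0             # thermal design power (assumed card-level)
TRANSISTORS = 8_500_000_000  # transistor count (assumed to match the die area below)
DIE_AREA_MM2 = 239.0         # die area in mm^2


def tops_per_watt(tops: float, watts: float) -> float:
    """Peak efficiency: tera-operations per second per watt of TDP."""
    return tops / watts


def density_mtr_per_mm2(transistors: int, area_mm2: float) -> float:
    """Transistor density in millions of transistors per mm^2."""
    return transistors / area_mm2 / 1e6


efficiency = tops_per_watt(PEAK_TOPS, TDP_WATTS)
density = density_mtr_per_mm2(TRANSISTORS, DIE_AREA_MM2)

print(f"Peak efficiency: {efficiency:.2f} TOPS/W")
print(f"Transistor density: {density:.1f} MTr/mm^2")
```

Under those assumptions this works out to roughly 2.27 TOPS/W and about 35.6 million transistors per mm². Both are peak, datasheet-style numbers rather than measured results.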

Facts about "NNP-I 1300 - Intel Nervana"

| Property | Value |
| --- | --- |
| back image | spring_hill_package_(back).png |
| core count | 24 |
| designer | Intel |
| die area | 239 mm² (0.37 in², 2.39 cm², 239,000,000 µm²) |
| family | NNP |
| first announced | November 12, 2019 |
| first launched | November 12, 2019 |
| full page name | nervana/nnp/nnp-i 1300 |
| instance of | microprocessor |
| ldate | November 12, 2019 |
| main image | spring_hill_package_(front).png |
| manufacturer | Intel |
| market segment | Server and Edge |
| microarchitecture | Spring Hill |
| model number | NNP-I 1300 |
| name | NNP-I 1300 |
| process | 10 nm (0.01 μm, 1.0e-5 mm) |
| series | NNP-I |
| tdp | 75 W (75,000 mW, 0.101 hp, 0.075 kW) |
| technology | CMOS |
| transistor count | 8,500,000,000 |