Sophon BM1680


Sophon BM1680 is a neural processor designed by Bitmain and introduced in 2017. The BM1680 can perform both neural network inference and training. Delivering up to 2 TFLOPS single-precision (SP), it has a typical power dissipation of 25 W and a peak power of 41 W. The chip was taped out in April 2017 and began sampling in June 2017.
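The power and performance figures above allow a rough efficiency estimate. The Python sketch below divides the quoted 2 TFLOPS single-precision peak by the 25 W typical and 41 W peak power figures. It assumes peak throughput is reached at both power levels, which is an idealization rather than a measured result.

```python
# Rough energy-efficiency estimate using only the figures quoted above.
# Assumption: the 2 TFLOPS peak is sustained at both power levels,
# which real workloads generally do not achieve.
PEAK_SP_FLOPS = 2e12     # 2 TFLOPS, single precision
TYPICAL_POWER_W = 25.0   # typical power dissipation
PEAK_POWER_W = 41.0      # peak power (TDP)


def gflops_per_watt(flops: float, watts: float) -> float:
    """Return efficiency in GFLOPS per watt."""
    return flops / watts / 1e9


typical_eff = gflops_per_watt(PEAK_SP_FLOPS, TYPICAL_POWER_W)
worst_eff = gflops_per_watt(PEAK_SP_FLOPS, PEAK_POWER_W)
print(f"At typical power: {typical_eff:.1f} GFLOPS/W")  # At typical power: 80.0 GFLOPS/W
print(f"At peak power: {worst_eff:.1f} GFLOPS/W")       # At peak power: 48.8 GFLOPS/W
```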


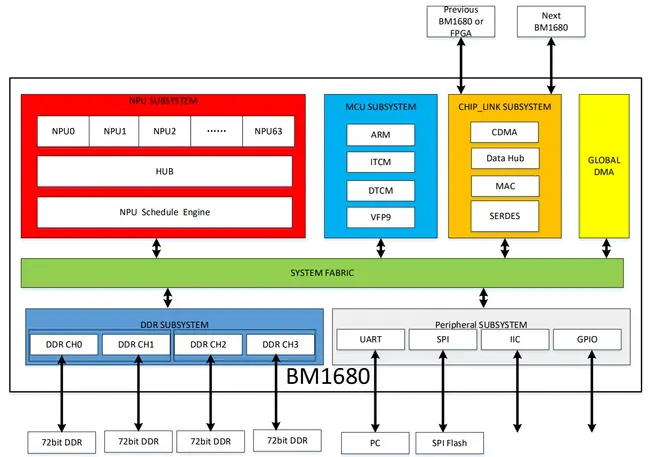


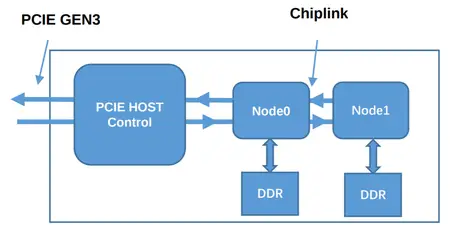


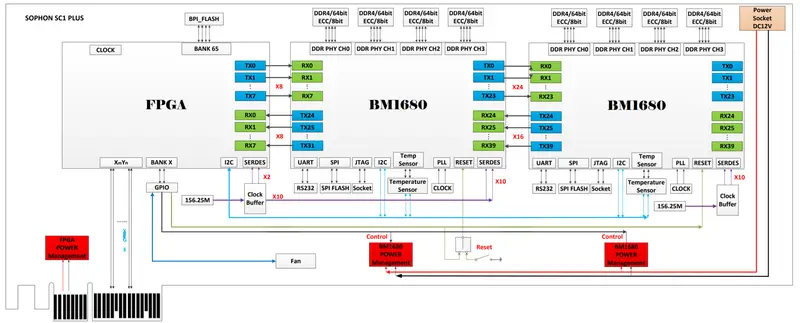


Latest revision as of 08:59, 11 August 2018

| Sophon BM1680 | |
|---|---|
| General Info | |
| Designer | Bitmain |
| Manufacturer | TSMC |
| Model Number | BM1680 |
| Market | Artificial Intelligence |
| Introduction | October 25, 2017 (announced); November 8, 2017 (launched) |
| General Specs | |
| Family | Sophon |
| Microarchitecture | |
| Process | 28 nm |
| Technology | CMOS |
| Max Memory | 16 GiB |
| Electrical | |
| Vcore | 0.9 V ± 5% |
| VI/O | 1.8 V ± 5% |
| TDP | 41 W |
| TDP (Typical) | 25 W |
| Operating Temperature | 0 °C – 125 °C |
| Packaging | |
| Package | FCBGA-1599 |
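To show what the ± 5% tolerances mean in practice, the sketch below computes the allowed supply window for each rail from the nominal values in the table. The helper name `voltage_window` is hypothetical; it simply applies the percentage symmetrically around the nominal voltage.

```python
# Supply-voltage windows implied by the nominal values and the
# +/-5% tolerances listed in the table (Vcore 0.9 V, VI/O 1.8 V).
def voltage_window(nominal_v: float, tolerance_pct: float) -> tuple[float, float]:
    """Return (minimum, maximum) voltage for a symmetric percentage tolerance."""
    delta = nominal_v * tolerance_pct / 100.0
    return nominal_v - delta, nominal_v + delta


for name, nominal in (("Vcore", 0.9), ("VI/O", 1.8)):
    lo, hi = voltage_window(nominal, 5.0)
    print(f"{name}: {lo:.3f} V to {hi:.3f} V")
# Vcore: 0.855 V to 0.945 V
# VI/O: 1.710 V to 1.890 V
```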


Manufactured on TSMC's 28HPC+ process, the BM1680 is capable of 80 billion algorithmic operations per second. Bitmain claims the chip is designed not only for inference but also for training of neural networks, and that it is suited to common artificial neural network (ANN) types such as convolutional (CNN), recurrent (RNN), and deep (DNN) neural networks.

Overview

The chip consists of five subsystems: NPU, MCU, Chip Link, Memory, and Peripherals.

The MCU subsystem is a low-power 32-bit embedded ARM microcontroller that can boot from SPI flash (ITCM interface). The microcontroller has 8 KiB of L1 instruction cache (L1I$) and 8 KiB of L1 data cache (L1D$). It also includes a VFPv2 coprocessor for floating-point support.

The NPU subsystem consists of 64 NPUs, a hub, and an NPU Schedule Engine, which controls the data flow to the individual NPUs. Bitmain has not disclosed many details of the NPU cores, but each core is known to have 512 KiB of program-visible SRAM and to support 64 single-precision operations. With 64 NPUs, the chip has a total of 32 MiB of on-chip SRAM and a peak performance of 2 TFLOPS (single-precision).
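The NPU figures can be cross-checked with simple arithmetic. The sketch below confirms the 32 MiB SRAM total and the per-NPU share of the 2 TFLOPS peak. It also derives an implied clock frequency, which the text does not state: this assumes the 64 single-precision operations are issued per clock cycle and count as one FLOP each, so treat that number as an estimate only.

```python
# Back-of-the-envelope check of the NPU subsystem figures.
NUM_NPUS = 64
SRAM_PER_NPU_KIB = 512
PEAK_SP_FLOPS = 2e12
SP_OPS_PER_NPU = 64  # assumption: per clock cycle, one FLOP each

total_sram_mib = NUM_NPUS * SRAM_PER_NPU_KIB / 1024
flops_per_npu = PEAK_SP_FLOPS / NUM_NPUS
implied_clock_mhz = PEAK_SP_FLOPS / (NUM_NPUS * SP_OPS_PER_NPU) / 1e6

print(total_sram_mib)            # 32.0
print(flops_per_npu / 1e9)       # 31.25
print(round(implied_clock_mhz))  # 488
# If each operation were a fused multiply-add counted as two FLOPs,
# the implied clock would halve to roughly 244 MHz.
```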

BMDNN Chiplink

The chip incorporates Bitmain's proprietary fabric, called BMDNN Chiplink technology. The fabric is a flexible, low-latency link that communicates over a high-speed SerDes PHY. Each chip has two Chiplink ports, which allows multiple BM1680s to be daisy-chained together to form a larger network.

In a typical configuration (i.e., a PCIe accelerator card), two BM1680 chips are wired together using Chiplink. The first node in the chain is connected to a host controller (e.g., a custom ASIC or simply an FPGA), which provides a PCIe interface to the host processor (e.g., a Xeon in a typical server). Data from the host processor is sent to the host controller on the accelerator card, which then distributes it across all the NPUs on all the available nodes.
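The data flow described above can be modeled as a linear chain in which the host controller feeds the first node and Chiplink carries data onward. The Python sketch below is a hypothetical model, not Bitmain's software: the `ChainNode` class, the `distribute` function, and the round-robin placement policy are all assumptions made for illustration, since the actual scheduling done by the host controller and the NPU Schedule Engine is not documented here.

```python
from dataclasses import dataclass, field

NPUS_PER_CHIP = 64  # from the NPU subsystem description


@dataclass
class ChainNode:
    """One BM1680 in a hypothetical Chiplink daisy-chain model."""
    index: int  # 0 = node attached to the host controller
    # local NPU id -> work items assigned to that NPU
    assignments: dict[int, list[int]] = field(default_factory=dict)

    @property
    def chiplink_hops(self) -> int:
        # In a linear daisy chain, data for node k crosses k
        # chip-to-chip links after entering node 0.
        return self.index


def distribute(work_items: list[int], num_nodes: int) -> list[ChainNode]:
    """Round-robin work items over every NPU on every node (assumed policy)."""
    nodes = [ChainNode(i) for i in range(num_nodes)]
    total_npus = num_nodes * NPUS_PER_CHIP
    for n, item in enumerate(work_items):
        global_npu = n % total_npus
        node = nodes[global_npu // NPUS_PER_CHIP]
        node.assignments.setdefault(global_npu % NPUS_PER_CHIP, []).append(item)
    return nodes


# Two chips, as on Bitmain's card; 200 dummy work items.
nodes = distribute(list(range(200)), num_nodes=2)
for node in nodes:
    total = sum(len(items) for items in node.assignments.values())
    print(node.index, node.chiplink_hops, total)
# 0 0 128
# 1 1 72
```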

Bitmain's current offering is limited to two BM1680 chips per accelerator card, although in theory the chip can be chained to form a much larger network. The primary limits on the network are power consumption, thermal dissipation, and PCIe bandwidth: Bitmain requires four PCIe lanes per node (8 GT/s per lane, about 3.9 GB/s per node). For example, Bitmain's current offering places two nodes on a PCIe Gen3 x8 card.
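The 3.9 GB/s figure follows from the PCIe 3.0 signaling rate. The sketch below computes raw one-direction bandwidth for PCIe Gen3 links (8 GT/s per lane, 128b/130b encoding) and the lanes a two-node card needs under Bitmain's x4-per-node requirement. The `max_nodes` helper is a hypothetical extrapolation that ignores the power and thermal limits mentioned above.

```python
# PCIe 3.0 signals at 8 GT/s per lane and uses 128b/130b line encoding.
GEN3_GT_PER_S = 8.0
ENCODING_EFFICIENCY = 128 / 130
LANES_PER_NODE = 4  # Bitmain's stated requirement per BM1680 node


def gen3_bandwidth_gbps(lanes: int) -> float:
    """Raw one-direction bandwidth in GB/s after line encoding.

    Packet-level protocol overhead is ignored, so usable throughput is lower.
    """
    return GEN3_GT_PER_S * ENCODING_EFFICIENCY * lanes / 8  # 8 bits per byte


def max_nodes(slot_lanes: int) -> int:
    """Hypothetical: how many x4 nodes a slot's lanes could feed."""
    return slot_lanes // LANES_PER_NODE


nodes_per_card = 2
lanes_needed = nodes_per_card * LANES_PER_NODE
print(f"per node: {gen3_bandwidth_gbps(LANES_PER_NODE):.2f} GB/s")            # per node: 3.94 GB/s
print(f"x{lanes_needed} card: {gen3_bandwidth_gbps(lanes_needed):.2f} GB/s")  # x8 card: 7.88 GB/s
print(max_nodes(16))  # 4
```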

Below is a schematic of Bitmain's SC+ PCIe Gen 3 accelerator card.

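Memory controller

| Integrated Memory Controller | |
|---|---|
| Type | DDR4-2666 |
| ECC | Yes |
| Max Memory | 16 GiB |
| Controllers | 1 |
| Channels | 4 |
| Max Bandwidth | 79.47 GiB/s |
| Bandwidth (single channel) | 19.87 GiB/s |
| Bandwidth (dual channel) | 39.74 GiB/s |
| Bandwidth (quad channel) | 79.47 GiB/s |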
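The bandwidth entries in the table can be reproduced from the DDR4-2666 transfer rate. This sketch assumes a standard 64-bit data path per channel (ECC bits are not counted toward bandwidth) and reports results in GiB/s to match the table.

```python
# DDR4-2666 runs at 2666 2/3 MT/s (a 1333 1/3 MHz clock, two transfers per cycle).
TRANSFERS_PER_S = 8000 / 3 * 1e6
BYTES_PER_TRANSFER = 8  # assumed 64-bit data path per channel; ECC bits excluded
GIB = 2**30


def bandwidth_gib_s(channels: int) -> float:
    """Theoretical peak bandwidth in GiB/s for the given channel count."""
    return TRANSFERS_PER_S * BYTES_PER_TRANSFER * channels / GIB


for channels in (1, 2, 4):
    print(f"{channels} channel(s): {bandwidth_gib_s(channels):.2f} GiB/s")
# 1 channel(s): 19.87 GiB/s
# 2 channel(s): 39.74 GiB/s
# 4 channel(s): 79.47 GiB/s
```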

Expansions

- SPI Flash Controller

- UART

- Two-wire I2C

| Property | Value |
|---|---|
| Has subobject | Sophon BM1680 - Bitmain#package |
| core voltage | 0.9 V (9 dV, 90 cV, 900 mV) |
| core voltage tolerance | 5% |
| designer | Bitmain |
| family | Sophon |
| first announced | October 25, 2017 |
| first launched | November 8, 2017 |
| full page name | bitmain/sophon/bm1680 |
| has ecc memory support | true |
| instance of | microprocessor |
| io voltage | 1.8 V (18 dV, 180 cV, 1,800 mV) |
| io voltage tolerance | 5% |
| ldate | November 8, 2017 |
| manufacturer | TSMC |
| market segment | Artificial Intelligence |
| max memory | 16,384 MiB (16,777,216 KiB, 17,179,869,184 B, 16 GiB, 0.0156 TiB) |
| max memory bandwidth | 79.47 GiB/s (81,377.28 MiB/s, 85.33 GB/s, 85,330.263 MB/s, 0.0776 TiB/s, 0.0853 TB/s) |
| max memory channels | 4 |
| max operating temperature | 125 °C |
| min operating temperature | 0 °C |
| model number | BM1680 |
| name | Sophon BM1680 |
| package | FCBGA-1599 |
| peak flops (single-precision) | 2,000,000,000,000 FLOPS (2,000,000,000 KFLOPS, 2,000,000 MFLOPS, 2,000 GFLOPS, 2 TFLOPS, 0.002 PFLOPS, 2.0e-6 EFLOPS, 2.0e-9 ZFLOPS) |
| process | 28 nm (0.028 μm, 2.8e-5 mm) |
| supported memory type | DDR4-2666 |
| tdp | 41 W (41,000 mW, 0.055 hp, 0.041 kW) |
| tdp (typical) | 25 W (25,000 mW, 0.0335 hp, 0.025 kW) |
| technology | CMOS |