Revision as of 14:29, 6 February 2020

| Gaudi µarch | |
| --- | --- |
| Arch Type | NPU |
| Designer | Habana |
| Manufacturer | TSMC |
| Introduction | 2019 |
| Process | 16 nm |
| PE Configs | 8 |
| Contemporary | Goya |

Gaudi is a 16-nanometer microarchitecture for training neural processors designed by Habana Labs.

Process Technology

Gaudi-based processors are fabricated on TSMC's 16-nanometer process.

Architecture

Block Diagram

Overview

Gaudi was designed as a microarchitecture for the acceleration of training in the data center. It is offered as a PCIe-based accelerator card or, alternatively, as an OCP Open Accelerator Module (OAM).

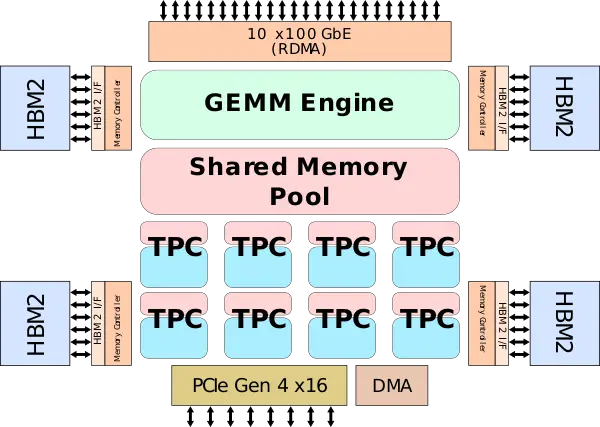

The design itself is based on the company's first inference chip, Goya, but adds components to enable efficient scale-out. Gaudi features eight Tensor Processing Cores (TPCs), a General Matrix Multiply (GEMM) engine, and a large pool of shared memory. To support large-scale deployments, it also integrates a large set of Ethernet ports and high-bandwidth memory.

General Matrix Multiply (GEMM) engine

Gaudi incorporates a large, shared General Matrix Multiply (GEMM) engine that operates on 16-bit integers. Habana has withheld most details about the GEMM engine. Contemporary chips such as Google's TPU use a 128x128 systolic array, and it is not unreasonable to expect Habana's GEMM engine to be built similarly. Counting each multiply-accumulate as two operations, a 128x128 array clocked at 1.2 to 1.5 GHz would peak at roughly 39 to 49 teraFLOPS, while an array twice as large in each dimension (256x256, with four times as many multiply-accumulate units) would peak at about 131 teraFLOPS at 1 GHz.
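The teraFLOPS estimates above follow from simple arithmetic. The sketch below assumes each processing element performs one multiply-accumulate per cycle and counts each multiply-accumulate as two floating-point operations; the array sizes and clocks are the hypothetical configurations discussed above, not disclosed Gaudi specifications.

```python
def peak_tflops(rows: int, cols: int, clock_ghz: float) -> float:
    """Peak dense throughput of a rows x cols systolic array, in teraFLOPS.

    Assumes one multiply-accumulate (MAC) per processing element per cycle,
    with each MAC counted as two floating-point operations.
    """
    macs_per_cycle = rows * cols
    flops_per_second = macs_per_cycle * 2 * clock_ghz * 1e9
    return flops_per_second / 1e12


# Hypothetical configurations from the text (not Habana-confirmed).
for rows, cols, ghz in [(128, 128, 1.2), (128, 128, 1.5), (256, 256, 1.0)]:
    print(f"{rows}x{cols} @ {ghz} GHz: {peak_tflops(rows, cols, ghz):.1f} TFLOPS")
```

Doubling each side of the array quadruples the number of MACs, which is why the 256x256 case at a lower clock still comes out well ahead of the 128x128 case.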

Tensor Processing Cores (TPC)

There are eight TPCs integrated on Gaudi, each with its own local memory and without caches. Habana has not disclosed the amount of local memory. The on-die caches and memory can be either hardware-managed or fully software-managed, allowing the compiler to optimize data residency and reduce data movement. Each TPC is a VLIW DSP design optimized for AI applications, including AI-specific instructions and operations. The design is an enhanced version of the TPC found in the company's prior inference accelerator, Goya.
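Because the TPC local memory can be software-managed, a compiler typically tiles large operations so that each working set fits on-chip. The following is a minimal, hypothetical sketch of that idea in plain Python: `LOCAL_MEM_BYTES` is an invented placeholder (Habana has not disclosed the real size), and `max_square_tile` and `tiled_matmul` are illustrative helpers, not part of any Habana toolchain.

```python
import math

# Hypothetical scratchpad budget: the real TPC local memory size is
# undisclosed, so this value is purely illustrative.
LOCAL_MEM_BYTES = 64 * 1024


def max_square_tile(bytes_per_elem: int, budget: int = LOCAL_MEM_BYTES) -> int:
    """Largest square tile edge T such that one tile each of A, B, and C
    (3 * T * T elements) fits in the local memory budget at the same time."""
    return math.isqrt(budget // (3 * bytes_per_elem))


def tiled_matmul(a, b, tile):
    """Compute C = A @ B tile by tile. Each (i0, j0, k0) step touches only one
    tile of A, B, and C, mimicking explicit software-managed residency."""
    n, m, p = len(a), len(b), len(b[0])
    c = [[0.0] * p for _ in range(n)]
    for i0 in range(0, n, tile):
        for j0 in range(0, p, tile):
            for k0 in range(0, m, tile):
                # On real hardware, the compiler would schedule transfers of
                # these tiles into local memory before computing on them.
                for i in range(i0, min(i0 + tile, n)):
                    for k in range(k0, min(k0 + tile, m)):
                        aik = a[i][k]
                        for j in range(j0, min(j0 + tile, p)):
                            c[i][j] += aik * b[k][j]
    return c


print(max_square_tile(2))  # 16-bit elements under the placeholder budget
print(tiled_matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]], tile=1))
```

With the placeholder 64 KiB budget, 16-bit elements allow a 104x104 tile. The point of the design is that residency decisions are made statically in software rather than by a cache.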

The TPC supports mixed-precision operations, including 8-bit, 16-bit, and 32-bit SIMD vector operations for both integer and floating-point data. This allows the programmer to control the tolerable accuracy loss on a per-model basis. Goya offers both coarse-grained precision control and fine-grained control down to the tensor level. Compared to Goya, the TPC in Gaudi adds support for bfloat16 as well as additional operations and functionality geared toward training.
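bfloat16 keeps float32's sign bit and 8-bit exponent but shortens the mantissa to 7 bits, so converting from float32 amounts to rounding away the low 16 bits. The sketch below shows a generic round-to-nearest-even conversion; it illustrates the format itself and is not a description of how Gaudi's TPC performs the conversion in hardware.

```python
import struct


def float32_to_bfloat16_bits(x: float) -> int:
    """Round a float32 value to bfloat16 (round-to-nearest-even) and return
    the 16-bit pattern. NaN handling is omitted for brevity."""
    (bits,) = struct.unpack("<I", struct.pack("<f", x))
    # Rounding bias: 0x7FFF plus the lowest kept bit, so exact ties go to even.
    rounding_bias = 0x7FFF + ((bits >> 16) & 1)
    return ((bits + rounding_bias) >> 16) & 0xFFFF


def bfloat16_bits_to_float32(b: int) -> float:
    """Widen bfloat16 back to float32 by appending 16 zero mantissa bits."""
    (x,) = struct.unpack("<f", struct.pack("<I", b << 16))
    return x


print(hex(float32_to_bfloat16_bits(1.0)))                         # 0x3f80
print(bfloat16_bits_to_float32(float32_to_bfloat16_bits(3.14159)))  # 3.140625
```

Because the exponent field is unchanged, bfloat16 covers the same dynamic range as float32 and trades away only precision, which is why it suits training workloads.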

High-Bandwidth Memory (HBM2)

- See also: CoWoS and High-Bandwidth Memory

Gaudi is fabricated on TSMC's 16 nm process (16FF+) and uses TSMC's 2.5D CoWoS interposer technology to integrate four stacks of HBM2 memory. Each stack has a capacity of 8 GiB and operates at a signaling rate of 2 GT/s, for a total of 32 GiB and an aggregate bandwidth of about 1 TB/s.
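The capacity and bandwidth totals can be checked with a short calculation. The 1024-bit interface per stack is an assumption taken from the standard HBM2 stack width rather than from Habana's disclosures; the stack count, per-stack capacity, and 2 GT/s rate come from the text above.

```python
STACKS = 4
CAPACITY_PER_STACK_GIB = 8
BUS_WIDTH_BITS = 1024      # standard HBM2 stack interface width (assumed)
TRANSFER_RATE_GTPS = 2.0   # 2 GT/s per pin, as stated above


def hbm_bandwidth_gbps(stacks: int, bus_bits: int, gtps: float) -> float:
    """Aggregate peak bandwidth in GB/s (10^9 bytes per second)."""
    per_stack = bus_bits * gtps / 8  # bits per transfer * GT/s / 8 -> GB/s
    return stacks * per_stack


total_bw = hbm_bandwidth_gbps(STACKS, BUS_WIDTH_BITS, TRANSFER_RATE_GTPS)
total_capacity = STACKS * CAPACITY_PER_STACK_GIB
print(f"{total_capacity} GiB, {total_bw:.0f} GB/s")  # 32 GiB, 1024 GB/s
```

Each stack contributes 256 GB/s, so four stacks reach 1,024 GB/s, roughly 1 TB/s.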

Scale-Out

Habana made high throughput and low latency key requirements. For inter-processor communication, Gaudi relies on standard Ethernet rather than a proprietary interconnect, which also lets customers use off-the-shelf products from an existing ecosystem. To that end, Gaudi integrates 10 ports of 100 Gigabit Ethernet (or, alternatively, 20 ports of 50 GbE). RDMA over Converged Ethernet (RoCE) is also integrated directly on-die alongside the ports. This level of integration eliminates both the PCIe switch and an RDMA switch. Because this is done on a per-port basis, Gaudi's aggregate bandwidth is around ten times that of solutions without a dedicated interconnect, such as Nvidia V100 systems that do not use NVLink.
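The aggregate Ethernet bandwidth follows directly from the port configuration. The sketch below assumes the "ten times" comparison is against a single 100 GbE link, which is an interpretation rather than a figure Habana stated. Rates are per direction.

```python
def aggregate_gbps(ports: int, gbps_per_port: int) -> int:
    """Total Ethernet line rate across all ports, in Gb/s per direction."""
    return ports * gbps_per_port


# Port configurations described above.
CONFIGS = {"10 x 100 GbE": (10, 100), "20 x 50 GbE": (20, 50)}
BASELINE_GBPS = 100  # assumed baseline: a single 100 GbE link

for name, (ports, speed) in CONFIGS.items():
    total = aggregate_gbps(ports, speed)
    print(f"{name}: {total} Gb/s ({total / 8:.0f} GB/s), "
          f"{total / BASELINE_GBPS:.0f}x the baseline")
```

Both configurations provide the same 1,000 Gb/s (125 GB/s) total. The 50 GbE mode simply splits it across more, narrower links.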

From a programmability point of view, Gaudi supports parameter, tensor, and sub-tensor transfers over Ethernet. For quality of service, there are hardware hooks for congestion control and congestion avoidance. Additionally, the fabric supports both lossless and lossy operation.

Package

PCIe

OAM
