Astra is a petascale ARM supercomputer designed for Sandia National Laboratories and expected to be deployed in mid-2018. It is the first ARM-based supercomputer to exceed 1 petaFLOPS.

History

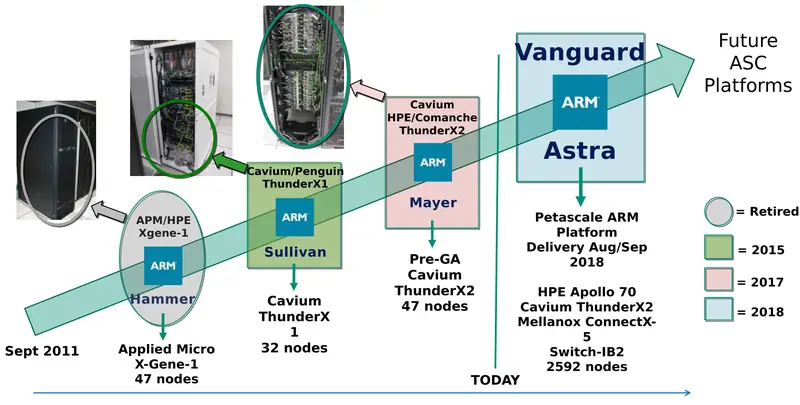

Astra is an ARM-based supercomputer expected to be deployed at Sandia National Laboratories. The computer is one of a series of prototypes commissioned by the U.S. Department of Energy as part of a program that evaluates the feasibility of emerging high-performance computing architectures as production platforms to support NNSA's mission. Specifically, Astra is designed to demonstrate the viability of ARM for DOE NNSA supercomputing. Astra is the fourth prototype in the Vanguard project and is by far the largest ARM-based supercomputer designed to that point.

Overview

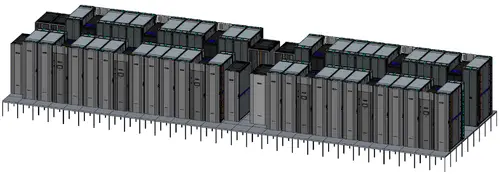

Astra is the first ARM-based petascale supercomputer. The system consists of 5,184 Cavium ThunderX2 CN9975 processors, draws slightly over 1.2 MW of power, and has a peak performance of 2.322 petaFLOPS. Each ThunderX2 CN9975 has 28 cores operating at 2 GHz. Astra has around 700 terabytes of memory and uses a 3-level fat-tree interconnect.

| Components | | Total Memory | | |
|---|---|---|---|---|
| Processors | 5,184 (2 × 72 × 36) | Type | DDR4 | NVMe |
| Racks | 36 | Node | 128 GiB | ? |
| Peak FLOPS | 2.322 petaFLOPS (SP), 1.161 petaFLOPS (DP) | Astra | 324 TiB | 403 TB |
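The system totals in the table follow from the per-node figures quoted elsewhere in this article. The following is a minimal sketch of that arithmetic; the constant and function names are illustrative, and the per-core single-precision rate of 16 GFLOPS is taken from the article rather than measured.

```python
# System-level totals for Astra, derived from figures quoted in the article.
RACKS = 36
NODES_PER_RACK = 72
SOCKETS_PER_NODE = 2
CORES_PER_SOCKET = 28
SP_GFLOPS_PER_CORE = 16   # 2 GHz x 8 SP FLOPs/cycle, as stated in the article
DP_GFLOPS_PER_CORE = 8    # the article's DP figures are half of SP
NODE_MEMORY_GIB = 128


def system_totals():
    """Return processor count, peak petaFLOPS and DDR4 capacity for the system."""
    nodes = RACKS * NODES_PER_RACK
    processors = nodes * SOCKETS_PER_NODE
    cores = processors * CORES_PER_SOCKET
    return {
        "nodes": nodes,
        "processors": processors,
        "cores": cores,
        "sp_pflops": cores * SP_GFLOPS_PER_CORE / 1e6,  # GFLOPS -> PFLOPS
        "dp_pflops": cores * DP_GFLOPS_PER_CORE / 1e6,
        "memory_tib": nodes * NODE_MEMORY_GIB / 1024,   # GiB -> TiB
    }


if __name__ == "__main__":
    for name, value in system_totals().items():
        print(f"{name}: {value}")
```

Under these assumptions the sketch reproduces the 5,184 processors, 2.322 petaFLOPS (SP), and 324 TiB of DDR4 listed above.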

Architecture

System

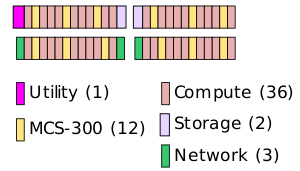

Astra consists of 36 compute racks, 12 cooling racks, 3 networking racks, 2 storage racks, and a single utility rack.

The system has a peak Wall power of 1.6 MW.

| Total Power (kW) | | | |
|---|---|---|---|
| Wall | Peak | Nominal (LINPACK) | Idle |
| 1,631.5 | 1,440.3 | 1,357.3 | 274.9 |

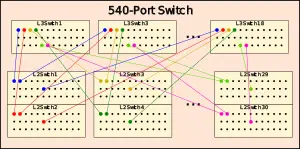

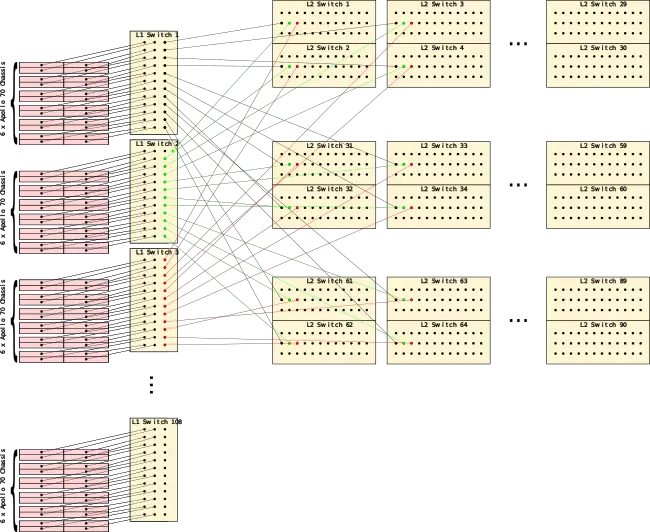

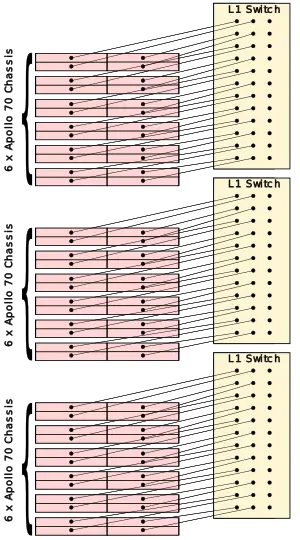

Servers are linked by a Mellanox InfiniBand (IB) EDR interconnect in a three-level fat-tree topology that is tapered 2:1 at level 1. Astra uses three 540-port switches. Each is built from 30 level 2 switches that provide 18 external ports each (540 in total), with the remaining 18 links on each level 2 switch going to the 18 level 3 switches.
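The port counts for one 540-port switch can be checked with a short calculation. This is only a sketch of one reading of the description above: it assumes each level 2 switch splits its ports 18 down and 18 up, and that the upward links are spread evenly over the 18 level 3 switches. The names are illustrative.

```python
# Port arithmetic for one 540-port switch, as described in the text.
L2_SWITCHES = 30
L2_DOWN_PORTS = 18   # external ports per level 2 switch
L2_UP_LINKS = 18     # links from each level 2 switch toward level 3
L3_SWITCHES = 18


def director_ports():
    """Return (external ports, internal L2-to-L3 links, links per L3 switch)."""
    external = L2_SWITCHES * L2_DOWN_PORTS
    internal = L2_SWITCHES * L2_UP_LINKS
    # Assumption: internal links are distributed evenly over the L3 switches.
    per_l3 = internal // L3_SWITCHES
    return external, internal, per_l3


if __name__ == "__main__":
    external, internal, per_l3 = director_ports()
    print(f"external ports: {external}")
    print(f"internal links: {internal}")
    print(f"links per level 3 switch: {per_l3}")
```

With an equal number of downward and upward links on every level 2 switch, this stage of the tree is not tapered; under the even-distribution assumption each level 3 switch terminates 30 links, one from every level 2 switch.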

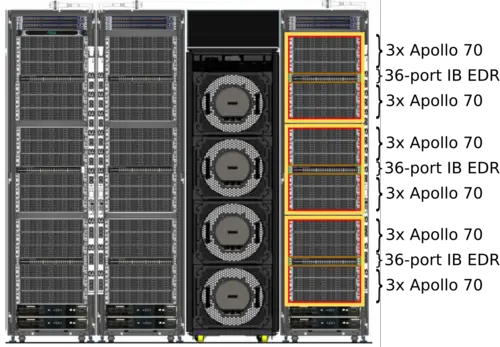

Compute Rack

Each of the 36 compute racks consists of 18 HPE Apollo 70 chassis along with three 36-port InfiniBand EDR switches. There is a single switch for every 6 chassis (24 nodes), which takes up 24 of its ports.

The IB link from each of the 24 nodes goes directly to the 36-port switch. The 12 remaining ports go to the level 2 switches described above. A full rack with 72 nodes has 4,032 cores, yielding a peak performance of 64.51 teraFLOPS with 24.57 TB/s of peak memory bandwidth.
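The 2:1 taper at level 1 follows directly from how the 36 ports of each rack-level switch are split. The sketch below works through that split and counts the uplinks leaving all compute racks; the names are illustrative, and how the uplinks are distributed across the three 540-port switches is not stated in the article, so the final comparison is only a capacity check.

```python
# Level 1 (rack) switch port split and the resulting taper ratio.
LEAF_PORTS = 36
CHASSIS_PER_LEAF = 6
NODES_PER_CHASSIS = 4
LEAVES_PER_RACK = 3
RACKS = 36
DIRECTOR_PORTS = 3 * 540   # three 540-port switches


def leaf_taper():
    """Return (downlinks, uplinks, taper ratio) for one level 1 switch."""
    down = CHASSIS_PER_LEAF * NODES_PER_CHASSIS   # one link per node
    up = LEAF_PORTS - down                        # remaining ports go up
    return down, up, down / up


def total_uplinks():
    """Uplinks leaving all level 1 switches in all compute racks."""
    _, up, _ = leaf_taper()
    return RACKS * LEAVES_PER_RACK * up


if __name__ == "__main__":
    down, up, ratio = leaf_taper()
    print(f"{down} down, {up} up, taper {ratio:.0f}:1")
    print(f"nodes per rack: {LEAVES_PER_RACK * down}")
    print(f"uplinks: {total_uplinks()} of {DIRECTOR_PORTS} available ports")
```

The 24 downlinks and 12 uplinks give the 2:1 ratio, and three such switches account for the 72 nodes in a rack.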

| Full Rack Capabilities | |
|---|---|
| Processors | 144 (72 × 2 × CPU) |
| Cores | 4,032 (16,128 threads) = 72 × 56 (224 threads) |
| FLOPS (SP) | 64.51 TFLOPS (72 × 2 × 28 × 16 GFLOPS) |
| FLOPS (DP) | 32.26 TFLOPS (72 × 2 × 28 × 8 GFLOPS) |
| Memory | 9 TiB DDR4 (72 × 2 × 8 × 8 GiB) |
| Memory BW | 24.57 TB/s (72 × 16 × 21.33 GB/s) |

Together, the 36 compute racks have a projected peak power of 1,295.8 kW (1,436.0 kW wall), with a nominal 1,217.0 kW under LINPACK. That is roughly 36.0 kW peak (39.9 kW wall) and 33.8 kW nominal per rack.

Compute Node

The basic compute server is the HPE Apollo 70, a dense 2U chassis that holds four dual-socket compute nodes.

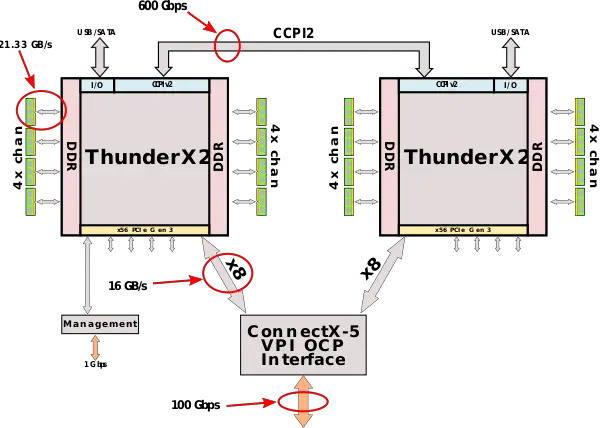

Each node has two 1,600 W power supplies, a 1 Gbps Ethernet management port, and a Mellanox ConnectX-5 EDR link. Each node is a dual-socket system with two Cavium ThunderX2 CN9975 (Vulcan) processors, each with 28 cores operating at 2 GHz. Sandia's choice of the 28-core, 2 GHz part is likely due to a better performance/power efficiency design point. Each chip supports up to eight channels of DDR4 DIMMs at rates up to 2666 MT/s as well as 56 PCIe 3.0 lanes.

The ThunderX2 CN9975 supports two-way multiprocessing. Communication between the sockets is done over the second-generation Cavium Coherent Processor Interconnect (CCPI2), which provides 600 Gbps of aggregated bandwidth. For the Astra supercomputer, each socket uses one 8 GiB DDR4-2666 dual-rank DIMM on each of its eight memory channels, for a total of 64 GiB and 170.7 GB/s of aggregated memory bandwidth per socket. Each node has a single Mellanox EDR InfiniBand ConnectX-5 VPI card designed for the Open Compute Project (OCP), providing the 100 Gb/s link.

With eight DIMMs per socket, each node has 128 GiB of memory feeding 56 cores with a total bandwidth of 341.33 GB/s per node. Those cores operate at up to 2 GHz, each with two 128-bit NEON units providing a peak theoretical performance of 8 single-precision FLOPs per cycle. At 2 GHz, this works out to 16 GFLOPS per core.

| Full Node Capabilities | Socket | Node |
|---|---|---|
| Processors | 1 × CPU | 2 × CPU |
| Cores | 28 (112 threads) | 56 (224 threads) |
| FLOPS (SP) | 448 GFLOPS (28 × 16 GFLOPS) | 896 GFLOPS (2 × 28 × 16 GFLOPS) |
| FLOPS (DP) | 224 GFLOPS (28 × 8 GFLOPS) | 448 GFLOPS (2 × 28 × 8 GFLOPS) |
| Memory | 64 GiB DDR4 (8 × 8 GiB) | 128 GiB DDR4 (2 × 8 × 8 GiB) |
| Bandwidth | 170.7 GB/s (8 × 21.33 GB/s) | 341.33 GB/s (16 × 21.33 GB/s) |
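The per-socket and per-node figures can be rebuilt from the clock rate, the per-cycle throughput, and the DDR4 channel rate. The sketch below assumes the article's 8 single-precision FLOPs per cycle (with double precision at half that), a nominal DDR4-2666 rate of 2666.67 MT/s, and a 64-bit (8-byte) channel; all names are illustrative.

```python
# Peak FLOPS and memory bandwidth per socket and per node.
CLOCK_GHZ = 2.0
SP_FLOPS_PER_CYCLE = 8            # per core, as stated in the article
DP_FLOPS_PER_CYCLE = 4            # assumption: half the SP rate, matching the table
CORES_PER_SOCKET = 28
SOCKETS_PER_NODE = 2
CHANNELS_PER_SOCKET = 8
TRANSFERS_MT_S = 8000 / 3         # DDR4-2666 nominal rate, about 2666.67 MT/s
BYTES_PER_TRANSFER = 8            # 64-bit DDR4 channel


def peak_gflops(flops_per_cycle, sockets=1):
    """Peak GFLOPS for the given number of sockets."""
    return CLOCK_GHZ * flops_per_cycle * CORES_PER_SOCKET * sockets


def memory_bandwidth_gbs(sockets=1):
    """Peak memory bandwidth in GB/s for the given number of sockets."""
    per_channel = TRANSFERS_MT_S * BYTES_PER_TRANSFER / 1000  # MB/s -> GB/s
    return per_channel * CHANNELS_PER_SOCKET * sockets


if __name__ == "__main__":
    for label, sockets in (("socket", 1), ("node", SOCKETS_PER_NODE)):
        print(
            f"{label}: {peak_gflops(SP_FLOPS_PER_CYCLE, sockets):.0f} GFLOPS SP, "
            f"{peak_gflops(DP_FLOPS_PER_CYCLE, sockets):.0f} GFLOPS DP, "
            f"{memory_bandwidth_gbs(sockets):.2f} GB/s"
        )
```

Multiplying the node results by 72 gives the full-rack values quoted earlier in the article.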

Power & Cooling

Astra has a nominal power consumption of about 1.36 MW under LINPACK. The entire system is cooled by just 12 MCS-300 fan coil racks.

| Projected power of the system by component | | | | | | | | | |
|---|---|---|---|---|---|---|---|---|---|
| Rack | Wall (W, per rack) | Peak (W, per rack) | Nominal, LINPACK (W, per rack) | Idle (W, per rack) | Racks | Wall (kW, total) | Peak (kW, total) | Nominal, LINPACK (kW, total) | Idle (kW, total) |
| Compute | 39,888 | 35,993 | 33,805 | 6,761 | 36 | 1,436.0 | 1,295.8 | 1,217.0 | 243.4 |
| MCS-300 | 10,500 | 7,400 | 7,400 | 170 | 12 | 126.0 | 88.8 | 88.8 | 2.0 |
| Network | 12,624 | 10,023 | 9,021 | 9,021 | 3 | 37.9 | 30.1 | 27.1 | 27.1 |
| Storage | 11,520 | 10,000 | 10,000 | 1,000 | 2 | 23.0 | 20.0 | 20.0 | 2.0 |
| Utility | 8,640 | 5,625 | 4,500 | 450 | 1 | 8.6 | 5.6 | 4.5 | 0.5 |
| Total | | | | | | 1,631.5 | 1,440.3 | 1,357.3 | 274.9 |
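The totals in the table are the per-rack figures multiplied by the rack counts and summed. The sketch below repeats that calculation. It reads the compute rack's peak value as 35,993 W, which is an assumption based on the matching 1,295.8 kW total; the names are illustrative.

```python
# Projected system power: per-rack watts times rack count, summed per column.
# Each entry: rack count, then (wall, peak, nominal, idle) in watts per rack.
RACK_POWER_W = {
    "Compute": (36, (39_888, 35_993, 33_805, 6_761)),  # peak read as 35,993 W
    "MCS-300": (12, (10_500, 7_400, 7_400, 170)),
    "Network": (3, (12_624, 10_023, 9_021, 9_021)),
    "Storage": (2, (11_520, 10_000, 10_000, 1_000)),
    "Utility": (1, (8_640, 5_625, 4_500, 450)),
}
COLUMNS = ("wall", "peak", "nominal", "idle")


def total_power_kw():
    """Return total system power in kW for each column."""
    totals = dict.fromkeys(COLUMNS, 0.0)
    for count, watts in RACK_POWER_W.values():
        for column, per_rack in zip(COLUMNS, watts):
            totals[column] += count * per_rack / 1000  # W -> kW
    return totals


if __name__ == "__main__":
    for column, kw in total_power_kw().items():
        print(f"{column}: {kw:.1f} kW")
```

The results agree with the table's total row to within about 0.1 kW, which is consistent with the per-rack values having been rounded to whole watts.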
