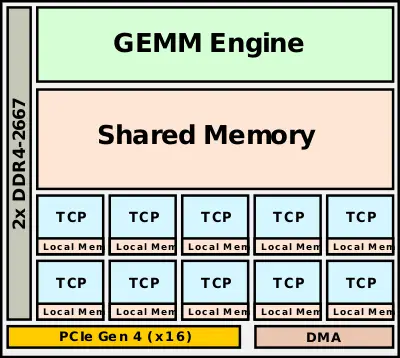

Latest revision as of 15:29, 28 December 2019

| Goya µarch | |
| General Info | |
| Arch Type | NPU |
| Designer | Habana |
| Manufacturer | TSMC |
| Introduction | 2018 |
| Process | 16 nm |
| PE Configs | 8 |
| Contemporary | Gaudi |

Goya is a 16-nanometer microarchitecture for inference neural processors designed by Habana Labs.


Process Technology

Goya-based processors are fabricated on TSMC's 16-nanometer process.

Architecture

Block Diagram

Overview

Goya is a microarchitecture designed for the acceleration of inference. Because the target market is the data center, the thermal design point for these chips is relatively high, at around 200 W. Goya relies on PCIe 4.0 to interface with a host processor. Habana's software compiles models and their associated instructions into independent recipes, which can then be sent to the accelerator for execution. The design uses a heterogeneous approach comprising a large General Matrix Multiply (GMM) engine, Tensor Processor Cores (TPCs), and a large shared memory pool.
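The idea of compiling a model into a recipe that targets heterogeneous engines can be illustrated with a minimal sketch. Everything here is hypothetical: the `Step` type, the `compile_recipe` function, and the rule that dense matrix operations go to the GMM engine while all other operations go to the TPCs are assumptions made for illustration, not Habana's actual software interface or scheduling policy.

```python
from dataclasses import dataclass

# Hypothetical engine labels for illustration; not Habana's actual API.
GMM = "GMM"  # large General Matrix Multiply engine
TPC = "TPC"  # programmable Tensor Processor Cores

# Assumed mapping: dense matrix-style ops run on the GMM engine,
# every other op runs on the programmable TPCs.
GMM_OPS = {"matmul", "conv2d", "fully_connected"}


@dataclass(frozen=True)
class Step:
    op: str      # operation name taken from the model graph
    engine: str  # engine chosen ahead of time, at compile time


def compile_recipe(ops):
    """Turn an ordered list of op names into a static list of Steps."""
    return [Step(op, GMM if op in GMM_OPS else TPC) for op in ops]


recipe = compile_recipe(["conv2d", "relu", "matmul", "softmax"])
for step in recipe:
    print(f"{step.op:>8} -> {step.engine}")
```

The point of the sketch is only that the engine assignment is fixed when the recipe is built, so the accelerator can execute it without further decisions from the host.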

Tensor Processor Cores (TPC)

There are eight TPCs. Each TPC incorporates its own local memory but omits caches. The on-die caches and memory can be either hardware-managed or fully software-managed, allowing the compiler to optimize the residency of data and reduce data movement. Each TPC is a VLIW DSP design that has been optimized for AI applications, including AI-specific instructions and operations. The TPCs are designed for flexibility and can be programmed in plain C. Each TPC supports mixed-precision operations, including 8-bit, 16-bit, and 32-bit SIMD vector operations for both integer and floating-point data. This allows the programmer to control the tolerance for accuracy loss on a per-model basis. Goya offers both coarse-grained precision control and fine-grained control down to the tensor level.
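Per-tensor precision control can be sketched in plain Python. This is a generic illustration of symmetric 8-bit quantization applied selectively by tensor name, assuming a hypothetical `precision_plan` mapping and made-up tensor names; it is not Habana's toolchain or the TPC instruction set.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization: floats -> int8 codes plus a scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs else 1.0
    # Clamp to the symmetric int8 range [-127, 127].
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Map int8 codes back to approximate float values."""
    return [c * scale for c in codes]


def apply_plan(tensors, plan, default="int8"):
    """Round-trip each tensor through the precision chosen for it."""
    out = {}
    for name, values in tensors.items():
        if plan.get(name, default) == "int8":
            codes, scale = quantize_int8(values)
            out[name] = dequantize(codes, scale)
        else:
            out[name] = list(values)  # kept at full precision
    return out


# Hypothetical plan: weights tolerate 8-bit, the output layer stays at 32-bit.
precision_plan = {"conv1.weight": "int8", "logits": "fp32"}
tensors = {"conv1.weight": [0.5, -1.0, 0.25], "logits": [0.1234567, 2.5]}
result = apply_plan(tensors, precision_plan)
```

Setting `default` corresponds to coarse-grained control over the whole model, while individual entries in the plan override it tensor by tensor.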

Bibliography

- Habana, IEEE Hot Chips 31 Symposium (HCS) 2019.

- Habana, AI Hardware Summit 2019.

- Habana, Linley Fall Processor Conference 2019.

See also

- Gaudi

- HL series
