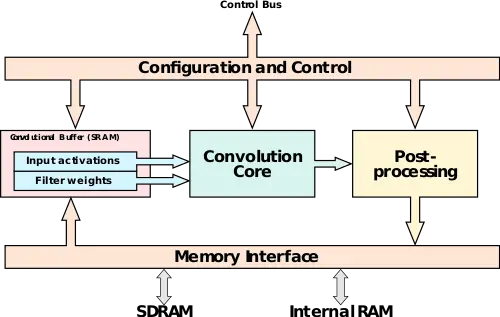
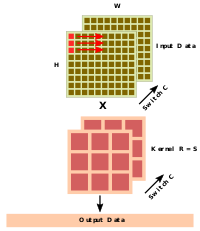

Revision as of 13:29, 3 September 2018


| NVDLA µarch | |
|---|---|
| General Info | |
| Arch Type | NPU |
| Designer | Nvidia |
| Manufacturer | TSMC |
| Introduction | 2018 |

NVDLA (NVIDIA Deep Learning Accelerator) is a neural processor microarchitecture designed by Nvidia. Originally developed for Nvidia's own Xavier SoC, the architecture has since been made open source.


History

NVDLA was originally designed for Nvidia's own Xavier SoC. Following the Xavier implementation, Nvidia open-sourced the architecture and made it more parameterizable, giving designers a choice of tradeoffs between power, performance, and area.

Architecture

Block Diagram

Overview

NVDLA is a microarchitecture designed by Nvidia for the acceleration of deep learning workloads. Because the original implementation targeted Nvidia's own Xavier SoC, whose main workloads involve images and video, the architecture is specifically optimized for convolutional neural networks (CNNs), although other networks are also supported. NVDLA primarily targets edge devices, IoT applications, and other low-power inference designs.

At a high level, NVDLA stores both the input activations and the weights in a convolutional buffer. Both are fed into a convolutional core, which consists of a large array of multiply-accumulate (MAC) units. The results are sent to a post-processing unit, which writes them back to memory. The processing elements are surrounded by control logic and a memory interface (DMA).
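The dataflow described above can be illustrated with a small Python model. This is a minimal sketch, not NVDLA's implementation: the names `ConvBuffer`, `conv_core`, `post_process` and `run_layer` are hypothetical, the convolution is assumed to be stride 1 with no padding, and ReLU is an assumed stand-in for whatever operation the post-processing unit applies.

```python
from dataclasses import dataclass
from typing import Dict, List

Matrix = List[List[float]]


@dataclass
class ConvBuffer:
    """Hypothetical stand-in for the convolutional buffer: one input
    activation map plus the set of kernels (weights) applied to it."""
    activation: Matrix
    kernels: List[Matrix]


def conv_core(buf: ConvBuffer) -> List[Matrix]:
    """Model the MAC array: a stride-1, unpadded 2D convolution of the
    activation with every kernel (cross-correlation, as in most CNN code)."""
    act = buf.activation
    h, w = len(act), len(act[0])
    outputs: List[Matrix] = []
    for kernel in buf.kernels:
        kh, kw = len(kernel), len(kernel[0])
        fmap: Matrix = []
        for y in range(h - kh + 1):
            row = []
            for x in range(w - kw + 1):
                acc = 0.0
                for i in range(kh):
                    for j in range(kw):
                        acc += act[y + i][x + j] * kernel[i][j]  # one multiply-accumulate
                row.append(acc)
            fmap.append(row)
        outputs.append(fmap)
    return outputs


def post_process(fmaps: List[Matrix]) -> List[Matrix]:
    """Post-processing stage; ReLU is an assumed example operation."""
    return [[[max(0.0, v) for v in row] for row in fmap] for fmap in fmaps]


def run_layer(buf: ConvBuffer, memory: Dict[str, List[Matrix]]) -> Dict[str, List[Matrix]]:
    """Buffer -> convolution core -> post-processing -> write back to memory."""
    memory["output"] = post_process(conv_core(buf))
    return memory
```

For a 3×3 activation `[[1, 2, 3], [4, 5, 6], [7, 8, 9]]` and the 2×2 kernel `[[1, 0], [0, 1]]`, the written-back feature map is `[[6.0, 8.0], [12.0, 14.0]]`.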

Memory Interface

NVDLA is highly parameterizable. The size of the convolutional buffer can be adjusted according to the required tradeoffs (e.g. die size, memory bandwidth, neural network characteristics). The buffer is backed by a memory interface that supports both internal RAM and SDRAM. With a smaller convolutional buffer, a second level of cache can be attached through the memory interface, and off-chip memory can be used to extend it further.
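Because the buffer size is a design-time parameter, the tradeoff can be sketched as a hypothetical placement check: given a configured convolutional buffer and an optional second-level cache behind the memory interface, find the closest level that can hold a layer's working set. `MemoryConfig`, `placement` and the byte sizes used below are assumptions for illustration only, and the model ignores tiling, which lets real designs stream data that does not fit.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class MemoryConfig:
    """Hypothetical build-time configuration; all sizes are in bytes."""
    conv_buffer_bytes: int
    # Optional second-level cache reached through the memory interface.
    second_level_bytes: Optional[int] = None


def placement(cfg: MemoryConfig, activation_bytes: int, weight_bytes: int) -> str:
    """Return the closest memory level able to hold activations plus weights."""
    working_set = activation_bytes + weight_bytes
    if working_set <= cfg.conv_buffer_bytes:
        return "conv_buffer"
    if cfg.second_level_bytes is not None and working_set <= cfg.second_level_bytes:
        return "second_level"
    # Otherwise the data must come from off-chip memory (e.g. SDRAM).
    return "off_chip"
```

With a 300 KiB working set, an illustrative 512 KiB buffer keeps everything local, whereas a 128 KiB buffer has to rely on the second-level cache or, without one, on off-chip memory.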

Convolution Core

The convolutional core typically operates on one input activation together with a set of kernels. As with the buffer size, both the number of pixels taken from the input and the number of kernels are parameterizable. Typically, a strip of 16 to 32 outputs is computed at a time. To save power, the weights held in the MAC units remain constant for a number of cycles, which also reduces data transfers.
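The weight-reuse idea can be sketched in a simplified 1D setting (an assumption; the real core works on multi-dimensional feature maps). Each kernel's weights are loaded once per strip of outputs and held while the whole strip is computed, in a weight-stationary style. The function name and the weight-load counter are hypothetical; the counter only shows how holding weights reduces transfers compared with reloading them for every output.

```python
from typing import List, Tuple


def conv1d_weight_stationary(
    inputs: List[float], kernels: List[List[float]], strip_len: int = 16
) -> Tuple[List[List[float]], int]:
    """Valid 1D convolution of `inputs` with each kernel, `strip_len` outputs at a time.

    Weights are loaded into the (modelled) MAC array once per strip and held
    constant while every output of that strip is computed.
    Returns the per-kernel outputs and the total number of weight loads.
    """
    if strip_len < 1:
        raise ValueError("strip_len must be positive")
    weight_loads = 0
    results: List[List[float]] = []
    for kernel in kernels:
        k = len(kernel)
        n_out = len(inputs) - k + 1
        out: List[float] = []
        for start in range(0, n_out, strip_len):
            held = list(kernel)  # load this kernel's weights once for the strip
            weight_loads += k
            for x in range(start, min(start + strip_len, n_out)):
                # Reuse the held weights for every output in the strip.
                out.append(sum(held[i] * inputs[x + i] for i in range(k)))
        results.append(out)
    return results, weight_loads
```

With 66 inputs and 3-tap kernels there are 64 outputs per kernel: a 16-output strip needs 12 weight loads per kernel, versus 192 when `strip_len=1` forces a reload for every output, while the computed results are identical.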

Bibliography

- IEEE Hot Chips 30 Symposium (HCS) 2018.
