(→Overview) |

|||

| Line 11: | Line 11: | ||

== Overview == | == Overview == | ||

[[File:csa overview block.svg|thumb|right|Overview]] | [[File:csa overview block.svg|thumb|right|Overview]] | ||

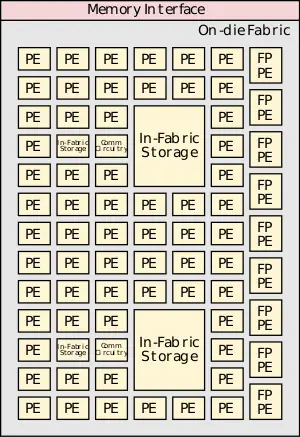

| − | CSA claims to offer an order-of-magnitude gain in energy efficiency and performance relative to existing HPC microprocessors through the use of highly-dense and efficient atomic compute units. The basic structure of the configurable spatial accelerator (CSA) is a dense heterogeneous array of processing elements (PEs) tiles along with an on-die interconnect network and a memory interface. The array of processing elements can include integer arithmetic PEs, [[floating-point arithmetic]] PEs, communication circuitry, and in-fabric storage. This group of tiles may be stand-alone or may be part of an even larger tile. The accelerator executes [[data flow graphs]] directly instead traditional sequential [[instruction streams]]. | + | CSA claims to offer an order-of-magnitude gain in energy efficiency and performance relative to existing HPC microprocessors through the use of highly-dense and efficient atomic compute units. The basic structure of the configurable spatial accelerator (CSA) is a dense heterogeneous array of processing elements (PEs) tiles along with an on-die interconnect network and a memory interface. The array of processing elements can include integer arithmetic PEs, [[floating-point arithmetic]] PEs, communication circuitry, and in-fabric storage. This group of tiles may be stand-alone or may be part of an even larger tile. The accelerator executes [[data flow graphs]] directly instead traditional sequential [[instruction streams]]. Unlike an [[FPGA]], the CSA supports full languages such as C++ and executes full programs. There is a one-to-one correspondence between the nodes in the compiler-generated graph and the CSA dataflow operator processing elements. |

Each of the processing elements supports a few highly efficient operations, thus they can only handle a few of the dataflow graph operations. Different PEs support different operations of the dataflow graph. PEs execute simultaneously irrespective of the operations of the other PEs. PEs can execute a dataflow operation whenever it has input data available and there is sufficient space available for storing the output data. PE can execute immediately as soon as they are configured by the fabric. | Each of the processing elements supports a few highly efficient operations, thus they can only handle a few of the dataflow graph operations. Different PEs support different operations of the dataflow graph. PEs execute simultaneously irrespective of the operations of the other PEs. PEs can execute a dataflow operation whenever it has input data available and there is sufficient space available for storing the output data. PE can execute immediately as soon as they are configured by the fabric. | ||

| Line 19: | Line 19: | ||

[[File:csa overview full chip.svg|400px]] | [[File:csa overview full chip.svg|400px]] | ||

| − | |||

== Architecture == | == Architecture == | ||

Revision as of 23:23, 30 September 2018

Configurable Spatial Accelerator (CSA) is an explicit data graph execution architecture designed by Intel for the high-performance computing and data center markets.

Contents

Motivation

The push to exascale computing demands a very high floating-point performance while maintaining a very aggressive power budget. Intel claims that the CSA architecture is capable of replacing the traditional out-of-order superscalar while surpassing it in performance and power. CSA supports the same HPC programming models supported by traditional microprocessors.

Overview

CSA claims to offer an order-of-magnitude gain in energy efficiency and performance relative to existing HPC microprocessors through the use of highly-dense and efficient atomic compute units. The basic structure of the configurable spatial accelerator (CSA) is a dense heterogeneous array of processing elements (PEs) tiles along with an on-die interconnect network and a memory interface. The array of processing elements can include integer arithmetic PEs, floating-point arithmetic PEs, communication circuitry, and in-fabric storage. This group of tiles may be stand-alone or may be part of an even larger tile. The accelerator executes data flow graphs directly instead traditional sequential instruction streams. Unlike an FPGA, the CSA supports full languages such as C++ and executes full programs. There is a one-to-one correspondence between the nodes in the compiler-generated graph and the CSA dataflow operator processing elements.

Each of the processing elements supports a few highly efficient operations, thus they can only handle a few of the dataflow graph operations. Different PEs support different operations of the dataflow graph. PEs execute simultaneously irrespective of the operations of the other PEs. PEs can execute a dataflow operation whenever it has input data available and there is sufficient space available for storing the output data. PE can execute immediately as soon as they are configured by the fabric.

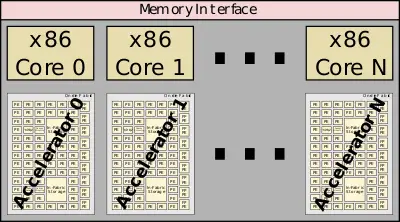

The configurable spatial accelerators are designed alongside a traditional x86 core. This allows the cores maintain legacy support and handle additional tasks that might be easier on a traditional core.

Architecture

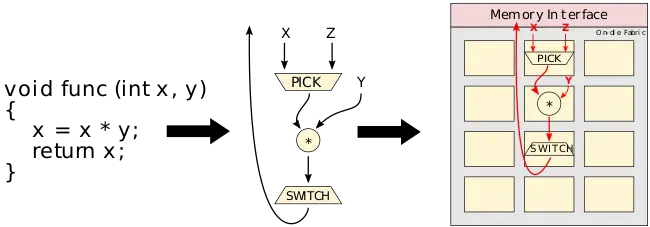

The CSA executes dataflow graphs directly. Those tend to look very similar to the compiler's own internal representation (IR) of compiled programs. The graph consists of operators and edges which are the transfer of data between operators. Dataflow tokens representing data values are injected into the dataflow graph for executions. Tokens flow between nodes to form a complete computation.

Suppose func is executed with 1 for X and 2 for Y. The value for X (a 1) may be a constant or a result of some other operation. A pick operation selects that correct input value and provides it to the downstream destination processing element. In this case, the pick outputs a data value of 1 to the multiplier to be multiplied by the value of Y (a 2). The multiplier outputs the value of 2 to the switch node which completes the operation and passes the value onward. Many such operations may be executed concurrently.

Communications arcs

Communications arcs are latency insensitive, asynchronous, point-to-point communications channels. Can be back-pressured by a processing element. When this happens data cannot pass (either producing or sending output). This may occur because the PE is simply unconfigured or there is no storage space available.

Memory

Memory operations are treated just like any other processing element as basic dataflow operators such as a load and a store. There are also some more complex operations such as in-memory atomics and consistency operators with similar semantics to their von Neumann counterparts. For example, a load takes an address channel and populates a response channel with the output.

In the case of memory aliasing, since the CSA has no notion of a program counter, there are dependency tokens which allow compilers to define the order of memory accesses. A dependency token behaves like any other token but prevents memory operations from executing until a dependency token is received.

Runtime services

CSA provides a number of architectural facilities for dealing with things such as context switches, exceptions, and errors.

- Configuration - dataflow graph is loaded into the CSA

- Extraction - dataflow graph execution state is saved to memory

- Exceptions - handling mechanism for math, soft, and other errors

Configuration loads the dataflow graph and state from memory into the interconnect and processing elements. This also includes any dataflow tokens live in that graph that may have been in operation prior a context switch. PEs can start executing right away as soon as they are configured by the fabric. Any unconfigured PE simply backpressures their channel until they are configured. The CSA can be partitioned into privileged and user-level state. This can allow a primary configuration of the fabric to run without invoking the operating system.

Extraction saves the dataflow graph execution state to memory. Extractions are caused by similar events to exceptions such as illegal operator argument or RAS events. Exceptions may occur at the dataflow-operator level. On such exceptions, the operator halts and emits an exception message containing the operation identifier and details of the nature of the problem. The exception message can then be communicated to an associated core for service.

Bibliography

- Kermin E.F. et al. (July 5, 2018). US Patent No. US20180189231A1.