Infinity Fabric (IF) is a system of transmissions and controls that underpins AMD's recent microarchitectures for both CPUs (e.g., Zen) and graphics (e.g., Vega), as well as any accelerators AMD might add in the future. The interconnect was first announced and detailed in April 2017 by Mark Papermaster, AMD's SVP and CTO.

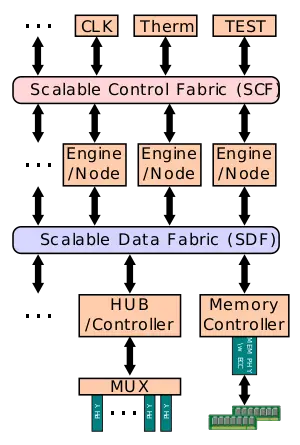

The Infinity Fabric consists of two separate communication interconnects: the Infinity Scalable Data Fabric (SDF) and the Infinity Scalable Control Fabric (SCF). The SDF is the primary means by which data flows around the system between endpoints (e.g., NUMA nodes, PHYs). The SDF might have dozens of connection points hooking together things such as PCIe PHYs, memory controllers, USB hubs, and the various computing and execution units. The SDF is a superset of what was previously HyperTransport. The SCF handles the transmission of the many miscellaneous system control signals, including thermal and power management, test, security, and third-party IP. With these two interconnects, AMD can efficiently scale up many of the basic computing blocks.

Inter-/Intra-chip communication

A key feature of the coherent data fabric is that it is not limited to a single die: it can extend over multiple dies in an MCP as well as over multiple sockets via PCIe links (possibly even across independent systems, although that is speculation). There is also no constraint on the topology of the nodes connected over the fabric. Communication can be done directly node-to-node, by island-hopping in a bus topology, or across a mesh topology.

- Dual-socket, 2-Die multi-chip package:

- Dual-socket, 4-Die multi-chip package:

- Rates assume DDR4-2666 memory.

Note that there is a maximum of two hops between any two physical dies, whether they sit in the same package or in different sockets.
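The two-hop bound can be checked with a small breadth-first search. The sketch below is only illustrative: the wiring it assumes (every die linked to every other die in its package, plus one link from each die to the same-numbered die in the other socket) is an assumption made for the example and is not spelled out in the text above. The function names are likewise hypothetical.

```python
from collections import deque
from itertools import combinations


def build_topology(sockets=2, dies_per_socket=4):
    """Return an adjacency map keyed by (socket, die).

    ASSUMPTION: dies in one package are fully connected, and die d in one
    socket links to die d in every other socket.
    """
    links = {(s, d): set() for s in range(sockets) for d in range(dies_per_socket)}

    def connect(a, b):
        links[a].add(b)
        links[b].add(a)

    # Die-to-die links inside each package (full mesh).
    for s in range(sockets):
        for a, b in combinations(range(dies_per_socket), 2):
            connect((s, a), (s, b))

    # Socket-to-socket links between same-numbered dies.
    for d in range(dies_per_socket):
        for s1, s2 in combinations(range(sockets), 2):
            connect((s1, d), (s2, d))

    return links


def hop_counts(links, src):
    """Breadth-first search: minimum hop count from src to every die."""
    dist = {src: 0}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        for nxt in links[node]:
            if nxt not in dist:
                dist[nxt] = dist[node] + 1
                queue.append(nxt)
    return dist


def max_hops(links):
    """Largest minimum hop count over all pairs of dies."""
    return max(max(hop_counts(links, node).values()) for node in links)


if __name__ == "__main__":
    print("2-die packages:", max_hops(build_topology(2, 2)))
    print("4-die packages:", max_hops(build_topology(2, 4)))
```

Under this assumed wiring, a die reaches any die in the other socket by first crossing to its peer die and then taking one in-package link, which is where the two-hop limit comes from.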

With the implementation of the Infinity Fabric in the Zen microarchitecture, intra-chip (i.e., die-to-die) communication over AMD's Global Memory Interconnect has a bi-directional bandwidth of 39.736 GiB/s (about 42.67 GB/s) per 4B link. This is exactly the bandwidth of dual-channel DDR4 operating at 2666 MT/s, the memory configuration assumed for the system, so the bandwidth of the system is tied directly to the DRAM transfer rate. In AMD's EPYC server processor family, which consists of four dies, this gives a bisection bandwidth of 158.944 GiB/s. Inter-chip communication (i.e., chip-to-chip, as in a dual-socket server) has a bi-directional bandwidth of 35.3 GiB/s, for a bisection bandwidth of 141.2 GiB/s.
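The figures above can be reproduced with a few lines of arithmetic. The sketch below assumes a 64-bit (8-byte) DDR4 channel and a nominal DDR4-2666 rate of 2666.67 MT/s, and it treats each bisection figure as four links' worth of bandwidth, since that is what the quoted numbers work out to. The helper name is hypothetical. Note that 39.736 is the value in binary gigabytes (GiB/s); the same rate in decimal units is about 42.67 GB/s.

```python
GIB = 2**30  # bytes in one gibibyte


def ddr4_bandwidth_bytes_per_s(transfer_rate_mt_s, channels=2, bus_width_bytes=8):
    """Peak DRAM bandwidth in bytes/s: transfers/s * bytes per transfer * channels."""
    return transfer_rate_mt_s * 1e6 * bus_width_bytes * channels


# DDR4-2666 nominally runs at 2666.67 MT/s (8000/3).
DDR4_2666_MT_S = 8000 / 3

link_bytes_per_s = ddr4_bandwidth_bytes_per_s(DDR4_2666_MT_S)
link_gb_s = link_bytes_per_s / 1e9    # decimal gigabytes per second
link_gib_s = link_bytes_per_s / GIB   # binary gigabytes per second

# ASSUMPTION: bisection bandwidth counted as four links, matching the quoted totals.
LINKS_ACROSS_BISECTION = 4
die_to_die_bisection_gib_s = LINKS_ACROSS_BISECTION * link_gib_s

# Socket-to-socket link rate as quoted in the text.
socket_link_gib_s = 35.3
socket_bisection_gib_s = LINKS_ACROSS_BISECTION * socket_link_gib_s

if __name__ == "__main__":
    print(f"die-to-die link:      {link_gb_s:.3f} GB/s = {link_gib_s:.3f} GiB/s")
    print(f"die-to-die bisection: {die_to_die_bisection_gib_s:.3f} GiB/s")
    print(f"socket bisection:     {socket_bisection_gib_s:.1f} GiB/s")
```

The computed die-to-die bisection value differs from the quoted 158.944 GiB/s only in the last digits, because the quoted total multiplies the already rounded per-link figure by four.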

References

- AMD Infinity Fabric introduction by Mark Papermaster, April 6, 2017

- AMD EPYC Tech Day, June 20, 2017