Brain floating-point format (bfloat16 or BF16) is a 16-bit number encoding format for floating-point numbers. It is equivalent to a standard single-precision floating-point value with a truncated mantissa field. Bfloat16 is designed for hardware that accelerates machine learning algorithms. Bfloat16 was first proposed and implemented by Google, with Intel supporting it in its FPGAs, Nervana neural processors, and CPUs.


Overview

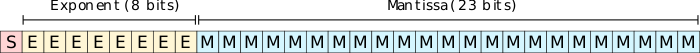

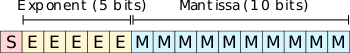

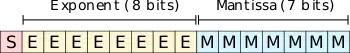

Bfloat16 follows the same format as a standard IEEE 754 single-precision floating-point value but truncates the mantissa field from 23 bits to just 7 bits. Because it keeps all 8 exponent bits, the format has the same range as 32-bit single-precision FP (~1e-38 to ~3e38). This makes conversion between the two data types relatively simple: a float32 value becomes a bfloat16 value by dropping the low 16 bits of its encoding, optionally with rounding. In other words, some precision is lost, but the same range of numbers can still be represented. Microsoft developed a similar 8-bit floating-point format based on the float16 range.

- float32: (range ~1e-38 to ~3e38)

- float16: (range ~5.96e-8, the smallest subnormal, to 65,504)

- bfloat16: (range ~1e-38 to ~3e38)
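The conversion described above can be sketched with Python's standard `struct` module. This is a minimal sketch, not a library API: the helper names (`float32_bits`, `to_bfloat16_truncate`, `to_bfloat16_rne`, `bfloat16_to_float`) are hypothetical, NaN inputs are not handled, and round-to-nearest-even is shown as one common rounding choice alongside plain truncation.

```python
import struct


def float32_bits(x: float) -> int:
    """Return the 32-bit IEEE 754 pattern of x (x is first rounded to float32)."""
    return struct.unpack("<I", struct.pack("<f", x))[0]


def bits_to_float32(bits: int) -> float:
    """Interpret a 32-bit pattern as an IEEE 754 single-precision value."""
    return struct.unpack("<f", struct.pack("<I", bits))[0]


def to_bfloat16_truncate(x: float) -> int:
    """Hypothetical helper: bfloat16 bits obtained by dropping the low 16 bits of float32."""
    return float32_bits(x) >> 16


def to_bfloat16_rne(x: float) -> int:
    """Hypothetical helper: bfloat16 bits with round-to-nearest-even (NaN not handled)."""
    bits = float32_bits(x)
    lsb = (bits >> 16) & 1      # lowest bit that survives the cut
    bits += 0x7FFF + lsb        # ties round toward an even result
    return (bits >> 16) & 0xFFFF


def bfloat16_to_float(b: int) -> float:
    """Widen bfloat16 to float32 exactly by appending 16 zero mantissa bits."""
    return bits_to_float32((b & 0xFFFF) << 16)


x = 1.005859375                      # exactly 0x3F80C000 in float32
print(hex(to_bfloat16_truncate(x)))  # 0x3f80
print(hex(to_bfloat16_rne(x)))       # 0x3f81
print(bfloat16_to_float(to_bfloat16_truncate(x)))  # 1.0
print(bfloat16_to_float(to_bfloat16_rne(x)))       # 1.0078125
```

Truncation is the cheapest conversion but always rounds toward zero, while round-to-nearest-even avoids that bias. Both leave the 8 exponent bits untouched, which is why the float32 range carries over.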

Motivation

The motivation behind the reduced mantissa comes from Google's experiments, which showed that the mantissa can be shortened as long as it is still possible to represent tiny values close to zero, such as those that arise when small differences are summed during training. A smaller mantissa also brings other advantages, such as reduced multiplier power and physical silicon area.

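Relative multiplier size, taken as the square of the significand width (mantissa bits plus the implicit leading bit):

- float32: 24² = 576 (100%)

- float16: 11² = 121 (21%)

- bfloat16: 8² = 64 (11%)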

From the above, a bfloat16 multiplier needs roughly half the components of a float16 multiplier and roughly a ninth of those of a float32 multiplier (factors of about two and nearly ten, respectively), which means proportionally fewer bits flipping per operation. From an efficiency standpoint, this gain was worth the lost precision for Google and its TPU implementation. At the system level, halving the storage size relative to float32 can also save memory capacity and bandwidth if desired.
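The percentages and ratios above follow from treating multiplier size as proportional to the square of the significand width. A minimal sketch of that arithmetic; `multiplier_cost` is a hypothetical name, and the squared-width model is a first-order assumption rather than a measured silicon area.

```python
# Significand widths in bits, counting the implicit leading 1.
SIGNIFICAND_BITS = {"float32": 24, "float16": 11, "bfloat16": 8}


def multiplier_cost(fmt: str) -> int:
    """First-order proxy: an array multiplier grows with the square of the significand width."""
    return SIGNIFICAND_BITS[fmt] ** 2


baseline = multiplier_cost("float32")
for fmt in SIGNIFICAND_BITS:
    cost = multiplier_cost(fmt)
    print(f"{fmt}: {cost} ({cost / baseline:.0%} of float32)")

# Ratios behind the "about two" and "nearly ten" comparisons.
print(multiplier_cost("float16") / multiplier_cost("bfloat16"))  # 1.890625
print(multiplier_cost("float32") / multiplier_cost("bfloat16"))  # 9.0
```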

There are also several advantages from a software standpoint. For debugging, because bfloat16 and float32 share almost the same dynamic range, the numerical error behavior of bfloat16 (such as overflow and underflow) tends to match that of float32. For the same reason, training at lower precision works well with bfloat16 without loss scaling. Getting training to converge with standard 16-bit floats (float16), by contrast, requires multiplying the loss by a factor of around 2¹⁰ and then adjusting the learning rate to get the same numerical result. Bfloat16 eliminates this complexity entirely, since there is no loss of dynamic range to compensate for.
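The dynamic-range argument can be illustrated with a small simulation using Python's `struct` module, whose `e` format packs IEEE 754 half precision. This is a sketch under simplifying assumptions: the gradient value `1e-8` and the helper names are hypothetical, rounding a Python float stands in for computing the gradient in reduced precision, and the loss scale of 2¹⁰ only mirrors the factor mentioned above.

```python
import struct


def round_to_float16(x: float) -> float:
    """Round x to IEEE 754 half precision and back; magnitudes below roughly 3e-8 become 0."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def round_to_bfloat16(x: float) -> float:
    """Round x to bfloat16 (round-to-nearest-even on the float32 bits) and back."""
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    bits += 0x7FFF + ((bits >> 16) & 1)
    return struct.unpack("<f", struct.pack("<I", ((bits >> 16) & 0xFFFF) << 16))[0]


gradient = 1e-8        # hypothetical tiny gradient value
LOSS_SCALE = 2 ** 10   # loss-scaling factor, as in the text

print(round_to_float16(gradient))    # 0.0 (underflows in float16)
print(round_to_bfloat16(gradient))   # nonzero: bfloat16 keeps float32's exponent range

# Loss scaling: scale up before rounding to float16, scale back down afterwards.
scaled = round_to_float16(gradient * LOSS_SCALE)
print(scaled / LOSS_SCALE)           # close to 1e-8 again
```

The same value survives in bfloat16 without any scaling; bfloat16 pays for this with a coarser 8-bit significand, so the recovered value is only accurate to roughly two to three decimal digits.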

Hardware support

CPUs

NPUs

- See also: neural processors

- Arm Project Trillium

- Centaur Technology CHA (NCORE)

- Flex Logix InferX

- Google TPU

- Habana HL

- Intel NNP

- Wave Computing DPU

ISAs

- Power: Power ISA v3.1

- x86-64: AVX512_BF16 (part of DL Boost technology)

- AArch64: ARMv8.6-BF16

