Neural Network Processors (NNP) is a family of neural processors designed by Intel Nervana for both inference and training.

Overview

Neural network processors (NNP) are a family of neural processors designed by Intel for the acceleration of artificial intelligence workloads. The design originated at Nervana before the company was acquired by Intel. Intel eventually productized the chips starting with the second-generation designs in late 2019.

The NNP family comprises two separate series - NNP-I for inference and NNP-T for training.

Training (NNP-T)

Lake Crest

- Main article: Lake Crest µarch

The first generation of NNPs was based on the Lake Crest microarchitecture. Manufactured on TSMC's 28 nm process, these chips were never productized. Samples were used to gather customer feedback, and the design mostly served as a software development vehicle for the follow-up design.

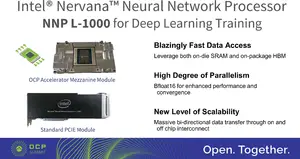

T-1000 (Spring Crest)

- Main article: Spring Crest µarch

Second-generation NNP-Ts are branded as the NNP T-1000 series and are the first NNP chips to be productized. Based on the Spring Crest microarchitecture and fabricated on TSMC's 16 nm process, these chips feature a number of enhancements and refinements over the prior generation, including a shift from Flexpoint to bfloat16. Intel claims that these chips deliver about 3-4x the training performance of the first generation. The chips come with 32 GiB of HBM2 memory in four stacks and are offered in two form factors: a PCIe x16 Gen 3 card and an OCP Accelerator Module (OAM).
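Bfloat16 keeps float32's sign bit and 8-bit exponent but shortens the mantissa to 7 bits, so a bfloat16 value is essentially the upper half of a float32 encoding. The following is a minimal Python sketch of a float32-to-bfloat16 conversion with round-to-nearest-even; it illustrates the number format only and is not a description of how Spring Crest hardware or Intel's software stack performs the conversion. The function names are hypothetical.

```python
import struct


def float32_to_bfloat16_bits(x: float) -> int:
    """Round a float32-representable value to bfloat16; return its 16-bit pattern.

    Hypothetical helper for illustration. The lower 16 bits of the float32
    encoding are discarded using round-to-nearest-even.
    """
    if x != x:  # NaN: return a canonical quiet NaN pattern
        return 0x7FC0
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    # Adding 0x7FFF plus the LSB of the kept half rounds ties to even.
    lsb = (bits >> 16) & 1
    rounded = bits + 0x7FFF + lsb
    return (rounded >> 16) & 0xFFFF


def bfloat16_bits_to_float(b: int) -> float:
    """Widen a bfloat16 bit pattern back to a Python float (exact)."""
    return struct.unpack("<f", struct.pack("<I", (b & 0xFFFF) << 16))[0]


print(hex(float32_to_bfloat16_bits(1.0)))
print(bfloat16_bits_to_float(float32_to_bfloat16_bits(3.14159)))
```

The second call shows the precision cost: 3.14159 becomes 3.140625, because only 7 mantissa bits survive, while the full float32 exponent range is preserved.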

Inference (NNP-I)

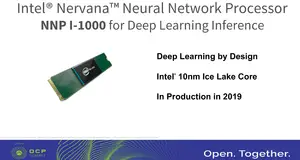

I-1000 Series (Spring Hill)

- Main article: Spring Hill µarch

The NNP I-1000 series is Intel's first series of devices designed specifically for the acceleration of inference workloads. Fabricated on Intel's 10 nm process, these chips are based on Spring Hill and incorporate a Sunny Cove core along with twelve specialized inference acceleration engines. The overall SoC design borrows a considerable amount of IP from Ice Lake. These devices come in M.2 and PCIe form factors.

- Process: 10 nm
- Memory: 4x 32-bit LPDDR4x-4200
- TDP: 10-50 W
- Efficiency: 2.0-4.8 TOPS/W
- Performance: 48-92 TOPS (Int8)
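The memory line above can be turned into a theoretical peak bandwidth figure. This is a back-of-envelope sketch, assuming "4x 32-bit" means four independent 32-bit channels and "4200" is the data rate in megatransfers per second; it ignores refresh, command overhead and real-world efficiency. The function name is hypothetical.

```python
def peak_dram_bandwidth_gbs(channels: int, bits_per_channel: int, mt_per_s: float) -> float:
    """Theoretical peak DRAM bandwidth in GB/s (10^9 bytes per second).

    bytes per transfer = channels * bits_per_channel / 8
    GB/s               = bytes per transfer * MT/s / 1000
    """
    bytes_per_transfer = channels * bits_per_channel / 8
    return bytes_per_transfer * mt_per_s / 1000


# Four 32-bit channels at 4200 MT/s (assumed reading of the spec line)
print(peak_dram_bandwidth_gbs(4, 32, 4200))
```

Under these assumptions each transfer moves 16 bytes, giving roughly 67.2 GB/s of peak bandwidth.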

List of NNP-I-based processors

| Model | Launched | TDP | Inference engines | Peak perf (Int8) |
|---|---|---|---|---|
| NNP-I 1100 | 12 November 2019 | 12 W | 12 | 50 TOPS |
| NNP-I 1300 | 12 November 2019 | 75 W | 24 | 170 TOPS |
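A naive efficiency figure for each model can be derived from the table by dividing peak Int8 throughput by TDP. This sketch assumes the chip sustains its peak rate at exactly its rated TDP, which real workloads rarely do, so the results are upper-bound estimates rather than measured efficiency. The dictionary and function names are hypothetical.

```python
# Peak Int8 throughput (TOPS) and TDP (W) taken from the table.
models = {
    "NNP-I 1100": (50, 12),
    "NNP-I 1300": (170, 75),
}


def tops_per_watt(peak_tops: float, tdp_w: float) -> float:
    """Naive efficiency: peak throughput divided by TDP."""
    return peak_tops / tdp_w


for name, (tops, tdp) in models.items():
    print(f"{name}: {tops_per_watt(tops, tdp):.2f} TOPS/W")
```

This yields about 4.17 TOPS/W for the NNP-I 1100 and 2.27 TOPS/W for the NNP-I 1300, both within the 2.0-4.8 TOPS/W range listed for the series.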

Intel also announced the NNP-I in an EDSFF ("ruler") form factor, designed to maximize compute density for inference. Intel has not announced specific models. The rulers were planned to come with a 10-35 W TDP range, and up to 32 ruler-form-factor NNP-Is can be packed into a single 1U chassis.

Facts

| Property | Value |
|---|---|
| Name | NNP |
| Designer | Intel |
| Manufacturer | Intel and TSMC |
| Instance of | integrated circuit family |
| First announced | May 23, 2018 |
| First launched | 2019 |
| Package | PCIe x16 Gen 3 card, OCP OAM and M.2 |
| Process | 28 nm, 16 nm and 10 nm |
| Technology | CMOS |