NVLink is a proprietary system interconnect architecture that facilitates coherent data and control transmission across multiple Nvidia GPUs and supporting CPUs.

Overview

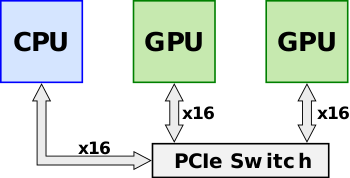

Announced in early 2014, NVLink was designed as an alternative to PCI Express, offering higher bandwidth and additional features (e.g., shared memory) tailored to Nvidia's own GPU ISA for multi-GPU systems. Before NVLink was introduced with Pascal (e.g., in the Kepler generation), multiple Nvidia GPUs would sit on a shared PCIe bus. Although direct GPU-GPU transfers and accesses were already possible over the PCIe bus using Nvidia's Unified Virtual Addressing, the bus became an increasing system bottleneck as data sets continued to grow. Throughput could be improved further through the use of a PCIe switch.

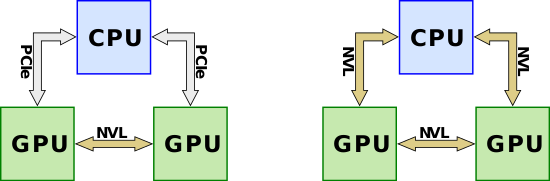

NVLink is designed to move GPU-GPU communication off the PCIe lanes. NVLink was also designed for CPU-GPU communication with higher bandwidth than PCIe. Although it is unlikely that NVLink will be implemented on an x86 system by either AMD or Intel, IBM has collaborated with Nvidia to support NVLink on its POWER microprocessors. On supported microprocessors, NVLink can eliminate PCIe entirely for all links.

Links

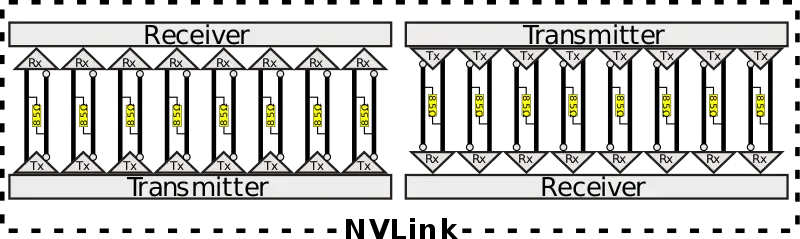

A single NVLink is a bidirectional interface comprising 8 differential pairs in each direction, for a total of 32 wires. The pairs are DC coupled and use an 85Ω differential termination with an embedded clock. To ease routing, NVLink supports lane reversal and lane polarity inversion, meaning the physical lane ordering and the polarity of each lane may be reversed between the two devices.
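The wire count and the effect of lane reversal and polarity inversion can be illustrated with a small toy model. This is only a sketch: the function names (`wires_per_link`, `wire`, `recover`) are hypothetical, lanes are modelled as lists of +1/-1 values, and the way real NVLink hardware detects and corrects reversed or inverted lanes is not described in this article.

```python
# Toy model of one NVLink sub-link (one direction). All names are illustrative.
PAIRS_PER_DIRECTION = 8   # differential pairs in each direction
WIRES_PER_PAIR = 2        # a differential pair uses two wires
DIRECTIONS = 2            # the link is bidirectional


def wires_per_link():
    """Total wires in one bidirectional link."""
    return PAIRS_PER_DIRECTION * WIRES_PER_PAIR * DIRECTIONS


def wire(tx_lanes, reversed_order, flipped):
    """Model the board routing: optionally reverse the lane order, then
    invert polarity on the receiver-side lane indices listed in `flipped`."""
    rx = list(reversed(tx_lanes)) if reversed_order else list(tx_lanes)
    return [-v if i in flipped else v for i, v in enumerate(rx)]


def recover(rx_lanes, reversed_order, flipped):
    """Undo the routing on the receiver: fix polarity first, then lane order."""
    fixed = [-v if i in flipped else v for i, v in enumerate(rx_lanes)]
    return list(reversed(fixed)) if reversed_order else fixed


if __name__ == "__main__":
    sent = [1, -1, 1, 1, -1, -1, 1, -1]
    received = wire(sent, reversed_order=True, flipped={0, 3})
    print(wires_per_link())
    print(recover(received, reversed_order=True, flipped={0, 3}) == sent)
```

Because the receiver can compensate for both effects, the board designer is free to route the pairs in whichever order and polarity is most convenient.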

Packet

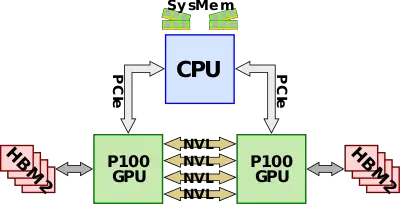

NVLink 1.0

NVLink 1.0 was first introduced with the P100 GPGPU based on the Pascal microarchitecture. The P100 comes with its own HBM memory in addition to being able to access system memory from the CPU side. The P100 has four NVLinks, each supporting up to 20 GB/s in each direction for a bidirectional bandwidth of 40 GB/s per link and a total aggregate bandwidth of 160 GB/s. In the most basic configuration, all four links are connected between two GPUs for 160 GB/s of GPU-GPU bandwidth, in addition to the PCIe lanes connected to the CPU for accessing system DRAM.
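The bandwidth figures above follow from simple multiplication, which the following minimal sketch spells out. The constant and function names are hypothetical and chosen only for illustration; the numbers are the ones quoted in the text.

```python
# NVLink 1.0 figures for the P100, as quoted in the text.
GBPS_PER_DIRECTION = 20   # GB/s per link, per direction
LINKS_PER_P100 = 4        # NVLinks on one P100


def link_bidirectional_gbps():
    """Bidirectional bandwidth of a single link in GB/s."""
    return 2 * GBPS_PER_DIRECTION


def aggregate_gbps(links=LINKS_PER_P100):
    """Aggregate bidirectional bandwidth over `links` links in GB/s."""
    return links * link_bidirectional_gbps()


if __name__ == "__main__":
    print(link_bidirectional_gbps())  # per-link bidirectional bandwidth
    print(aggregate_gbps())           # all four links combined
```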

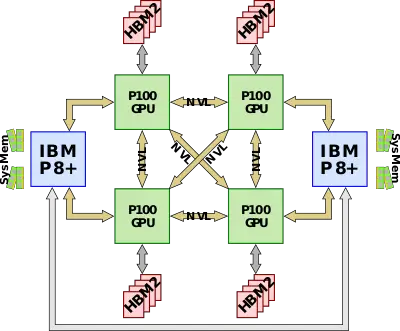

The first CPU to support NVLink natively was the IBM POWER8+, which allowed the NVLink interconnect to extend to the CPU, replacing the slower PCIe link. Since the P100 only has four NVLinks, a single link from each GPU can be used to connect that GPU to the CPU. A typical full-configuration node consists of four P100 GPUs and two POWER CPUs. The four GPUs are fully connected to each other, with each GPU's fourth link going to a CPU.
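The link budget of this node can be checked with a short sketch: three links per GPU go to the other three GPUs and the fourth goes to a CPU, which uses exactly the four links each P100 has. The device names and the assignment of two GPUs per CPU in `CPU_OF` are assumptions made for illustration; the text does not state which GPU attaches to which CPU.

```python
from itertools import combinations

GPUS = ["GPU0", "GPU1", "GPU2", "GPU3"]

# Assumption: the four GPUs are split evenly across the two CPUs.
CPU_OF = {"GPU0": "CPU0", "GPU1": "CPU0", "GPU2": "CPU1", "GPU3": "CPU1"}


def build_links():
    """Return every NVLink in the node as a (device, device) tuple."""
    links = list(combinations(GPUS, 2))          # full GPU-GPU mesh
    links += [(gpu, CPU_OF[gpu]) for gpu in GPUS]  # one CPU link per GPU
    return links


def links_used(links, device):
    """Count how many links terminate at `device`."""
    return sum(device in link for link in links)


if __name__ == "__main__":
    links = build_links()
    for gpu in GPUS:
        print(gpu, links_used(links, gpu))
```

Under these assumptions the node has six GPU-GPU links and four GPU-CPU links, and no GPU needs more than its four available NVLinks.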

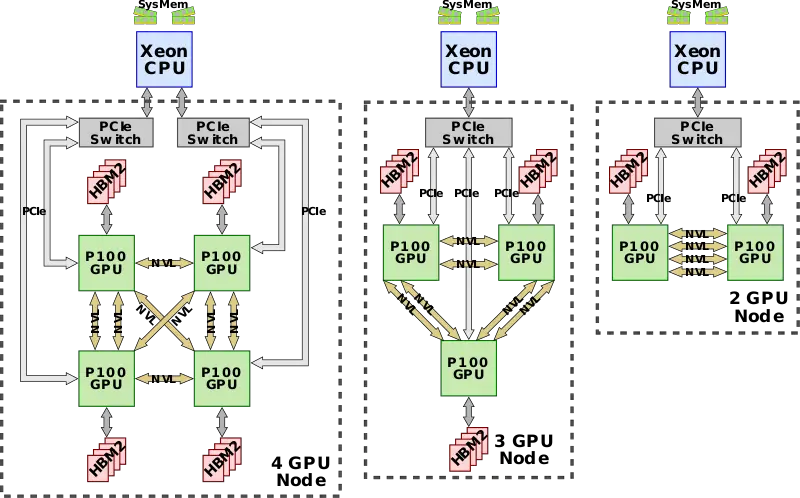

Since Intel-based CPUs do not support NVLink (and are unlikely to ever support it), several variations are possible, ranging from two to four P100 GPUs. In each configuration, the GPUs are fully connected to each other, and every two GPUs are hooked up to a single PCIe switch that is directly connected to the CPU. Each link is 40 GB/s bidirectional regardless of the configuration, and links may be aggregated: the more links used between any two GPUs, the higher the bandwidth between them.
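To see how aggregation plays out across these configurations, the sketch below divides a GPU's four links evenly among its peers in a fully connected group. The even split and the function name `per_peer` are assumptions for illustration; the text does not specify how a leftover link is used in the four-GPU case.

```python
LINK_GBPS = 40        # bidirectional GB/s per link, as quoted in the text
LINKS_PER_GPU = 4     # NVLinks on one P100


def per_peer(num_gpus, links_per_gpu=LINKS_PER_GPU):
    """Evenly split one GPU's links among its peers in a full mesh.

    Returns (links per peer, GB/s per peer, leftover links).
    """
    peers = num_gpus - 1
    links, spare = divmod(links_per_gpu, peers)
    return links, links * LINK_GBPS, spare


if __name__ == "__main__":
    for n in (2, 3, 4):
        print(n, per_peer(n))
```

With two GPUs all four links go to the single peer, with three GPUs each peer gets two links, and with four GPUs each peer gets one link with one link left over.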

NVLink 2.0

NVLink 2.0 was first introduced with the V100 GPGPU based on the Volta microarchitecture along with IBM's POWER9.